An introduction to the use of Bayes' rule in statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Unfortunately, Ox Educ is no more. Don't fret however as a whol

From playlist Bayesian statistics: a comprehensive course

Think more rationally with Bayes’ rule | Steven Pinker

The formula for rational thinking explained by Harvard professor Steven Pinker. Subscribe to Big Think on YouTube ► https://www.youtube.com/channel/UCvQECJukTDE2i6aCoMnS-Vg?sub_confirmation=1 Up next, The war on rationality ► https://youtu.be/qdzNKQwkp-Y In his explanation of Bayes' theo

From playlist Get smarter, faster

(ML 12.4) Bayesian model selection

Approaches to model selection from a Bayesian perspective: Bayesian model averaging (BMA), "Type II MAP", and Type II Maximum Likelihood (a.k.a. ML-II, a.k.a. the evidence approximation, a.k.a. empirical Bayes).

From playlist Machine Learning

Christine Keribin: Variational Bayes methods and algorithms - Part 1

Abstract: Bayesian posterior distributions can be numerically intractable, even by means of Markov chain Monte Carlo methods. Bayesian variational methods can then be used to compute directly (and fast) a deterministic approximation of these posterior distributions. In this course, I d

From playlist Probability and Statistics

Conditional Probability: Bayes’ Theorem – Disease Testing (Table and Formula)

This video shows how to determine conditional probability using a table and using Bayes' theorem. @mathipower4u

From playlist Probability
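As a rough illustration of the disease-testing calculation named in the entry above, the sketch below applies Bayes' theorem to a positive test result. The function name `posterior_given_positive` and all numbers (prevalence, sensitivity, specificity) are hypothetical and are not taken from the video.

```python
def posterior_given_positive(prevalence, sensitivity, specificity):
    """Return P(disease | positive test) using Bayes' theorem.

    prevalence  = P(disease)
    sensitivity = P(positive | disease)
    specificity = P(negative | no disease)
    """
    # Numerator: P(positive | disease) * P(disease)
    true_positive = sensitivity * prevalence
    # P(positive | no disease) * P(no disease)
    false_positive = (1.0 - specificity) * (1.0 - prevalence)
    # Denominator is P(positive), by the law of total probability
    return true_positive / (true_positive + false_positive)


# Hypothetical inputs chosen only for illustration
p = posterior_given_positive(prevalence=0.01, sensitivity=0.95, specificity=0.90)
print(f"P(disease | positive) = {p:.3f}")
```

With a low prevalence, most positive results come from the much larger healthy group, so the posterior stays far below the test's sensitivity.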

6 - Bayes' rule in inference - likelihood

Provides an introduction to Bayesian statistics - in particular the likelihood - by running through a simple example of the application of Bayes' rule to the case of inference over a binary parameter. If you are interested in seeing more of the material, arranged into a playlist, please v

From playlist Bayesian statistics: a comprehensive course

Bayes Factors: A ‘re-volution’ in psychology, Geoff Patching - Bayes@Lund 2018

Find more info about Bayes@Lund, including slides, here: https://bayesat.github.io/lund2018/bayes_at_lund_2018.html

From playlist Bayes@Lund 2018

Mean field asymptotics in high-dimensional statistics – A. Montanari – ICM2018

Probability and Statistics Invited Lecture 12.16 Mean field asymptotics in high-dimensional statistics: From exact results to efficient algorithms Andrea Montanari Abstract: Modern data analysis challenges require building complex statistical models with massive numbers of parameters. It

From playlist Probability and Statistics

Variational Bayes: An Overview and Risk-Sensitive Formulations by Harsha Honnappa

PROGRAM: ADVANCES IN APPLIED PROBABILITY ORGANIZERS: Vivek Borkar, Sandeep Juneja, Kavita Ramanan, Devavrat Shah, and Piyush Srivastava DATE & TIME: 05 August 2019 to 17 August 2019 VENUE: Ramanujan Lecture Hall, ICTS Bangalore Applied probability has seen a revolutionary growth in resear

From playlist Advances in Applied Probability 2019

7 Bayes' rule in inference the prior and denominator

This provides a short introduction to the use of Bayes' rule in inference, by going through an example where the prior and denominator in the formula are explained. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/play

From playlist Bayesian statistics: a comprehensive course

Daniel Yekutieli: Hierarchical Bayes Modeling for Large-Scale Inference

CIRM VIRTUAL EVENT Recorded during the meeting "Mathematical Methods of Modern Statistics 2" on June 03, 2020 by the Centre International de Rencontres Mathématiques (Marseille, France) Filmmaker: Guillaume Hennenfent Find this video and other talks given by worldwide mathematicians

From playlist Virtual Conference

05-5 Inverse modeling : sequential importance re-sampling

Introduction to sequential importance resampling

From playlist QUSS GS 260

Bayes Formula Explained with Examples

In this video I'm gonna try to explain Bayes' formula. Timestamps: 00:00 - Where does Bayes' formula come from? 03:33 - Bayes' formula with 3 variables 04:05 - The law of total probability 05:06 - Disease example 08:31 - Coin flip examples

From playlist Summer of Math Exposition Youtube Videos

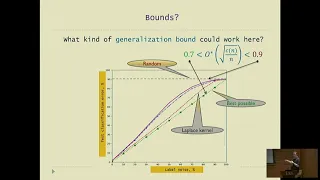

From Classical Statistics to Modern ML: the Lessons of Deep Learning - Mikhail Belkin

Workshop on Theory of Deep Learning: Where next? Topic: From Classical Statistics to Modern ML: the Lessons of Deep Learning Speaker: Mikhail Belkin Affiliation: Ohio State University Date: October 16, 2019 For more videos, please visit http://video.ias.edu

From playlist Mathematics

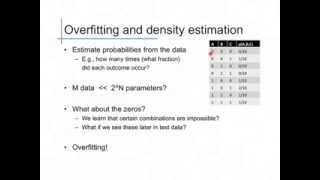

Bayes Classifiers (2): Naive Bayes

Complexity and overfitting in Bayes classifiers; naive Bayes models

From playlist cs273a

Naive Bayes Classifier in Python | Naive Bayes Algorithm | Machine Learning Algorithm | Edureka

** Machine Learning Training with Python: https://www.edureka.co/data-science-python-certification-course ** This Edureka video will provide you with a detailed and comprehensive knowledge of Naive Bayes Classifier Algorithm in python. At the end of the video, you will learn from a demo ex

From playlist Machine Learning Algorithms in Python (With Demo) | Edureka

(ML 3.6) The Big Picture (part 2)

How the core concepts and methods in machine learning arise naturally in the course of solving the decision theory problem. A playlist of these Machine Learning videos is available here: http://www.youtube.com/my_playlists?p=D0F06AA0D2E8FFBA

From playlist Machine Learning

A short derivation of Bayes' rule is given here. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Unfortunately, Ox Educ is no more. Don't fret however as a whole load o

From playlist Bayesian statistics: a comprehensive course

Bayesian optimisation for likelihood-free cosmological (...) - Leclercq - Workshop 2 - CEB T3 2018

Leclercq (Imperial College) / 22.10.2018 Bayesian optimisation for likelihood-free cosmological inference. You can join us on social media to follow our news. Facebook : https://www.facebook.com/InstitutHenriPoincare/ Twitter

From playlist 2018 - T3 - Analytics, Inference, and Computation in Cosmology

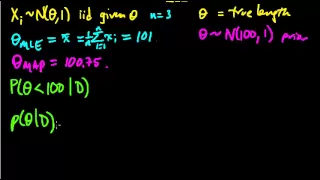

(ML 7.1) Bayesian inference - A simple example

Illustration of the main idea of Bayesian inference, in the simple case of a univariate Gaussian with a Gaussian prior on the mean (and known variances).

From playlist Machine Learning
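The setting described in the entry above (a univariate Gaussian likelihood with known variance and a Gaussian prior on the mean) has a closed-form posterior. The sketch below shows the standard conjugate update; the function name `gaussian_mean_posterior` and the data and prior values are hypothetical and do not come from the video.

```python
def gaussian_mean_posterior(data, prior_mean, prior_var, noise_var):
    """Posterior over the mean of a Gaussian with known noise variance.

    Model (assumed): x_i ~ N(mu, noise_var), mu ~ N(prior_mean, prior_var).
    Returns the posterior mean and variance of mu.
    """
    n = len(data)
    # Precisions (inverse variances) add: one prior term plus n data terms
    post_precision = 1.0 / prior_var + n / noise_var
    post_var = 1.0 / post_precision
    # Posterior mean is a precision-weighted blend of prior mean and data
    post_mean = post_var * (prior_mean / prior_var + sum(data) / noise_var)
    return post_mean, post_var


# Hypothetical observations and prior, for illustration only
post_mean, post_var = gaussian_mean_posterior(
    [1.2, 0.8, 1.0], prior_mean=0.0, prior_var=1.0, noise_var=1.0
)
print(post_mean, post_var)
```

The posterior mean lies between the prior mean and the sample mean, and it moves toward the sample mean as more observations arrive.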