35 - Normal prior and likelihood - posterior predictive distribution

This video provides a derivation of the normal posterior predictive distribution for the case of a normal prior distribution and likelihood. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4o

From playlist Bayesian statistics: a comprehensive course
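
For reference, the standard result for this setting can be stated compactly. This is a sketch under the assumption that the observation variance $\sigma^2$ is known; the symbols $\mu_0$, $\tau_0^2$, $\mu_n$, $\tau_n^2$ are notation chosen here and may differ from the video's.

$$
\begin{aligned}
\mu &\sim \mathcal{N}(\mu_0, \tau_0^2), \qquad x_i \mid \mu \sim \mathcal{N}(\mu, \sigma^2), \quad i = 1, \dots, n \\
\frac{1}{\tau_n^2} &= \frac{1}{\tau_0^2} + \frac{n}{\sigma^2}, \qquad
\mu_n = \tau_n^2 \left( \frac{\mu_0}{\tau_0^2} + \frac{n \bar{x}}{\sigma^2} \right) \\
\tilde{x} \mid x_1, \dots, x_n &\sim \mathcal{N}\!\left(\mu_n,\ \tau_n^2 + \sigma^2\right)
\end{aligned}
$$

The predictive variance is the sum of the posterior uncertainty about $\mu$ and the sampling noise of a new observation.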

29 - Posterior predictive distribution: example Disease

This video provides an introduction to the concept of posterior predictive distributions, using the example of disease prevalence in a population. Here we consider the case of a beta prior and binomial likelihood, resulting in a beta-binomial posterior predictive distribution. If you are interested in seeing mo

From playlist Bayesian statistics: a comprehensive course
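
The beta prior and binomial likelihood case described above can be sketched in a few lines of Python. This is a minimal illustration, not the video's own code: the function names, the Beta(1, 1) prior, and the counts (3 positives in 20 tests) are all hypothetical values chosen for the example.

```python
from math import comb, exp, lgamma


def log_beta(a, b):
    """Logarithm of the Beta function B(a, b)."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def posterior_predictive(k_new, n_new, a, b, k_obs, n_obs):
    """P(k_new positives in n_new future tests | k_obs positives in n_obs tests).

    Assumes a Beta(a, b) prior on the prevalence and a binomial likelihood,
    so the posterior is Beta(a + k_obs, b + n_obs - k_obs) and the
    posterior predictive is beta-binomial.
    """
    a_post = a + k_obs
    b_post = b + n_obs - k_obs
    log_ratio = log_beta(a_post + k_new, b_post + n_new - k_new) - log_beta(a_post, b_post)
    return comb(n_new, k_new) * exp(log_ratio)


# Hypothetical data: flat Beta(1, 1) prior, 3 positives observed in 20 tests.
probs = [posterior_predictive(k, 10, 1.0, 1.0, 3, 20) for k in range(11)]
for k, p in enumerate(probs):
    print(f"P({k} positives in next 10 tests) = {p:.4f}")
```

For a single future test, the predictive probability of a positive reduces to the posterior mean of the prevalence, which is a quick sanity check on the formula.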

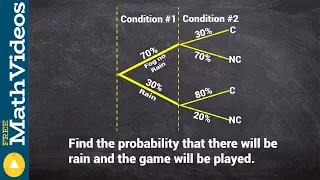

Learn to find the "or" probability from a tree diagram

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

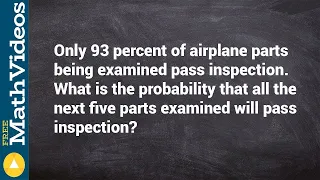

How to find the probability of consecutive events

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

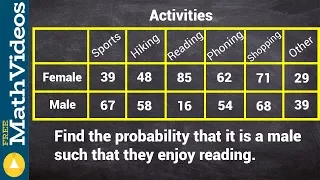

Finding the conditional probability from a two way frequency table

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

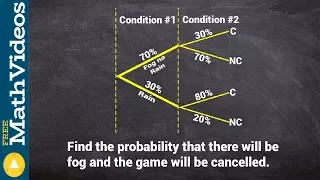

Finding the conditional probability from a tree diagram

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

Ex: Determine Conditional Probability from a Table

This video provides two examples of how to determine conditional probability using information given in a table.

From playlist Probability
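
As a rough illustration of reading a conditional probability off a table, the sketch below divides one cell count by the total of the row or column being conditioned on. The table contents and the function names are hypothetical and are not taken from the video.

```python
from fractions import Fraction

# Hypothetical two-way frequency table: (row category, column category) -> count.
table = {
    ("male", "likes_tea"): 20,
    ("male", "likes_coffee"): 30,
    ("female", "likes_tea"): 35,
    ("female", "likes_coffee"): 15,
}


def p_col_given_row(table, col, row):
    """P(col | row): the cell count divided by the total of that row."""
    row_total = sum(n for (r, _), n in table.items() if r == row)
    return Fraction(table[(row, col)], row_total)


def p_row_given_col(table, row, col):
    """P(row | col): the cell count divided by the total of that column."""
    col_total = sum(n for (_, c), n in table.items() if c == col)
    return Fraction(table[(row, col)], col_total)


print(p_col_given_row(table, "likes_tea", "female"))  # 35 / 50 -> 7/10
print(p_row_given_col(table, "female", "likes_tea"))  # 35 / 55 -> 7/11
```

The two results differ because the conditioning event changes the denominator: the first restricts attention to one row, the second to one column.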

(New Version Available) Conditional Probability

New Version: Fixes an error at 7:00: https://youtu.be/WgsxhWPAo4c This video explains how to determine conditional probability. http://mathispower4u.yolasite.com/

From playlist Counting and Probability

How to find the conditional probability from a tree diagram

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

DeepMind x UCL | Deep Learning Lectures | 11/12 | Modern Latent Variable Models

This lecture, by DeepMind Research Scientist Andriy Mnih, explores latent variable models, a powerful and flexible framework for generative modelling. After introducing this framework along with the concept of inference, which is central to it, Andriy focuses on two types of modern latent

From playlist Learning resources

18. Bayesian Statistics (cont.)

MIT 18.650 Statistics for Applications, Fall 2016 View the complete course: http://ocw.mit.edu/18-650F16 Instructor: Philippe Rigollet In this lecture, Prof. Rigollet talked about Bayesian confidence regions and Bayesian estimation. License: Creative Commons BY-NC-SA More information at

From playlist MIT 18.650 Statistics for Applications, Fall 2016

Machine learning - Importance sampling and MCMC I

Importance sampling and Markov chain Monte Carlo (MCMC). Application to logistic regression. Slides available at: http://www.cs.ubc.ca/~nando/540-2013/lectures.html Course taught in 2013 at UBC by Nando de Freitas

From playlist Machine Learning 2013

Bayesian Statistics: An Introduction

See all my videos here: http://www.zstatistics.com/videos/ 0:00 Introduction 2:25 Frequentist vs Bayesian 5:55 Bayes Theorem 10:45 Visual Example 15:05 Bayesian Inference for a Normal Mean 24:30 Conjugate priors 32:55 Credible Intervals

From playlist Statistical Inference (7 videos)

Kerrie Mengersen: Bayesian Modelling

Abstract: This tutorial will be a beginner’s introduction to Bayesian statistical modelling and analysis. Simple models and computational tools will be described, followed by a discussion about implementing these approaches in practice. A range of case studies will be presented and possibl

From playlist Probability and Statistics

DSI Seminar | Adaptive Contraction Rates and Model Selection Consistency of Variational Posteriors

In this DSI Seminar Series talk from June 2021, University of Notre Dame associate professor Lizhen Lin discusses adaptive inference based on variational Bayes. Abstract: We propose a novel variational Bayes framework called adaptive variational Bayes, which can operate on a collection of

From playlist DSI Virtual Seminar Series

SLT Supplemental - Seminar 2 - Markov Chain Monte Carlo

This series provides supplemental mathematical background material for the seminar on Singular Learning Theory. In this seminar Liam Carroll introduces us to Markov Chain Monte Carlo, a method for sampling from the Bayesian posterior. The webpage for this seminar is http://metauni.org/pos

From playlist Metauni

O'Reilly Webcast: Bayesian Statistics Made Simple

Join Allen Downey, author of Think Stats: Probability and Statistics for Programmers, for an introduction to Bayesian statistics using Python. Bayesian statistical methods are becoming more common and more important, but there are not many resources to help beginners get started. People who

From playlist O'Reilly Webcasts 2

Statistical Rethinking 2022 Lecture 02 - Bayesian Inference

Bayesian updating, sampling posterior distributions, computing posterior and prior predictive distributions Course materials: https://github.com/rmcelreath/stat_rethinking_2022 Intro music: https://www.youtube.com/watch?v=QH_VKWStK98 Chapters: 00:00 Introduction 04:53 Garden of forking

From playlist Statistical Rethinking 2022

Using a contingency table to find the conditional probability

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of outcomes divided by the total possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability