Introduction to Regression Analysis

This video introduces regression analysis and discusses how to determine whether a given regression equation is a good model using r and r².

From playlist Performing Linear Regression and Correlation
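Here is a small Python sketch of the r and r² check, run on made-up data. It computes Pearson's r, fits the least-squares line, and confirms that the line's coefficient of determination R² equals r². That equality holds for simple linear regression with an intercept. The data and function names are illustrative assumptions, not taken from the video.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient r for two equal-length samples."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def r_squared(ys, preds):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    my = sum(ys) / len(ys)
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, preds))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


# Hypothetical example data, made up for illustration.
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

# Least-squares line y_hat = a + b*x with slope b = r * (s_y / s_x);
# the 1/(n-1) factors in the standard deviations cancel in the ratio.
r = pearson_r(xs, ys)
n = len(xs)
mx, my = sum(xs) / n, sum(ys) / n
sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
sy = math.sqrt(sum((y - my) ** 2 for y in ys))
b = r * sy / sx
a = my - b * mx
preds = [a + b * x for x in xs]

print(f"r = {r:.4f}, r^2 = {r * r:.4f}, R^2 of line = {r_squared(ys, preds):.4f}")
```

An r close to ±1, or equivalently an R² close to 1, means the line explains most of the variation in y. R² alone does not show whether a linear model is appropriate, so it is worth checking the residuals too.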

Linear regression is used to compare sets or pairs of numerical data points. We use it to find a correlation between variables.

From playlist Learning medical statistics with python and Jupyter notebooks

Can You Validate These Emails?

Email validation is a procedure that checks whether an email address is valid and deliverable. Can you validate these emails?

From playlist Fun
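Email validation in practice typically has two layers. The first is a syntax check. The second is a deliverability check, for example looking up the domain's MX records or probing the mail server. The Python sketch below covers only the syntax layer. Its pattern is deliberately simplified and is an assumption of this example, not the full RFC 5322 grammar. It will reject some unusual but valid addresses and accept some that cannot receive mail.

```python
import re

# Simplified pattern (an assumption, not RFC 5322): a local part of common
# characters, "@", then dot-separated domain labels that start and end with a
# letter or digit, ending in an alphabetic top-level domain of 2+ letters.
EMAIL_PATTERN = re.compile(
    r"[A-Za-z0-9._%+-]+@(?:[A-Za-z0-9](?:[A-Za-z0-9-]*[A-Za-z0-9])?\.)+[A-Za-z]{2,}"
)


def looks_like_email(address: str) -> bool:
    """Return True if the whole string passes the simplified syntax check."""
    return EMAIL_PATTERN.fullmatch(address) is not None


for candidate in ["alice@example.com", "carol@localhost", "eve@@example.com"]:
    print(candidate, looks_like_email(candidate))
```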

What are the different ways to perform data validation in machine learning?


From playlist Quick Machine Learning Concepts

An Introduction to Linear Regression Analysis

Tutorial introducing the idea of linear regression analysis and the least squares method, a topic typically covered in a statistics class. Playlist on Linear Regression: http://www.youtube.com/course?list=ECF596A4043DBEAE9C Like us on: http://www.facebook.com/PartyMoreStudyLess Created by David Lon

From playlist Linear Regression.
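The least squares method chooses the intercept a and slope b that minimize the sum of squared vertical residuals. Setting both partial derivatives to zero gives the familiar closed-form estimates. This is the standard derivation, written out here as a worked example rather than quoted from the tutorial:

```latex
S(a, b) = \sum_{i=1}^{n} \left( y_i - a - b x_i \right)^2

\begin{aligned}
\frac{\partial S}{\partial a} &= -2 \sum_{i=1}^{n} \left( y_i - a - b x_i \right) = 0
  \;\Rightarrow\; a = \bar{y} - b \bar{x}, \\
\frac{\partial S}{\partial b} &= -2 \sum_{i=1}^{n} x_i \left( y_i - a - b x_i \right) = 0
  \;\Rightarrow\; b = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}.
\end{aligned}
```

The second result follows by substituting a = ȳ − b x̄ into the second equation and using Σ(yᵢ − ȳ) = 0.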

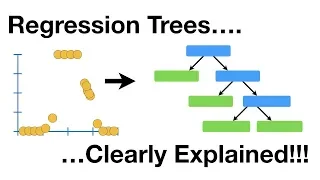

Regression Trees, Clearly Explained!!!

Regression Trees are one of the fundamental machine learning techniques that more complicated methods, like Gradient Boost, are based on. They are useful for times when there isn't an obviously linear relationship between what you want to predict, and the things you are using to make the p

From playlist StatQuest
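A regression tree grows by repeatedly choosing the split that most reduces the sum of squared residuals, with each side predicting the mean of its training targets. The Python sketch below finds that best threshold for a single numeric feature on made-up, step-shaped data. It shows one split (a "stump") only. It omits the recursion, minimum-leaf-size rules, and multi-feature search that a full tree would need. The function names are hypothetical.

```python
def sum_squared_residuals(values):
    """Squared residuals around the mean, i.e. the error if a leaf predicts its mean."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def best_split(xs, ys):
    """Return (threshold, total_ssr) for the single-feature split with the lowest SSR.

    Candidate thresholds are midpoints between consecutive distinct sorted x values.
    """
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold can separate equal x values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        ssr = sum_squared_residuals(left) + sum_squared_residuals(right)
        if best is None or ssr < best[1]:
            best = (threshold, ssr)
    return best


# Hypothetical data: the response jumps between x = 3 and x = 4.
doses = [1, 2, 3, 4, 5, 6]
effect = [1, 1, 1, 10, 10, 10]
print(best_split(doses, effect))
```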

Regression: Model Selection and Validation, Part 1

Data Science for Biologists: Regression: Model Selection and Validation, Part 1
Course website: data4bio.com
Instructors: Nathan Kutz (faculty.washington.edu/kutz), Bing Brunton (faculty.washington.edu/bbrunton), Steve Brunton (faculty.washington.edu/sbrunton)

From playlist Data Science for Biologists

Frank Noé: "Intro to Machine Learning (Part 1/2)"

Watch part 2/2 here: https://youtu.be/7TZnGQrNF6g
Machine Learning for Physics and the Physics of Learning Tutorials 2019: "Intro to Machine Learning (Part 1/2)", Frank Noé, Freie Universität Berlin. Institute for Pure and Applied Mathematics, UCLA, September 5, 2019. For more information:

From playlist Machine Learning for Physics and the Physics of Learning 2019

Deep Learning Lecture 1.2 - Intro Shallow ML

Deep Learning Lecture Intro:
- Shallow ML
- Learning Problem
- Linear Least Squares
- Hyperparameter Selection

From playlist Deep Learning Lecture

Assumptions and Conditions for Regression

From playlist Regression Analysis

Frank Noé: "Fundamentals of Artificial Intelligence and Machine Learning" (Part 1/2)

Watch part 2/2 here: https://youtu.be/gSLQ_2uFSiA
Mathematical Challenges and Opportunities for Autonomous Vehicles Tutorials 2020: "Fundamentals of Artificial Intelligence and Machine Learning" (Part 1/2), Frank Noé, Freie Universität Berlin. Institute for Pure and Applied Mathematics, U

From playlist Mathematical Challenges and Opportunities for Autonomous Vehicles 2020

Statistical Learning: 6.R.4 Ridge Regression and Lasso

Statistical Learning, featuring Deep Learning, Survival Analysis and Multiple Testing. You can take Statistical Learning as an online course on edX and choose a verified track to earn a certificate on completion: https://www.edx.org/course/statistical-learning

From playlist Statistical Learning
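Ridge and lasso both shrink coefficients, but in different ways. For a single centered predictor with no intercept, each has a closed form. Ridge divides Σxy by Σx² + λ, while lasso soft-thresholds Σxy by λ, which can set the coefficient exactly to zero. The Python sketch below assumes the objectives ½Σ(y − bx)² + ½λb² for ridge and ½Σ(y − bx)² + λ|b| for lasso. Other sources scale the penalty differently, which changes the meaning of λ but not the shape of the result. The data and names are illustrative.

```python
def soft_threshold(z, lam):
    """Shrink z toward zero by lam, returning exactly 0.0 inside [-lam, lam]."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def ridge_one_feature(xs, ys, lam):
    """Minimizer of 0.5*sum((y - b*x)^2) + 0.5*lam*b^2 (centered data, no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def lasso_one_feature(xs, ys, lam):
    """Minimizer of 0.5*sum((y - b*x)^2) + lam*|b| (centered data, no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return soft_threshold(sxy, lam) / sxx


xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # ordinary least squares slope is 2.0
for lam in [0.0, 14.0, 30.0]:
    print(lam, ridge_one_feature(xs, ys, lam), lasso_one_feature(xs, ys, lam))
```

At λ = 30 the lasso slope is exactly 0.0, while the ridge slope is only shrunk. This is why lasso is often described as performing variable selection and ridge is not.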

From playlist Thinking about Data

Regularization Part 1: Ridge (L2) Regression

Ridge Regression is a neat little way to ensure you don't overfit your training data: essentially, you are desensitizing your model to the training data. It can also help you solve equations that would otherwise be unsolvable (for example, when there are more parameters than data points), and if that isn't bad to the bone, I don't know what is. This StatQuest follows up on

From playlist StatQuest
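The point about otherwise unsolvable equations can be made concrete. With more coefficients than data points, XᵀX is singular, so ordinary least squares has no unique solution. Adding λI makes the matrix invertible for any λ > 0. The Python sketch below works through the case of two coefficients and one data point using the closed form (XᵀX + λI)⁻¹Xᵀy. The data and function name are hypothetical. A real implementation would use a linear-algebra library rather than a hand-written 2×2 inverse.

```python
def ridge_two_coefficients(rows, ys, lam):
    """Closed-form ridge (X^T X + lam*I)^(-1) X^T y for two coefficients, no intercept.

    rows is a list of (x0, x1) feature pairs; raises ValueError if the
    regularized matrix is singular (e.g. lam == 0 with too few data points).
    """
    # Entries of the symmetric 2x2 matrix X^T X, plus lam on the diagonal.
    a = sum(r[0] * r[0] for r in rows) + lam
    b = sum(r[0] * r[1] for r in rows)
    d = sum(r[1] * r[1] for r in rows) + lam
    # X^T y
    g0 = sum(r[0] * y for r, y in zip(rows, ys))
    g1 = sum(r[1] * y for r, y in zip(rows, ys))
    det = a * d - b * b
    if det == 0:
        raise ValueError("X^T X + lam*I is singular: no unique least-squares solution")
    # Inverse of [[a, b], [b, d]] is [[d, -b], [-b, a]] / det.
    return ((d * g0 - b * g1) / det, (-b * g0 + a * g1) / det)


rows = [(1.0, 2.0)]  # one data point, two unknown coefficients
ys = [5.0]
try:
    ridge_two_coefficients(rows, ys, lam=0.0)  # plain least squares fails here
except ValueError as err:
    print("lam = 0:", err)
print("lam = 1:", ridge_two_coefficients(rows, ys, lam=1.0))
```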

Applied ML 2020 - 10 - Calibration, Imbalanced data

Class materials at https://www.cs.columbia.edu/~amueller/comsw4995s20/schedule/

From playlist Applied Machine Learning 2020
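The lecture title does not say which techniques it covers for imbalanced data. One common option is to weight each class inversely to its frequency. The Python sketch below computes weights with n_samples / (n_classes × count_c), the heuristic scikit-learn documents for class_weight="balanced". The function name is hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight for class c: n_samples / (n_classes * count_c)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}


# Hypothetical 90/10 imbalanced label set.
labels = [0] * 90 + [1] * 10
weights = balanced_class_weights(labels)
print(weights)
```

With these weights, each class contributes the same total weight (count × weight = n_samples / n_classes), so the minority class is no longer swamped during training.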

Selecting the best model in scikit-learn using cross-validation

In this video, we'll learn about K-fold cross-validation and how it can be used for selecting optimal tuning parameters, choosing between models, and selecting features. We'll compare cross-validation with the train/test split procedure, and we'll also discuss some variations of cross-vali

From playlist Machine learning in Python with scikit-learn
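The K-fold procedure can be sketched in plain Python. Split the indices into k folds, train on k − 1 of them, score on the held-out fold, and average the scores. The sketch uses cross-validated mean squared error to compare two hypothetical models: one always predicts the training mean, the other is a least-squares line. In scikit-learn you would normally use sklearn.model_selection.KFold and cross_val_score instead. The fold layout here (contiguous, unshuffled, with the first n mod k folds one element larger) is meant to mirror KFold's default behavior, but treat it as an assumption of this sketch.

```python
def k_fold_indices(n, k):
    """Split range(n) into k contiguous folds whose sizes differ by at most one."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def fit_mean(xs, ys):
    """Baseline model: always predict the mean of the training targets."""
    m = sum(ys) / len(ys)
    return lambda x: m


def fit_line(xs, ys):
    """Least-squares line y = a + b*x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


def cross_val_mse(xs, ys, fit, k):
    """Average held-out mean squared error over k folds."""
    scores = []
    for test_idx in k_fold_indices(len(xs), k):
        held_out = set(test_idx)
        train_x = [xs[i] for i in range(len(xs)) if i not in held_out]
        train_y = [ys[i] for i in range(len(xs)) if i not in held_out]
        predict = fit(train_x, train_y)
        scores.append(sum((ys[i] - predict(xs[i])) ** 2 for i in test_idx) / len(test_idx))
    return sum(scores) / len(scores)


xs = list(range(10))
ys = [2 * x + 1 for x in xs]  # hypothetical, exactly linear data
print("mean model:", cross_val_mse(xs, ys, fit_mean, k=5))
print("line model:", cross_val_mse(xs, ys, fit_line, k=5))
```

The model with the lower cross-validated error is preferred. The same loop can score different tuning-parameter values or feature subsets.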

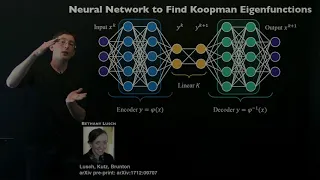

Koopman Spectral Analysis (Representations)

In this video, we explore how to obtain finite-dimensional representations of the Koopman operator from data, using regression. This includes the use of sparse regression and neural networks, and highlights the importance of cross-validating. https://www.eigensteve.com/

From playlist Koopman Analysis
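In its simplest form, a finite-dimensional approximation of this kind is a least-squares regression for a matrix A with x_{k+1} ≈ A x_k. This is the core step of dynamic mode decomposition (DMD) when the observables are just the state itself. The Python sketch below recovers A from a simulated 2-D linear trajectory via A = (Σ x_{k+1} x_kᵀ)(Σ x_k x_kᵀ)⁻¹. It does not include the sparse regression, neural-network observables, or cross-validation discussed in the video. The system matrix and function names are made up for illustration.

```python
def simulate(A, x0, steps):
    """Generate snapshots x_0, ..., x_steps of the linear map x_{k+1} = A x_k."""
    states = [x0]
    for _ in range(steps):
        x = states[-1]
        states.append((A[0][0] * x[0] + A[0][1] * x[1],
                       A[1][0] * x[0] + A[1][1] * x[1]))
    return states


def fit_linear_operator(snapshots):
    """Least-squares A minimizing sum_k ||x_{k+1} - A x_k||^2 for 2-D states."""
    X, Y = snapshots[:-1], snapshots[1:]
    # G = sum_k x_k x_k^T, the 2x2 Gram matrix of the "before" states.
    g00 = sum(x[0] * x[0] for x in X)
    g01 = sum(x[0] * x[1] for x in X)
    g11 = sum(x[1] * x[1] for x in X)
    det = g00 * g11 - g01 * g01
    if det == 0:
        raise ValueError("snapshots do not span 2-D, so G is singular")
    g_inv = [[g11 / det, -g01 / det], [-g01 / det, g00 / det]]
    # H = sum_k x_{k+1} x_k^T, with H[i][j] = sum_k y_i * x_j.
    h = [[sum(y[i] * x[j] for x, y in zip(X, Y)) for j in range(2)] for i in range(2)]
    # A = H G^{-1}
    return [[sum(h[i][m] * g_inv[m][j] for m in range(2)) for j in range(2)]
            for i in range(2)]


A_true = [[0.9, 0.1], [-0.2, 0.8]]  # hypothetical decaying, rotating dynamics
snapshots = simulate(A_true, (1.0, 0.0), steps=20)
A_est = fit_linear_operator(snapshots)
print(A_est)
```

For noise-free linear data the regression recovers A up to rounding error. With nonlinear dynamics, the same regression applied to a richer set of observables gives the kind of finite-dimensional Koopman approximation the video describes.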

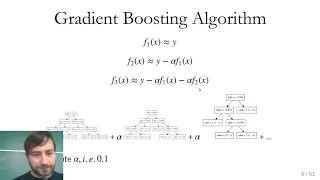

Applied Machine Learning 2019 - Lecture 09 - Gradient boosting; Calibration

Gradient boosting and "extreme" gradient boosting; calibration curves and calibrating classifiers with CalibratedClassifierCV. Class website with slides and more materials: https://www.cs.columbia.edu/~amueller/comsw4995s19/schedule/

From playlist Applied Machine Learning - Spring 2019
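A calibration curve bins the predicted probabilities. In each bin, it compares the mean predicted probability with the observed fraction of positives. A well-calibrated classifier lies close to the diagonal. The Python sketch below does this with uniform bins on made-up predictions. scikit-learn provides sklearn.calibration.calibration_curve for this purpose, and CalibratedClassifierCV for recalibrating a classifier. This hand-written version and its name are illustrative only.

```python
def calibration_bins(y_true, y_prob, n_bins=5):
    """Return (mean_predicted, fraction_positive) lists for each non-empty uniform bin."""
    prob_sums = [0.0] * n_bins
    positives = [0] * n_bins
    counts = [0] * n_bins
    for y, p in zip(y_true, y_prob):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls into the last bin
        prob_sums[idx] += p
        positives[idx] += y
        counts[idx] += 1
    mean_pred, frac_pos = [], []
    for s, k, c in zip(prob_sums, positives, counts):
        if c:  # skip empty bins
            mean_pred.append(s / c)
            frac_pos.append(k / c)
    return mean_pred, frac_pos


# Hypothetical predictions from an overconfident classifier.
y_prob = [0.1] * 5 + [0.9] * 5
y_true = [0, 0, 0, 0, 1, 1, 1, 1, 1, 0]
print(calibration_bins(y_true, y_prob))
```

Here the 0.1 bin contains 20% positives and the 0.9 bin contains 80%. This hypothetical model is therefore overconfident at both ends, which is the kind of gap recalibration aims to close.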