Vector Auto Regression : Time Series Talk

Let's take a look at the basics of the vector auto regression model in time series analysis! Like, subscribe, and hit that bell to get all the latest videos from ritvikmath. Check out my Medium: https://medium.com/@ritvikmathematics

From playlist Time Series Analysis
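
For orientation, a first-order vector autoregression, VAR(1), for two series can be written as below. This is a minimal sketch in standard textbook notation. The symbols $y_{1,t}$, $y_{2,t}$, $c_i$, $a_{ij}$, and $\varepsilon_{i,t}$ are assumed here and are not taken from the video.

```latex
\begin{aligned}
y_{1,t} &= c_1 + a_{11}\, y_{1,t-1} + a_{12}\, y_{2,t-1} + \varepsilon_{1,t} \\
y_{2,t} &= c_2 + a_{21}\, y_{1,t-1} + a_{22}\, y_{2,t-1} + \varepsilon_{2,t}
\end{aligned}
\qquad\Longleftrightarrow\qquad
\mathbf{y}_t = \mathbf{c} + A\,\mathbf{y}_{t-1} + \boldsymbol{\varepsilon}_t
```

Each series is regressed on its own lag and on the lag of the other series, which is what distinguishes a VAR from fitting separate univariate AR models.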

Vectors | Lecture 1 | Vector Calculus for Engineers

Defines vectors, vector addition and vector subtraction. Join me on Coursera: https://www.coursera.org/learn/vector-calculus-engineers Lecture notes at http://www.math.ust.hk/~machas/vector-calculus-for-engineers.pdf Subscribe to my channel: http://www.youtube.com/user/jchasnov?sub_con

From playlist Vector Calculus for Engineers

In this second part on Motion, we take a look at calculating the velocity and position vectors when given the acceleration vector and initial values for velocity and position. It involves, as you might imagine, some integration. Just remember that when calculating the indefinite integral o

From playlist Life Science Math: Vectors
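
As a hedged sketch of the integration the description mentions, the standard relations between acceleration, velocity, and position are written below. The symbols $\mathbf{a}$, $\mathbf{v}$, $\mathbf{r}$, $\mathbf{v}_0$, and $\mathbf{r}_0$ are notation assumed here, not taken from the video.

```latex
\mathbf{v}(t) = \mathbf{v}_0 + \int_0^t \mathbf{a}(s)\,ds,
\qquad
\mathbf{r}(t) = \mathbf{r}_0 + \int_0^t \mathbf{v}(s)\,ds
```

The initial values $\mathbf{v}_0 = \mathbf{v}(0)$ and $\mathbf{r}_0 = \mathbf{r}(0)$ fix the constants of integration, and each integral is taken component by component.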

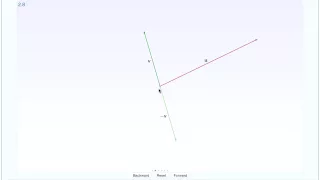

This is an interactive illustration of vector subtraction. The clip is from the book "Immersive Linear Algebra" at http://www.immersivemath.com.

From playlist Chapter 2 - Vectors

Vector Calculus 1: What Is a Vector?

https://bit.ly/PavelPatreon https://lem.ma/LA - Linear Algebra on Lemma http://bit.ly/ITCYTNew - Dr. Grinfeld's Tensor Calculus textbook https://lem.ma/prep - Complete SAT Math Prep

From playlist Vector Calculus

Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention (Paper Explained)

#ai #attention #transformer #deeplearning Transformers are famous for two things: Their superior performance and their insane requirements of compute and memory. This paper reformulates the attention mechanism in terms of kernel functions and obtains a linear formulation, which reduces th

From playlist Papers Explained

This is a small game that illustrates the concept of a vector. The clip is from the book "Immersive Linear Algebra" at http://www.immersivemath.com.

From playlist Chapter 2 - Vectors

Autoregressive Models: The Yule-Walker Equations

http://AllSignalProcessing.com for more great signal processing content, including concept/screenshot files, quizzes, MATLAB and data files. The Yule-Walker equations relate the autocovariance of a random signal to the autoregressive (AR) model parameters. They can be used to estimate A

From playlist Random Signal Characterization
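
To make the relation concrete, here is a sketch of the Yule-Walker equations for a real, zero-mean, stationary AR($p$) process. The sign convention and the symbols $a_k$, $w_t$, $\sigma_w^2$, and $r(m)$ are assumptions chosen for this illustration. The video may use a different convention.

```latex
x_t = \sum_{k=1}^{p} a_k\, x_{t-k} + w_t,
\qquad r(m) = \mathbb{E}\left[x_t\, x_{t-m}\right]

r(m) = \sum_{k=1}^{p} a_k\, r(m-k) \quad (m = 1, \dots, p),
\qquad
r(0) = \sum_{k=1}^{p} a_k\, r(k) + \sigma_w^2
```

Here $w_t$ is white noise with variance $\sigma_w^2$ and $r(-m) = r(m)$. The first $p$ equations are linear in the coefficients $a_k$, so replacing $r(m)$ with sample autocovariances gives estimates of the AR parameters.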

Statistical Learning: 10.R.4 Recurrent Neural Networks

Statistical Learning, featuring Deep Learning, Survival Analysis and Multiple Testing. You can take Statistical Learning as an online course on edX, and you can choose a verified path and get a certificate for its completion: https://www.edx.org/course/statistical-learning

From playlist Statistical Learning

SimVLM explained | What the paper doesn’t tell you

📜SimVLM explained. What the authors tell us, what they don’t tell us and how this all works. Enjoy with coffee! 📺 Vision & Language Transformer explained (ViLBERT): https://youtu.be/dd7nE4nbxN0 📺 ViT explained: https://youtu.be/DVoHvmww2lQ Thanks to our Patrons who support us in Tier 2, 3

From playlist Ms. Coffee Bean's Multimodalities

Linear Transformers Are Secretly Fast Weight Memory Systems (Machine Learning Paper Explained)

#fastweights #deeplearning #transformers Transformers are dominating Deep Learning, but their quadratic memory and compute requirements make them expensive to train and hard to use. Many papers have attempted to linearize the core module: the attention mechanism, using kernels - for examp

From playlist Papers Explained

Time Series class: Part 1 - Dr Ioannis Papastathopoulos, University of Edinburgh

Part 2: https://youtu.be/7n0HTtThMe0 Introduction: Moving average, Autoregressive and ARMA models. Parameter estimation, likelihood based inference and forecasting with time series. Advanced: State-space models (hidden Markov models, Kalman filter) and applications. Recurrent neural netw

From playlist Data science classes

The vector cross-product is another form of vector multiplication and results in another vector. In this tutorial I show you a simple way of calculating the cross product of two vectors.

From playlist Introducing linear algebra
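
A minimal Python sketch of the cross product of two 3-component vectors follows. The function names `cross` and `dot` are illustrative choices, not taken from the tutorial, and the component formula used is the standard one rather than necessarily the method shown in the video.

```python
def cross(a, b):
    """Return the cross product a x b of two 3-component vectors."""
    ax, ay, az = a
    bx, by, bz = b
    return (ay * bz - az * by,
            az * bx - ax * bz,
            ax * by - ay * bx)


def dot(a, b):
    """Return the dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


u = (1, 2, 3)
v = (4, 5, 6)
w = cross(u, v)

# The result is itself a vector, perpendicular to both inputs.
print(w, dot(w, u), dot(w, v))
```

Checking that the dot product of the result with each input is zero is a quick way to catch sign or ordering mistakes.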

Linear Algebra for Computer Scientists. 1. Introducing Vectors

This computer science video is one of a series on linear algebra for computer scientists. This video introduces the concept of a vector. A vector is essentially a list of numbers that can be represented with an array or a function. Vectors are used for data analysis in a wide range of f

From playlist Linear Algebra for Computer Scientists

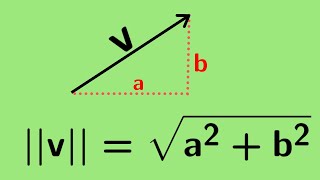

Multivariable Calculus | The notion of a vector and its length.

We define the notion of a vector as it relates to multivariable calculus and define its length. http://www.michael-penn.net http://www.randolphcollege.edu/mathematics/

From playlist Vectors for Multivariable Calculus

Graham Taylor: "Learning Representations of Sequences"

Graduate Summer School 2012: Deep Learning, Feature Learning "Learning Representations of Sequences" Graham Taylor, University of Guelph Institute for Pure and Applied Mathematics, UCLA July 13, 2012 For more information: https://www.ipam.ucla.edu/programs/summer-schools/graduate-summer

From playlist GSS2012: Deep Learning, Feature Learning

Autoregressive Diffusion Models (Machine Learning Research Paper Explained)

#machinelearning #ardm #generativemodels Diffusion models have made large advances in recent months as a new type of generative model. This paper introduces Autoregressive Diffusion Models (ARDMs), which are a mix between autoregressive generative models and diffusion models. ARDMs are t

From playlist Papers Explained

Time Series Forecasting 8 : Vector Autoregression

In this video I cover Vector Autoregressions. Vector autoregressive models are used when you want to predict multiple time series using one model. With them, we have to check for stationarity and convert the data to a stationary form. Then I'll show you how to invert stationarity back to the o

From playlist Time Series Analysis
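
One common way to make series stationary is first differencing, which can be undone with a cumulative sum. The sketch below assumes that approach, since the description is cut off before naming the method used in the video. The helper names `difference` and `invert_difference` and the sample data are hypothetical.

```python
from itertools import accumulate


def difference(series):
    """First difference: d[i] = series[i + 1] - series[i]."""
    return [b - a for a, b in zip(series, series[1:])]


def invert_difference(first_value, diffs):
    """Rebuild the levels from the first observed value and the differences."""
    return list(accumulate(diffs, initial=first_value))


# Two made-up series that would be modelled jointly.
levels = {"x": [10, 12, 15, 19], "y": [5, 4, 6, 9]}

# Difference every series before fitting a model.
diffs = {name: difference(values) for name, values in levels.items()}

# Undo the differencing to get back to the original scale.
restored = {name: invert_difference(levels[name][0], d)
            for name, d in diffs.items()}

print(diffs)
print(restored == levels)
```

The same inversion applies to forecasts: predicted differences are accumulated starting from the last observed level of each series.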

Understand Vector-Quantized Variational Autoencoder (VQ-VAE) for Image Generation #stablediffusion

Vector-Quantized Variational Autoencoder (VQ-VAE) for Image Generation explained in Detail. The main component of DALL-E of 2020. On the road to DIFFUSION for text-to-video. Generative AI. 3 videos before this video: https://youtu.be/onuIy6PC1ic https://youtu.be/BHzU-jq-AcM https://youtu.

From playlist Stable Diffusion / Latent Diffusion models for Text-to-Image AI