The lecture was held within the framework of the Hausdorff Trimester Program "Dynamics: Topology and Numbers": Conference on “Transfer operators in number theory and quantum chaos” Abstract: In many classical compact settings, entropy is upper semicontinuous, i.e., given a con

From playlist Conference: Transfer operators in number theory and quantum chaos

Entropy is often taught as a measure of how disordered or how mixed up a system is, but this definition never really sat right with me. How is "disorder" defined and why is one way of arranging things any more disordered than another? It wasn't until much later in my physics career that I

From playlist Thermal Physics/Statistical Physics

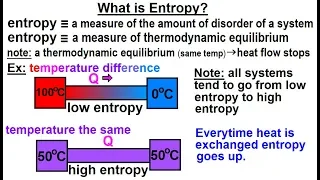

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (10 of 25) What is Entropy?

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain and give examples of what entropy is. 1) Entropy is a measure of the amount of disorder (randomness) of a system. 2) Entropy is a measure of thermodynamic equilibrium. Low entropy implies heat flow t

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (11 of 25) Change in Entropy: 1st Method

Visit http://ilectureonline.com for more math and science lectures! In this video I will calculate the change in entropy as a system goes from an initial low-entropy state to a high-entropy state. (Method 1) The next video in this series can be seen at: https://youtu.be/YSi67zdKJIw

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

Maxwell-Boltzmann distribution

Entropy and the Maxwell-Boltzmann velocity distribution. Also discusses why this is different than the Bose-Einstein and Fermi-Dirac energy distributions for quantum particles. My Patreon page is at https://www.patreon.com/EugeneK 00:00 Maxwell-Boltzmann distribution 02:45 Higher Temper

From playlist Physics

Entropy production during free expansion of an ideal gas by Subhadip Chakraborti

Abstract: According to the second law, the entropy of an isolated system increases during its evolution from one equilibrium state to another. The free expansion of a gas, on removal of a partition in a box, is an example where we expect to see such an increase of entropy. The constructi

From playlist Seminar Series

Physics - Thermodynamics: (1 of 5) Entropy - Basic Definition

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain and help you understand entropy.

From playlist PHYSICS - THERMODYNAMICS

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (12 of 25) Change in Entropy: 2nd Method***

Visit http://ilectureonline.com for more math and science lectures! In this video I will calculate the change in entropy as a system goes from an initial low-entropy state to a high-entropy state. (Method 2) The next video in this series can be seen at:

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

Erik Bollt - Identify Interactions in Complex Networked Dynamical Systems through Causation Entropy

Recorded 30 August 2022. Erik Bollt of Clarkson University Math/ECE presents "Identifying Interactions in Complex Networked Dynamical Systems through Causation Entropy" at IPAM's Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond. Abstract: Inferring the cou

From playlist 2022 Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond

David Sutter: "A chain rule for the quantum relative entropy"

Entropy Inequalities, Quantum Information and Quantum Physics 2021 "A chain rule for the quantum relative entropy" David Sutter - IBM Zürich Research Laboratory Abstract: The chain rule for the conditional entropy allows us to view the conditional entropy of a large composite system as a

From playlist Entropy Inequalities, Quantum Information and Quantum Physics 2021

Nexus Trimester - Benjamin Sach (University of Bristol)

Tight Cell-probe bounds for Online Hamming distance Benjamin Sach (University of Bristol) February 26, 2016 Abstract: We give a tight cell-probe bound for the time to compute Hamming distance in a stream. The cell probe model is a particularly strong computational model and subsumes, for

From playlist Nexus Trimester - 2016 - Fundamental Inequalities and Lower Bounds Theme

Second Law of Thermodynamics, Entropy & Gibbs Free Energy

Here is a lecture to understand the 2nd law of thermodynamics in a conceptual way. Along with the 2nd law, the concepts of entropy and Gibbs free energy are also explained. Check http://www.learnengineering.org/2012/12/understanding-second-law-of.html to get to know about industrial applications of se

From playlist Mechanical Engineering

Engineering MAE 91. Intro to Thermodynamics. Lecture 15.

UCI MAE 91: Introduction to Thermodynamics (Spring 2013). Lec 15. Intro to Thermodynamics -- Second Law for a Control Volume -- View the complete course: http://ocw.uci.edu/courses/mae_91_introduction_to_thermal_dynamics.html Instructor: Roger Rangel, Ph.D. License: Creative Commons CC-BY

From playlist Engineering MAE 91. Intro to Thermodynamics

Engineering MAE 91. Intro to Thermodynamics. Lecture 13.

UCI MAE 91: Introduction to Thermodynamics (Spring 2013). Lec 13. Intro to Thermodynamics -- Entropy -- View the complete course: http://ocw.uci.edu/courses/mae_91_introduction_to_thermal_dynamics.html Instructor: Roger Rangel, Ph.D. License: Creative Commons CC-BY-SA Terms of Use: http:/

From playlist Engineering MAE 91. Intro to Thermodynamics

Viviane Baladi: Transfer operators for Sinai billiards - lecture 3

We will discuss an approach to the statistical properties of two-dimensional dispersive billiards (mostly discrete-time) using transfer operators acting on anisotropic Banach spaces of distributions. The focus of this part will be our recent work with Mark Demers on the measure of maximal

From playlist Analysis and its Applications

Nexus Trimester - Raphael Clifford (University of Bristol) - 2

Lower bounds for streaming problems 3/3 Raphael Clifford (University of Bristol) February 24, 2016 Abstract: It has become possible in recent years to provide unconditional lower bounds on the time needed to perform a number of basic computational operations. I will discuss some of the m

From playlist Nexus Trimester - 2016 - Fundamental Inequalities and Lower Bounds Theme

Thermodynamics: Crash Course Physics #23

Have you ever heard of a Perpetual Motion Machine? More to the point, have you ever heard of why Perpetual Motion Machines are impossible? One of the reasons is the first law of thermodynamics! In this episode of Crash Course Physics, Shini talks to us about Thermodynamics and E

From playlist Back to School - Expanded

MIT RES.TLL-004 STEM Concept Videos View the complete course: http://ocw.mit.edu/RES-TLL-004F13 Instructor: John Lienhard This video begins with observations of spontaneous processes from daily life and then connects the idea of spontaneity to entropy. License: Creative Commons BY-NC-SA

From playlist MIT STEM Concept Videos

Turbulence: Arrow of time & equilibrium-nonequilibrium behaviour by Mahendra Verma

PROGRAM THERMALIZATION, MANY BODY LOCALIZATION AND HYDRODYNAMICS ORGANIZERS: Dmitry Abanin, Abhishek Dhar, François Huveneers, Takahiro Sagawa, Keiji Saito, Herbert Spohn and Hal Tasaki DATE : 11 November 2019 to 29 November 2019 VENUE: Ramanujan Lecture Hall, ICTS Bangalore How do is

From playlist Thermalization, Many Body Localization And Hydrodynamics 2019

Physics 32.5 Statistical Thermodynamics (15 of 39) Definition of Entropy of a Microstate

Visit http://ilectureonline.com for more math and science lectures! To donate: http://www.ilectureonline.com/donate https://www.patreon.com/user?u=3236071 In this video I will match entropy and thermodynamic probability in statistical thermodynamics. Next video in the polar coordinates