MATH2018 Lecture 2.4 Level Surfaces, Tangent Planes, and Normal Lines

We discuss how the gradient of a scalar field is related to the concept of a level surface, and show how we can use it to define the tangent plane and normal line at a point.

From playlist MATH2018 Engineering Mathematics 2D

Gradient (1 of 3: Developing the formula)

More resources available at www.misterwootube.com

From playlist Further Linear Relationships

Determining a Unit Normal Vector to a Surface

http://mathispower4u.wordpress.com/

From playlist Vectors

Show the Gradient to a Surface Using 3D Calc Plotter

New URL for the 3D plotter: https://c3d.libretexts.org/CalcPlot3D/index.html This video uses 3D Calc Plotter to illustrate the meaning of a gradient vector. http://mathispower4u.com

From playlist 3D Calc Plotter

11_7_1 Potential Function of a Vector Field Part 1

The gradient of a function is a vector. n-dimensional space can be filled with countless vectors as values are inserted into a gradient function. This is then referred to as a vector field. Some vector fields have potential functions. In this video we start to look at how to calculat

From playlist Advanced Calculus / Multivariable Calculus

What is Gradient, and Gradient Given Two Points

"Find the gradient of a line given two points."

From playlist Algebra: Straight Line Graphs

MATH2018 Lecture 2.3 Gradient and Directional Derivative

We introduce the concepts of the gradient and directional derivative, which tell us how a scalar field varies in space.

From playlist MATH2018 Engineering Mathematics 2D

This video explains what information the gradient provides about a given function. http://mathispower4u.wordpress.com/

From playlist Functions of Several Variables - Calculus

Lec 12: Gradient; directional derivative; tangent plane | MIT 18.02 Multivariable Calculus, Fall 07

Lecture 12: Gradient; directional derivative; tangent plane. View the complete course at: http://ocw.mit.edu/18-02SCF10 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 18.02 Multivariable Calculus, Fall 2007

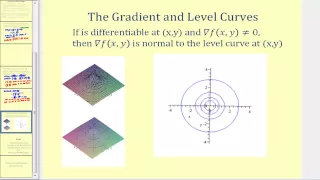

Worldwide Calculus: Level Sets & Gradient Values

Lecture on 'Level Sets & Gradient Values' from 'Worldwide Multivariable Calculus'. For more lecture videos and $10 digital textbooks, visit www.centerofmath.org.

From playlist Multivariable Derivatives

Lecture 17: Discrete Curvature II (Discrete Differential Geometry)

Full playlist: https://www.youtube.com/playlist?list=PL9_jI1bdZmz0hIrNCMQW1YmZysAiIYSSS For more information see http://geometry.cs.cmu.edu/ddg

From playlist Discrete Differential Geometry - CMU 15-458/858

Lecture 8A : A brief overview of "Hessian Free" optimization

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013] Lecture 8A : A brief overview of "Hessian Free" optimization

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

Lecture 8.1 — A brief overview of Hessian-free optimization [Neural Networks for Machine Learning]

Lecture from the course Neural Networks for Machine Learning, as taught by Geoffrey Hinton (University of Toronto) on Coursera in 2012. Link to the course (login required): https://class.coursera.org/neuralnets-2012-001

From playlist [Coursera] Neural Networks for Machine Learning — Geoffrey Hinton

Lecture 6/16 : Optimization: How to make the learning go faster

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013] 6A Overview of mini-batch gradient descent 6B A bag of tricks for mini-batch gradient descent 6C The momentum method 6D A separate, adaptive learning rate for each connection 6E rmsprop: Divide the gradient by a runni

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

In visual computing, point locations are often optimized using a "repulsive" energy, to obtain a nice uniform distribution for tasks ranging from image stippling to mesh generation to fluid simulation. But how do you perform this same kind of repulsive optimization on curves and surfaces?

From playlist Repulsive Videos