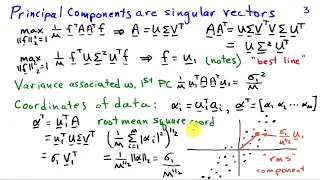

http://AllSignalProcessing.com for more great signal processing content, including concept/screenshot files, quizzes, MATLAB and data files. Representing multivariate random signals using principal components. Principal component analysis identifies the basis vectors that describe the la

From playlist Random Signal Characterization

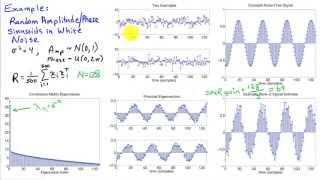

Robust Principal Component Analysis (RPCA)

Robust statistics is essential for handling data with corruption or missing entries. This robust variant of principal component analysis (PCA) is now a workhorse algorithm in several fields, including fluid mechanics, the Netflix prize, and image processing. Book Website: http://databoo

From playlist Data-Driven Science and Engineering

Robust Modal Decompositions for Fluid Flows

This research abstract by Isabel Scherl describes how to use robust principal component analysis (RPCA) for robust modal decompositions of fluid flows that have large outliers or corruption. https://doi.org/10.1103/PhysRevFluids.5.054401 https://arxiv.org/abs/1905.07062 Robust principa

From playlist Research Abstracts from Brunton Lab

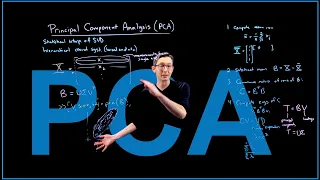

Principal Component Analysis (PCA)

Principal component analysis (PCA) is a workhorse algorithm in statistics, where dominant correlation patterns are extracted from high-dimensional data. Book PDF: http://databookuw.com/databook.pdf Book Website: http://databookuw.com These lectures follow Chapter 1 from: "Data-Driven S

From playlist Data-Driven Science and Engineering

19 Data Analytics: Principal Component Analysis

Lecture on unsupervised machine learning with principal component analysis for dimensional reduction, inference and prediction.

From playlist Data Analytics and Geostatistics

StatQuest: Principal Component Analysis (PCA), Step-by-Step

Principal Component Analysis is one of the most useful data analysis and machine learning methods out there. It can be used to identify patterns in highly complex datasets and it can tell you what variables in your data are the most important. Lastly, it can tell you how accurate your new

From playlist StatQuest

Davy Paindaveine - Testing for principal component directions under weak identifiability

Professor Davy Paindaveine (Université Libre de Bruxelles) presents "Testing for principal component directions under weak identifiability", 2 October 2020.

From playlist Statistics Across Campuses

Applied Machine Learning 2019 - Lecture 16 - NMF; Outlier detection

Non-negative matrix factorization for feature extraction; outlier detection with probabilistic models; isolation forests; one-class SVMs. Materials and slides on the class website: https://www.cs.columbia.edu/~amueller/comsw4995s19/schedule/

From playlist Applied Machine Learning - Spring 2019

Universal Biology in Adaptation and Evolution: Multilevel Consistency, by Kunihiko Kaneko

PROGRAM: STATISTICAL BIOLOGICAL PHYSICS: FROM SINGLE MOLECULE TO CELL (ONLINE). ORGANIZERS: Debashish Chowdhury (IIT Kanpur), Ambarish Kunwar (IIT Bombay) and Prabal K Maiti (IISc, Bengaluru). DATE: 07 December 2020 to 18 December 2020. VENUE: Online. 'Fluctuation-and-noise' are themes

From playlist Statistical Biological Physics: From Single Molecule to Cell (Online)

Matrix Completion and the Netflix Prize

This video describes how the singular value decomposition (SVD) can be used for matrix completion and recommender systems. These lectures follow Chapter 1 from: "Data-Driven Science and Engineering: Machine Learning, Dynamical Systems, and Control" by Brunton and Kutz Amazon: https://w

From playlist Data-Driven Science and Engineering

Machine Learning for Fluid Mechanics

@eigensteve on Twitter. This video gives an overview of how Machine Learning is being used in Fluid Mechanics. In fact, fluid mechanics is one of the original "big data" sciences, and many advances in ML came out of fluids. Read the paper: https://www.annualreviews.org/doi/abs/10.1146/an

From playlist Data-Driven Science and Engineering

Principal Component Analysis (The Math) : Data Science Concepts

Let's explore the math behind principal component analysis! --- Like, Subscribe, and Hit that Bell to get all the latest videos from ritvikmath ~ --- Check out my Medium: https://medium.com/@ritvikmathematics

From playlist Data Science Concepts

Mean field asymptotics in high-dimensional statistics – A. Montanari – ICM2018

Probability and Statistics Invited Lecture 12.16 Mean field asymptotics in high-dimensional statistics: From exact results to efficient algorithms Andrea Montanari Abstract: Modern data analysis challenges require building complex statistical models with massive numbers of parameters. It

From playlist Probability and Statistics

This video is brought to you by the Quantitative Analysis Institute at Wellesley College. The material is best viewed as part of the online resources that organize the content and include questions for checking understanding: https://www.wellesley.edu/qai/onlineresources

From playlist Applied Data Analysis and Statistical Inference

GED for spatial filtering and dimensionality reduction

Generalized eigendecomposition is a powerful method of spatial filtering for extracting components from the data. You'll learn the theory and motivations, and see a few examples. Also discussed are the dangers of overfitting noise and a few ways to avoid it. The video uses files you can do

From playlist OLD ANTS #9) Matrix analysis