(ML 19.2) Existence of Gaussian processes

Statement of the theorem on existence of Gaussian processes, and an explanation of what it is saying.

From playlist Machine Learning
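In its usual form, the existence theorem says that for any index set, any mean function m and any positive semidefinite covariance function k, there is a Gaussian process whose values at any finite set of points x_1, ..., x_n are jointly N(m, K), where K[i][j] = k(x_i, x_j). The sketch below shows only that finite-dimensional picture. It assumes a squared-exponential kernel (`rbf_kernel`, an illustrative choice, not one taken from the video), builds K, factors it with a hand-written Cholesky decomposition, and draws one sample. The small `jitter` term is a common numerical safeguard and is also an assumption.

```python
import math
import random

def rbf_kernel(x1, x2, length_scale=1.0, variance=1.0):
    """Squared-exponential covariance k(x1, x2); an illustrative choice of kernel."""
    return variance * math.exp(-0.5 * ((x1 - x2) / length_scale) ** 2)

def cholesky(matrix):
    """Return lower-triangular L with L L^T == matrix; raise if not positive definite."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                diag = matrix[i][i] - s
                if diag <= 0:
                    raise ValueError("matrix is not positive definite")
                lower[i][j] = math.sqrt(diag)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower

def sample_gp(xs, mean_fn, kernel, jitter=1e-8, rng=random):
    """Draw one sample of (f(x_1), ..., f(x_n)) ~ N(m, K) at the finite points xs."""
    n = len(xs)
    # Covariance matrix K[i][j] = k(x_i, x_j), with a tiny diagonal jitter for stability.
    cov = [[kernel(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
           for i, a in enumerate(xs)]
    lower = cholesky(cov)
    z = [rng.gauss(0.0, 1.0) for _ in range(n)]  # independent standard normals
    # m + L z has mean m and covariance L L^T = K.
    return [mean_fn(xs[i]) + sum(lower[i][k] * z[k] for k in range(i + 1))
            for i in range(n)]

if __name__ == "__main__":
    xs = [0.0, 0.5, 1.0, 1.5, 2.0]
    print(sample_gp(xs, lambda x: 0.0, rbf_kernel, rng=random.Random(0)))
```

Any other finite set of points gives another multivariate Gaussian with the same kernel. The consistency of all these finite-dimensional distributions is what the existence theorem turns into a single process.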

Powered by https://www.numerise.com/ Independent events

From playlist Multiple event probability
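Two events A and B are independent when P(A and B) = P(A) × P(B). The sketch below checks that definition by brute-force enumeration over two fair dice. The dice setting and the event names are illustrative assumptions, not taken from the video.

```python
from fractions import Fraction
from itertools import product

# Sample space: the 36 equally likely ordered outcomes of rolling two fair dice.
OUTCOMES = list(product(range(1, 7), repeat=2))

def prob(event):
    """P(event), where `event` is a predicate on an outcome (first, second)."""
    hits = sum(1 for outcome in OUTCOMES if event(outcome))
    return Fraction(hits, len(OUTCOMES))

def independent(a, b):
    """Events A and B are independent when P(A and B) == P(A) * P(B)."""
    return prob(lambda o: a(o) and b(o)) == prob(a) * prob(b)

def first_is_six(o):
    return o[0] == 6

def second_is_even(o):
    return o[1] % 2 == 0

def total_is_twelve(o):
    return o[0] + o[1] == 12

print(independent(first_is_six, second_is_even))   # True
print(independent(first_is_six, total_is_twelve))  # False
```

Exact `Fraction` arithmetic avoids floating-point ties when comparing the two sides of the definition.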

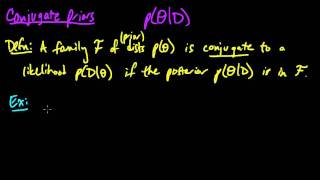

Definition of conjugate priors, and a couple of examples. For more detailed examples, see the videos on the Beta-Bernoulli model, the Dirichlet-Categorical model, and the posterior distribution of a univariate Gaussian.

From playlist Machine Learning
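A prior is conjugate to a likelihood when the posterior lands in the same family as the prior. For the Beta-Bernoulli model mentioned above, a Beta(α, β) prior updated with k successes in n trials gives Beta(α + k, β + n − k). A minimal sketch, assuming observations are coded as 0/1 (the `Beta` class and `beta_bernoulli_update` are hypothetical names used only for illustration):

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Beta:
    """Beta(alpha, beta) distribution over a success probability."""
    alpha: float
    beta: float

    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

def beta_bernoulli_update(prior: Beta, observations: list[int]) -> Beta:
    """Posterior after observing Bernoulli outcomes coded as 0/1.

    Because the Beta prior is conjugate to the Bernoulli likelihood, the
    posterior is again a Beta: add successes to alpha and failures to beta.
    """
    successes = sum(observations)
    failures = len(observations) - successes
    return Beta(prior.alpha + successes, prior.beta + failures)

posterior = beta_bernoulli_update(Beta(1, 1), [1, 0, 1, 1])
print(posterior)  # Beta(alpha=4, beta=2)
```

Because the update only adds counts, processing the data in batches or one observation at a time gives the same posterior.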

Probability of Successive Independent Events

"Successive independent events (including tree diagrams)."

From playlist Data Handling: Probability
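On a tree diagram for successive independent events, the probability of a path is the product of the branch probabilities along it, and the probability of a compound event is the sum over the paths that make it up. The sketch below enumerates every path for a given number of independent stages. The three-toss coin example and the function name `path_probabilities` are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product

def path_probabilities(stage_probs, stages):
    """Enumerate every path through a tree of independent, identical stages.

    stage_probs maps each outcome to its probability at a single stage.
    Because the stages are independent, a path's probability is the
    product of the branch probabilities along it.
    """
    paths = {}
    for path in product(stage_probs, repeat=stages):
        p = Fraction(1)
        for outcome in path:
            p *= stage_probs[outcome]
        paths[path] = p
    return paths

coin = {"H": Fraction(1, 2), "T": Fraction(1, 2)}
tree = path_probabilities(coin, 3)
# Add up the paths (leaves of the tree) that contain exactly two heads.
p_two_heads = sum(p for path, p in tree.items() if path.count("H") == 2)
print(p_two_heads)  # 3/8
```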

Determine the probability of two events that are mutually exclusive

👉 Learn how to find the probability of mutually exclusive events. Two events are said to be mutually exclusive when they cannot occur at the same time. For instance, when you toss a coin, the event that a head appears and the event that a tail appears are mutually exclusive because they cannot both occur on the same toss.

From playlist Probability of Mutually Exclusive Events
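For mutually exclusive events, P(A or B) = P(A) + P(B). That is the special case of the general addition rule P(A or B) = P(A) + P(B) − P(A and B) in which the overlap has probability zero. The sketch below uses a single fair die (an assumed example, not from the video) to contrast a mutually exclusive pair with an overlapping pair.

```python
from fractions import Fraction

DIE = frozenset(range(1, 7))  # one roll of a fair six-sided die

def prob(event):
    """P(event) for an event given as a set of equally likely die faces."""
    return Fraction(len(event & DIE), len(DIE))

def prob_either(a, b):
    """General addition rule: P(A or B) = P(A) + P(B) - P(A and B)."""
    return prob(a) + prob(b) - prob(a & b)

low, six = {1, 2}, {6}             # mutually exclusive: no shared face
even, high = {2, 4, 6}, {4, 5, 6}  # overlap on {4, 6}

print(prob_either(low, six))    # 1/2, the same as prob(low) + prob(six)
print(prob_either(even, high))  # 2/3, not prob(even) + prob(high) = 1
```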

Introduction to Linearly Independent and Linearly Dependent Sets of Vectors

This video introduces linearly independent and linearly dependent sets of vectors.

From playlist Linear Independence and Bases
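A finite set of vectors is linearly independent exactly when the matrix with those vectors as rows has rank equal to the number of vectors. Equivalently, no vector is a linear combination of the others. The sketch below computes the rank by exact Gaussian elimination with `Fraction`. It is a generic illustration, not the method used in the video.

```python
from fractions import Fraction

def rank(vectors):
    """Rank of the matrix whose rows are `vectors`, via exact Gaussian elimination."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    pivots = 0
    for col in range(n_cols):
        # Find a row at or below the current pivot position with a nonzero entry.
        pivot = next((r for r in range(pivots, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        # Clear this column in every other row.
        for r in range(len(rows)):
            if r != pivots and rows[r][col] != 0:
                factor = rows[r][col] / rows[pivots][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivots])]
        pivots += 1
    return pivots

def linearly_independent(vectors):
    """True when no vector in the list is a linear combination of the others."""
    return rank(vectors) == len(vectors)

print(linearly_independent([(1, 1, 0), (0, 1, 1), (1, 0, 1)]))  # True
print(linearly_independent([(1, 2, 3), (2, 4, 6)]))             # False
```

Any set of more than n vectors in R^n, such as three vectors in R², is automatically dependent, because the rank can be at most n.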

(PP 6.2) Multivariate Gaussian - examples and independence

Degenerate multivariate Gaussians. Some sketches of examples and non-examples of Gaussians. The components of a Gaussian are independent if and only if they are uncorrelated.

From playlist Probability Theory
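For a bivariate Gaussian with correlation ρ, setting ρ = 0 makes the cross term in the exponent vanish, so the joint density splits into the product of the two marginal normal densities. That is the "uncorrelated implies independent" half of the statement above, and it relies on the pair being jointly Gaussian. The sketch below checks the factorization numerically at a few points. It illustrates the claim but does not prove it, and the parameter values are arbitrary assumptions.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) at x."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def bivariate_normal_pdf(x, y, mu_x, mu_y, sigma_x, sigma_y, rho):
    """Joint density of a bivariate Gaussian with correlation rho (|rho| < 1)."""
    zx = (x - mu_x) / sigma_x
    zy = (y - mu_y) / sigma_y
    q = (zx ** 2 - 2 * rho * zx * zy + zy ** 2) / (1 - rho ** 2)
    return math.exp(-0.5 * q) / (2 * math.pi * sigma_x * sigma_y * math.sqrt(1 - rho ** 2))

def factorizes(mu_x, mu_y, sigma_x, sigma_y, rho, points, tol=1e-12):
    """Check f(x, y) == f_X(x) * f_Y(y) at each test point (marginals are N(mu, sigma^2))."""
    return all(
        math.isclose(bivariate_normal_pdf(x, y, mu_x, mu_y, sigma_x, sigma_y, rho),
                     normal_pdf(x, mu_x, sigma_x) * normal_pdf(y, mu_y, sigma_y),
                     rel_tol=tol)
        for x, y in points)

points = [(0.0, 0.0), (1.0, -0.5), (2.0, 1.5)]
print(factorizes(0.0, 1.0, 1.0, 2.0, 0.0, points))  # True: uncorrelated, so independent
print(factorizes(0.0, 1.0, 1.0, 2.0, 0.5, points))  # False: correlated components
```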

Using Variables in Science – The Foundations of Statistical Analysis and Scientific Testing (1-5)

Continuing our discussion about variables, you will learn how variables are used in science. Specifically, when we do statistics, we need independent and dependent variables. Independent variables are often categorical (groups), and dependent variables are typically measured on a scale. …

From playlist WK1 Numbers and Variables - Online Statistics for the Flipped Classroom

Nexus Trimester - Kamalika Chaudhuri (UC San Diego)

Privacy-preserving Analysis of Correlated data. Kamalika Chaudhuri (UC San Diego), March 30, 2016. Abstract: Many modern machine learning applications involve private and sensitive data that are highly correlated. Examples are mining of time series of physical activity measurements, or …

From playlist Nexus Trimester - 2016 - Secrecy and Privacy Theme

Jana de Wiljes: Sequential Bayesian Learning

Abstract: In various application areas, it is crucial to make predictions or decisions based on sequentially incoming observations and previously existing knowledge about the system of interest. The prior knowledge is often given in the form of evolution equations (e.g., ODEs derived via first …

From playlist SMRI Seminars
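The core idea of sequential Bayesian learning is that each posterior becomes the prior for the next incoming observation. The sketch below shows this in the simplest conjugate setting: an unknown constant θ observed with known Gaussian noise. This static model is an assumption chosen for illustration. It is not the speaker's method, and it leaves out the evolution equations mentioned in the abstract. The sketch also checks that updating one observation at a time matches a single batch update.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Gaussian:
    mean: float
    var: float

def update(prior, y, noise_var):
    """Posterior for theta after one observation y ~ N(theta, noise_var)."""
    post_var = 1.0 / (1.0 / prior.var + 1.0 / noise_var)        # precisions add
    post_mean = post_var * (prior.mean / prior.var + y / noise_var)
    return Gaussian(post_mean, post_var)

def sequential(prior, ys, noise_var):
    """Assimilate observations one by one; each posterior is the next prior."""
    belief = prior
    for y in ys:
        belief = update(belief, y, noise_var)
    return belief

def batch(prior, ys, noise_var):
    """Same posterior computed from all observations at once."""
    post_var = 1.0 / (1.0 / prior.var + len(ys) / noise_var)
    post_mean = post_var * (prior.mean / prior.var + sum(ys) / noise_var)
    return Gaussian(post_mean, post_var)

prior = Gaussian(0.0, 1.0)
ys = [1.0, 2.0, 0.5]
print(sequential(prior, ys, noise_var=0.5))
print(batch(prior, ys, noise_var=0.5))
```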

Yoshua Bengio | From System 1 Deep Learning to System 2 Deep Learning | NeurIPS 2019

Slides: http://www.iro.umontreal.ca/~bengioy/NeurIPS-11dec2019.pdf Summary: Past progress in deep learning has concentrated mostly on learning from a static dataset, mostly for perception tasks and other System 1 tasks which are done intuitively and unconsciously by humans. However, …

From playlist AI talks

Priors for Semantic Variables - Yoshua Bengio

Seminar on Theoretical Machine Learning. Topic: Priors for Semantic Variables. Speaker: Yoshua Bengio. Affiliation: Université de Montréal. Date: July 23, 2020. For more videos, please visit http://video.ias.edu

From playlist Mathematics

Yoshua Bengio: From System 1 Deep Learning to System 2 Deep Learning (NeurIPS 2019)

This is a combined slide/speaker video of Yoshua Bengio's talk at NeurIPS 2019. Slide-synced non-YouTube version is here: https://slideslive.com/neurips/neurips-2019-west-exhibition-hall-c-b3-live This is a clip on the Lex Clips channel that I mostly use to post video clips from the …

From playlist AI talks

Intractability in Algorithmic Game Theory - Tim Roughgarden

Tim Roughgarden, Stanford University, March 11, 2013. We discuss three areas of algorithmic game theory that have grappled with intractability. The first is the complexity of computing game-theoretic equilibria, like Nash equilibria. There is an urgent need for new ideas on this topic, to …

From playlist Mathematics
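A pure-strategy Nash equilibrium of a two-player game is a pair of strategies in which neither player can improve their payoff by deviating alone. The sketch below finds all such pairs in a small bimatrix game by exhaustive checking. It only illustrates the definition. It does not cover mixed equilibria, whose computation is the kind of intractability the talk discusses. The Prisoner's Dilemma payoffs are a standard textbook example chosen as an assumption.

```python
from itertools import product

def pure_nash_equilibria(payoff_row, payoff_col):
    """Return (i, j) strategy pairs where neither player gains by deviating alone.

    payoff_row[i][j] and payoff_col[i][j] are the row and column player's
    payoffs when the row player picks i and the column player picks j.
    """
    n_rows, n_cols = len(payoff_row), len(payoff_row[0])
    equilibria = []
    for i, j in product(range(n_rows), range(n_cols)):
        row_best = all(payoff_row[i][j] >= payoff_row[k][j] for k in range(n_rows))
        col_best = all(payoff_col[i][j] >= payoff_col[i][k] for k in range(n_cols))
        if row_best and col_best:
            equilibria.append((i, j))
    return equilibria

# Prisoner's Dilemma: strategy 0 = cooperate, 1 = defect.
pd_row = [[-1, -3], [0, -2]]
pd_col = [[-1, 0], [-3, -2]]
print(pure_nash_equilibria(pd_row, pd_col))  # [(1, 1)]
```

Matching pennies has no pure equilibrium at all, which is one reason mixed strategies are needed in general.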

Statistical Rethinking 2022 Lecture 03 - Geocentric Models

Linear regression from a Bayesian perspective. Slides and course materials: https://github.com/rmcelreath/stat_rethinking_2022

From playlist Statistical Rethinking 2022
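In Bayesian linear regression, the slope gets a prior distribution, and the data update it to a posterior. In the simplest case (no intercept, known noise standard deviation, Normal prior on the slope), the posterior is Normal in closed form: precision = 1/τ² + Σx²/σ² and mean = (μ₀/τ² + Σxy/σ²) / precision. The sketch below assumes that conjugate setting for illustration. It is not the lecture's own code, and the names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class NormalPosterior:
    mean: float
    sd: float

def slope_posterior(xs, ys, prior_mean, prior_sd, noise_sd):
    """Conjugate posterior for beta in y_i ~ Normal(beta * x_i, noise_sd).

    Prior: beta ~ Normal(prior_mean, prior_sd). Precisions (inverse variances) add.
    """
    precision = 1.0 / prior_sd ** 2 + sum(x * x for x in xs) / noise_sd ** 2
    weighted = prior_mean / prior_sd ** 2 + sum(x * y for x, y in zip(xs, ys)) / noise_sd ** 2
    return NormalPosterior(mean=weighted / precision, sd=precision ** -0.5)

print(slope_posterior([1.0, 2.0, 3.0], [2.1, 3.9, 6.2], prior_mean=0.0, prior_sd=10.0, noise_sd=1.0))
```

With a very wide prior, the posterior mean approaches the least-squares slope. With a very narrow prior, it stays at the prior mean.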

Statistical Rethinking Winter 2019 Lecture 02

Lecture 02 of the Dec 2018 through March 2019 edition of Statistical Rethinking: A Bayesian Course with R and Stan. This lecture covers the material in Chapters 2 and 3 of the book.

From playlist Statistical Rethinking Winter 2019

From Deep Learning of Disentangled Representations to Higher-level Cognition

One of the main challenges for AI remains unsupervised learning, at which humans are much better than machines, and which we link to another challenge: bringing deep learning to higher-level cognition. We review earlier work on the notion of learning disentangled representations and deep …

From playlist AI talks

Occam's Razor and Statistical Mechanics - Singular Learning Theory Seminar 38

Edmund Lau presents Balasubramanian's paper "Statistical Inference, Occam’s Razor, and Statistical Mechanics on the Space of Probability Distributions". This involves:
- The principle of Bayesian model selection
- Local behaviour of KL divergence / infinitesimal metric / Fisher information

From playlist Singular Learning Theory
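The "local behaviour of KL divergence" point can be made concrete. For nearby parameters, KL(p ‖ p + δ) ≈ ½ I(p) δ², where I is the Fisher information, so the Fisher information acts as an infinitesimal metric on the space of distributions. The sketch below checks this for a Bernoulli family, where I(p) = 1/(p(1 − p)). The choice of the Bernoulli family and of p = 0.3 is an assumption for illustration.

```python
import math

def kl_bernoulli(p, q):
    """KL(Bernoulli(p) || Bernoulli(q)) in nats, for 0 < p, q < 1."""
    return p * math.log(p / q) + (1 - p) * math.log((1 - p) / (1 - q))

def fisher_bernoulli(p):
    """Fisher information of a single Bernoulli(p) observation."""
    return 1.0 / (p * (1 - p))

p = 0.3
for delta in (1e-1, 1e-2, 1e-3):
    exact = kl_bernoulli(p, p + delta)
    quadratic = 0.5 * fisher_bernoulli(p) * delta ** 2
    # The ratio should approach 1 as delta shrinks.
    print(f"delta={delta:g}  KL={exact:.3e}  quadratic={quadratic:.3e}  ratio={exact / quadratic:.4f}")
```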

Statistical physics and statistical inference (Lecture 2) by Marc Mézard

Infosys-ICTS Turing Lectures. Artificial Intelligence: Success, Limits, Myths and Threats. Speaker: Marc Mézard (Director of Ecole normale supérieure - PSL University). Date: 06 January 2020, 16:00 to 17:30. Venue: Chandrasekhar Auditorium, ICTS-TIFR, Bengaluru. Lecture 1 (Public Lecture) …

From playlist Infosys-ICTS Turing Lectures

How to determine if two events are mutually exclusive or not

👉 Learn how to find the probability of mutually exclusive events. Two events are said to be mutually exclusive when they cannot occur at the same time. For instance, when you toss a coin, the event that a head appears and the event that a tail appears are mutually exclusive because they cannot both occur on the same toss.

From playlist Probability of Mutually Exclusive Events
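To decide whether two events are mutually exclusive, check whether they share any outcome. If the sets of outcomes are disjoint, the events cannot occur together. The sketch below uses the coin example from the description and one die example, which is an illustrative assumption.

```python
def mutually_exclusive(a, b):
    """Two events (sets of outcomes) are mutually exclusive iff they share no outcome."""
    return set(a).isdisjoint(b)

# One coin toss: heads and tails cannot both happen.
print(mutually_exclusive({"H"}, {"T"}))          # True
# One die roll: "even" and "greater than 3" can both happen (a 4 or a 6).
print(mutually_exclusive({2, 4, 6}, {4, 5, 6}))  # False
```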