We derive the formula for the gradient of mean squared error using an L2 penalty (regularized linear regression).

From playlist Regularization
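
As a rough illustration of the kind of result that derivation arrives at, the sketch below writes out the gradient of mean squared error with an L2 penalty and checks it against finite differences. The loss scaling (dividing by n, penalty `lam * ||w||^2`) and all names (`ridge_loss`, `ridge_gradient`, `lam`) are assumptions made for this example, not taken from the video.

```python
import numpy as np

def ridge_loss(w, X, y, lam):
    """Mean squared error plus an L2 penalty lam * ||w||^2 (assumed scaling)."""
    residual = X @ w - y
    return residual @ residual / len(y) + lam * (w @ w)

def ridge_gradient(w, X, y, lam):
    """Analytic gradient: (2/n) X^T (Xw - y) + 2 * lam * w."""
    residual = X @ w - y
    return 2.0 * X.T @ residual / len(y) + 2.0 * lam * w

def numerical_gradient(f, w, eps=1e-6):
    """Central finite differences, used only to sanity-check the formula."""
    grad = np.zeros_like(w)
    for i in range(len(w)):
        step = np.zeros_like(w)
        step[i] = eps
        grad[i] = (f(w + step) - f(w - step)) / (2 * eps)
    return grad

# Synthetic data, purely illustrative.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = rng.normal(size=50)
w = rng.normal(size=3)
lam = 0.1

analytic = ridge_gradient(w, X, y, lam)
numeric = numerical_gradient(lambda v: ridge_loss(v, X, y, lam), w)
print(np.allclose(analytic, numeric, atol=1e-5))
```

Other sources scale the loss by 1/(2n) or the penalty by lam/2, which changes the constants in the gradient but not its form.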

Regularization Part 3: Elastic Net Regression

Elastic-Net Regression combines Lasso Regression with Ridge Regression to give you the best of both worlds. It works well when there are lots of useless variables that need to be removed from the equation, and it works well when there are lots of useful variables that need to be retained.

From playlist StatQuest
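
One way to picture how Elastic-Net combines the two penalties is the minimal sketch below. It assumes a single strength `lam` and a mixing weight `alpha` between 0 and 1; this parameterization and the function name `elastic_net_penalty` are illustrative choices, and libraries differ in how they scale the two terms.

```python
import numpy as np

def elastic_net_penalty(w, lam, alpha):
    """Weighted mix of the lasso (L1) and ridge (squared L2) penalties.

    alpha = 1.0 gives a pure lasso penalty, alpha = 0.0 a pure ridge penalty.
    This scaling is an assumption for illustration only.
    """
    l1 = np.sum(np.abs(w))   # lasso term: sum of absolute coefficients
    l2 = np.sum(w ** 2)      # ridge term: sum of squared coefficients
    return lam * (alpha * l1 + (1.0 - alpha) * l2)

# Hypothetical coefficient vector.
w = np.array([0.5, -2.0, 0.0])

lasso_only = elastic_net_penalty(w, lam=1.0, alpha=1.0)
ridge_only = elastic_net_penalty(w, lam=1.0, alpha=0.0)
mixed = elastic_net_penalty(w, lam=1.0, alpha=0.5)
print(lasso_only, ridge_only, mixed)
```

With `alpha` strictly between 0 and 1, the L1 term can still push useless coefficients to exactly zero while the L2 term shrinks the remaining ones.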

Characterizations of Diagonalizability

In this video, I define the notion of diagonalizability and show what it has to do with eigenvectors. Check out my Diagonalization playlist: https://www.youtube.com/playlist?list=PLJb1qAQIrmmCSovHY6cXzPMNSuWOwd9wB Subscribe to my channel: https://

From playlist Diagonalization

Ridge, Lasso and Elastic-Net Regression in R

The code in this video can be found on the StatQuest GitHub: https://github.com/StatQuest/ridge_lasso_elastic_net_demo/blob/master/ridge_lass_elastic_net_demo.R This video assumes you already know about Ridge, Lasso and Elastic-Net Regression; if not, here are the links to the Quests..

From playlist Statistics and Machine Learning in R

Predicting and Understanding Human Choices using PCMC-Net with an application to Airline Itineraries

Speaker(s): Alix Lheritier Facilitator(s): Omar Nada Find the recording, slides, and more info at https://ai.science/e/predicting-and-understanding-human-choices-using-pcmc-net-with-an-application-to-airline-itineraries--T7VHeDI6OAv0cXM7HWYT Motivation / Abstract The work focuses on pred

From playlist Recommender Systems

Machine Learning Lecture 17 "Regularization / Review" -Cornell CS4780 SP17

Lecture Notes: http://www.cs.cornell.edu/courses/cs4780/2018fa/lectures/lecturenote10.html

From playlist CORNELL CS4780 "Machine Learning for Intelligent Systems"

Deep Learning Lecture 2.5 - Regularization

Deep Learning Lecture - Estimator Theory 4 - L2 / Ridge regularization - Sparsity-Inducing regularization - Practical workflow for ML training and validation

From playlist Deep Learning Lecture

Lecture 11: Demand for Health (Part 1)

MIT 14.771 Development Economics, Fall 2021 Instructor: Esther Duflo View the complete course: https://ocw.mit.edu/courses/14-771-development-economics-fall-2021 YouTube Playlist: https://www.youtube.com/playlist?list=PLUl4u3cNGP61kvh3caDts2R6LmkYbmzaG Discusses issues with the demand

From playlist MIT 14.771 Development Economics, Fall 2021

A lesson on regularization with some applications in Python

From playlist Regularization

Data Science - Part XII - Ridge Regression, LASSO, and Elastic Nets

For downloadable versions of these lectures, please go to the following link: http://www.slideshare.net/DerekKane/presentations https://github.com/DerekKane/YouTube-Tutorials This lecture provides an overview of some modern regression techniques including a discussion of the bias varianc

From playlist Data Science

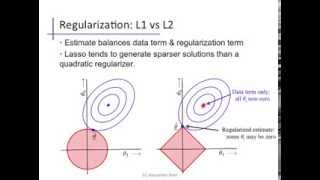

Linear regression (6): Regularization

Lp regularization penalties; comparing L2 vs L1

From playlist cs273a

In this video, we learn about regularization: an automatic way to adjust the size of the hypothesis set. Link to my notes on Introduction to Data Science: https://github.com/knathanieltucker/data-science-foundations Try answering these comprehension questions to further grill in the conc

From playlist Introduction to Data Science - Foundations

Supervised Learning Estimators in Scikit Learn

We really dive in today. We explore the scikit learn base estimator for supervised learning and play around with construction using hyperparameters, fitting the model (with and without cross validation), predicting (with regressors and classifiers) and scoring the model. Associated Github

From playlist A Bit of Data Science and Scikit Learn

Playing with Platonic and Archimedean Solids by Swati Sircar and Susy Varughese

SUMMER SCHOOL FOR WOMEN IN MATHEMATICS AND STATISTICS POPULAR TALKS (TITLE AND ABSTRACT) June 17, Friday, 15:45 - 16:45 hrs Swati Sircar (Azim Premji University, Bengaluru, India) Title: Playing with Platonic and Archimedean Solids Abstract: While the 5 Platonic solids are quite popular

From playlist Summer School for Women in Mathematics and Statistics - 2022

Using structure to select features in high dimension. - Azencott - Workshop 3 - CEB T1 2019

Chloé-Agathe Azencott (Mines-Paristech) / 02.04.2019 Using structure to select features in high dimension. Many problems in genomics require the ability to identify relevant features in data sets containing orders of magnitude more features than samples. This setup poses different statistic

From playlist 2019 - T1 - The Mathematics of Imaging

Lecture 0605 Regularization and bias/variance

Machine Learning by Andrew Ng [Coursera] 06-01 Advice for applying machine learning

From playlist Machine Learning by Professor Andrew Ng