Proof that the Kernel of a Linear Transformation is a Subspace

Proof that the Kernel of a Linear Transformation is a Subspace

From playlist Proofs
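
A short sketch of the standard argument behind this title, written in LaTeX. The symbols $T$, $V$, $W$, $u$, $v$, and $c$ are notation assumed here for illustration; the video may organise the proof differently.

```latex
\begin{align*}
&\text{Let } T : V \to W \text{ be linear and } \ker T = \{\, v \in V : T(v) = 0_W \,\}. \\
&\text{Zero vector: } T(0_V) = T(0 \cdot 0_V) = 0 \cdot T(0_V) = 0_W, \text{ so } 0_V \in \ker T. \\
&\text{Addition: if } u, v \in \ker T, \text{ then } T(u + v) = T(u) + T(v) = 0_W + 0_W = 0_W. \\
&\text{Scaling: if } v \in \ker T \text{ and } c \text{ is a scalar, then } T(cv) = c\,T(v) = c \cdot 0_W = 0_W.
\end{align*}
```

Since $\ker T$ contains the zero vector and is closed under addition and scalar multiplication, it is a subspace of $V$.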

Determine the Kernel of a Linear Transformation Given a Matrix (R3, x to 0)

This video explains how to determine the kernel of a linear transformation.

From playlist Kernel and Image of Linear Transformation

Introduction to the Kernel and Image of a Linear Transformation

This video introduces the kernel and image of a linear transformation.

From playlist Kernel and Image of Linear Transformation

Concept Check: Describe the Kernel of a Linear Transformation (Projection onto y=x)

This video explains how to describe the kernel of a linear transformation that is a projection onto the line y = x.

From playlist Kernel and Image of Linear Transformation
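
A worked version of this concept check, assuming the projection is the orthogonal projection of $\mathbb{R}^2$ onto the line $y = x$ (the description does not say which projection is meant). The matrix $P$ below is derived under that assumption.

```latex
\begin{align*}
P &= \frac{1}{2}\begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix},
\qquad
P\begin{pmatrix} x \\ y \end{pmatrix}
  = \frac{x + y}{2}\begin{pmatrix} 1 \\ 1 \end{pmatrix}, \\
P\begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}
  &\iff x + y = 0, \\
\ker P &= \operatorname{span}\left\{ \begin{pmatrix} 1 \\ -1 \end{pmatrix} \right\}.
\end{align*}
```

Under this assumption the kernel is the line $y = -x$, which is perpendicular to the line being projected onto.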

Determine a Basis for the Kernel of a Matrix Transformation (3 by 4)

This video explains how to determine a basis for the kernel of a matrix transformation.

From playlist Kernel and Image of Linear Transformation
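
One way to compute such a basis is exact row reduction followed by reading off one vector per free column. The sketch below is a minimal illustration; the function name `kernel_basis` and the 3 by 4 matrix `A` are hypothetical and not taken from the video.

```python
from fractions import Fraction


def kernel_basis(matrix):
    """Return a basis for {x : A x = 0} using exact row reduction."""
    rows = [[Fraction(entry) for entry in row] for row in matrix]
    n_rows, n_cols = len(rows), len(rows[0])
    pivot_cols = []
    r = 0  # index of the next pivot row
    for c in range(n_cols):
        # Look for a nonzero entry in column c at or below row r.
        pivot = next((i for i in range(r, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot here, so column c is a free column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        lead = rows[r][c]
        rows[r] = [x / lead for x in rows[r]]
        # Clear column c in every other row (reduced row echelon form).
        for i in range(n_rows):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivot_cols.append(c)
        r += 1
        if r == n_rows:
            break

    free_cols = [c for c in range(n_cols) if c not in pivot_cols]
    basis = []
    for f in free_cols:
        # Set one free variable to 1, the others to 0, and solve for pivots.
        vec = [Fraction(0)] * n_cols
        vec[f] = Fraction(1)
        for row_index, p in enumerate(pivot_cols):
            vec[p] = -rows[row_index][f]
        basis.append(vec)
    return basis


# Hypothetical 3 by 4 matrix; its third row is the sum of the first two.
A = [
    [1, 2, 0, 1],
    [0, 0, 1, 3],
    [1, 2, 1, 4],
]
basis = kernel_basis(A)
```

For this assumed matrix the rank is 2, so the kernel has dimension 4 - 2 = 2 and `basis` holds two vectors. `Fraction` is used so that the arithmetic stays exact rather than relying on floating-point tolerances.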

Kernel of a group homomorphism

In this video I introduce the definition of the kernel of a group homomorphism. It is simply the set of all elements in a group that map to the identity element of a second group under the homomorphism. The video also contains the proof that the kernel is a normal subgroup.

From playlist Abstract algebra
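
The definition above, together with the conjugation step of the normality argument, can be written as follows. The symbols $\varphi$, $G$, $H$, and $e_H$ are notation assumed here; the video may use different names.

```latex
\begin{align*}
&\varphi : G \to H \text{ a group homomorphism}, \qquad
\ker\varphi = \{\, g \in G : \varphi(g) = e_H \,\}. \\
&\text{For } k \in \ker\varphi \text{ and any } g \in G: \\
&\varphi(g k g^{-1}) = \varphi(g)\,\varphi(k)\,\varphi(g)^{-1}
  = \varphi(g)\, e_H\, \varphi(g)^{-1} = e_H,
\quad \text{so } g k g^{-1} \in \ker\varphi.
\end{align*}
```

A full proof also checks that $\ker\varphi$ is a subgroup (it contains $e_G$ and is closed under products and inverses); the line above is the part that makes it normal.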

Generalized Conway Game of Life - SmoothLife3

The transfer function that is used to calculate every new timestep can be arbitrarily complex. The kernel does not change, just as in different variations of the Game of Life, where birth/death values change, but not the kernel.

From playlist SmoothLife
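
The distinction between a fixed kernel and a changeable transfer function can be sketched with the ordinary discrete Game of Life rather than SmoothLife itself. In this minimal illustration the names `KERNEL`, `step`, `birth`, and `survive` are hypothetical, and the grid wraps around at its edges as a simplifying assumption.

```python
# Fixed kernel: the eight neighbours of a cell (Moore neighbourhood).
KERNEL = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]


def step(grid, birth, survive):
    """Advance one timestep on a wrap-around grid.

    The kernel stays the same; only the transfer function, given here
    as the birth and survive neighbour counts, changes between variants.
    """
    n_rows, n_cols = len(grid), len(grid[0])
    new_grid = []
    for r in range(n_rows):
        new_row = []
        for c in range(n_cols):
            # Apply the kernel: count live cells in the neighbourhood.
            neighbours = sum(
                grid[(r + dr) % n_rows][(c + dc) % n_cols] for dr, dc in KERNEL
            )
            # Apply the transfer function to that count.
            rule = survive if grid[r][c] == 1 else birth
            new_row.append(1 if neighbours in rule else 0)
        new_grid.append(new_row)
    return new_grid


blinker = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]

# Same kernel, two different transfer functions.
conway = step(blinker, birth={3}, survive={2, 3})   # Conway's rule, B3/S23
seeds = step(blinker, birth={2}, survive=set())     # "Seeds" variant, B2/S
```

Both calls use the same neighbourhood count; only the sets passed as `birth` and `survive` differ, which is the sense in which the variants share a kernel.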

Some Theoretical Results on Model-Based Reinforcement Learning by Mengdi Wang

Program Advances in Applied Probability II (ONLINE) ORGANIZERS: Vivek S Borkar (IIT Bombay, India), Sandeep Juneja (TIFR Mumbai, India), Kavita Ramanan (Brown University, Rhode Island), Devavrat Shah (MIT, US) and Piyush Srivastava (TIFR Mumbai, India) DATE & TIME 04 January 2021 to

From playlist Advances in Applied Probability II (Online)

Find the Kernel of a Matrix Transformation (Give Direction Vector)

This video explains how to determine the direction vector of a line that represents the kernel of a matrix transformation.

From playlist Kernel and Image of Linear Transformation

From playlist Contributed talks One World Symposium 2020

Select Which Vectors are in the Kernel of a Matrix (2 by 3)

This video explains how to determine which vectors from a list are in the kernel of a matrix.

From playlist Kernel and Image of Linear Transformation
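
Checking membership amounts to multiplying the matrix by each candidate and testing whether the result is the zero vector. A minimal sketch follows; the function `in_kernel`, the 2 by 3 matrix `A`, and the candidate list are all hypothetical examples, not the ones used in the video.

```python
def in_kernel(matrix, vector):
    """Return True when matrix * vector is the zero vector."""
    return all(
        sum(a * x for a, x in zip(row, vector)) == 0
        for row in matrix
    )


# Hypothetical 2 by 3 matrix and candidate vectors in R^3.
A = [
    [1, 0, -1],
    [0, 1, 2],
]
candidates = [
    [1, -2, 1],
    [0, 0, 0],
    [1, 1, 1],
]

members = [v for v in candidates if in_kernel(A, v)]
```

With integer entries the comparison to zero is exact; with floating-point entries a tolerance check would be the safer choice.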

Unsupervised state embedding and aggregation towards scalable reinforcement learning - Mengdi Wang

Workshop on New Directions in Reinforcement Learning and Control Topic: Unsupervised state embedding and aggregation towards scalable reinforcement learning Speaker: Mengdi Wang Affiliation: Princeton University Date: November 7, 2019 For more videos, please visit http://video.ias.edu

From playlist Mathematics

Kernel and Rich Regimes in Deep Learning - Nati Srebro

Workshop on Theory of Deep Learning: Where next? Topic: Kernel and Rich Regimes in Deep Learning Speaker: Nati Srebro Affiliation: TTIC Date: October 17, 2019 For more videos, please visit http://video.ias.edu

From playlist Mathematics

Band-pass filtering and the filter-Hilbert method

The Hilbert transform produces uninterpretable results on broadband data. You will need to narrow-band filter the signal first. This video shows one method of computing an FIR filter and applying it to EEG data. Together with the Hilbert transform, this gives us the filter-Hilbert method.

From playlist OLD ANTS #4) Time-frequency analysis via other methods

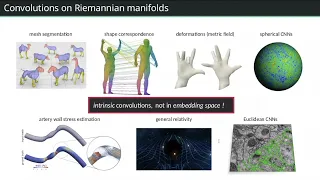

Lecture 5: Equivariant CNNs II (Riemannian manifolds) - Maurice Weiler

Video recording of the First Italian School on Geometric Deep Learning held in Pescara in July 2022. Slides: https://www.sci.unich.it/geodeep2022/slides/CoordinateIndependentCNNs.pdf

From playlist First Italian School on Geometric Deep Learning - Pescara 2022

Alexander Bufetov: Determinantal point processes - Lecture 3

Abstract: Determinantal point processes arise in a wide range of problems in asymptotic combinatorics, representation theory and mathematical physics, especially the theory of random matrices. While our understanding of determinantal point processes has greatly advanced in the last 20 year

From playlist Probability and Statistics

Stanford Seminar - KUtrace 2020

Dick Sites February 5, 2020 Observation tools for understanding occasionally-slow performance in large-scale distributed transaction systems are not keeping up with the complexity of the environment. The same applies to large database systems, to real-time control systems in cars and airp

From playlist Stanford EE380-Colloquium on Computer Systems - Seminar Series

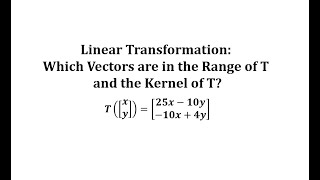

Linear Transformation: Which Vectors are in the Range of T and the Kernel of T?

This video explains how to determine whether a given vector is in the range (image) or the kernel of a linear transformation.

From playlist Kernel and Image of Linear Transformation