In this very easy and short tutorial I explain the concept of the transpose of a matrix, where we exchange rows for columns. Transposed matrices have some properties that you should be aware of. These include how to take the transpose of a product of matrices and the transpose of an inverse.

From playlist Introducing linear algebra
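A minimal sketch in plain Python (no library assumed; `transpose`, `matmul` and `inverse_2x2` are hypothetical helpers written for illustration) that transposes by exchanging rows for columns and checks two of the properties mentioned. The first is that the transpose of a product reverses the order, (AB)^T = B^T A^T. The second is that the transpose of an inverse is the inverse of the transpose, (A^-1)^T = (A^T)^-1.

```python
from fractions import Fraction


def transpose(a):
    """Exchange rows for columns: entry (i, j) of the result is a[j][i]."""
    return [list(row) for row in zip(*a)]


def matmul(a, b):
    """Ordinary matrix product; the column count of a must equal the row count of b."""
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must agree")
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def inverse_2x2(a):
    """Inverse of a 2x2 matrix, using exact Fraction arithmetic."""
    (p, q), (r, s) = a
    det = Fraction(p * s - q * r)
    if det == 0:
        raise ValueError("matrix is singular")
    return [[s / det, -q / det], [-r / det, p / det]]


A = [[1, 2, 3], [4, 5, 6]]    # 2x3
B = [[1, 0], [2, 1], [0, 3]]  # 3x2

# The transpose of a product is the product of the transposes in reverse order.
print(transpose(matmul(A, B)))             # [[5, 14], [11, 23]]
print(matmul(transpose(B), transpose(A)))  # [[5, 14], [11, 23]]

# The transpose of the inverse equals the inverse of the transpose.
C = [[2, 1], [5, 3]]
print(transpose(inverse_2x2(C)) == inverse_2x2(transpose(C)))  # True
```

The reversal of order matters. Here A^T B^T would be a 3x3 matrix, so it cannot equal the 2x2 matrix (AB)^T.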

Transcendental Functions 3 Examples using Properties of Logarithms

Examples using the properties of logarithms.

From playlist Transcendental Functions
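As an illustration of the kind of manipulation these examples practise (the expression is our own choice, not one taken from the video), assuming x, y, z > 0 so that every logarithm is defined:

```latex
\begin{aligned}
\ln\!\left(\frac{x^{2}\sqrt{y}}{z}\right)
  &= \ln\!\left(x^{2}\right) + \ln\!\left(y^{1/2}\right) - \ln z && \text{product and quotient rules} \\
  &= 2\ln x + \tfrac{1}{2}\ln y - \ln z && \text{power rule}
\end{aligned}
```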

Transcendental Functions 18 More Examples 1

More example problems.

From playlist Transcendental Functions

Transcendental Functions 18 More Examples 2

More example problems.

From playlist Transcendental Functions

Transcendental Functions 16 Proof of the Properties of Logarithms Part 1

Proof of some of the properties of logarithms.

From playlist Transcendental Functions
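One standard calculus proof of the product rule, sketched under the assumption that the natural logarithm is defined by ln x = ∫ from 1 to x of dt/t for x > 0 (the video's own proof may proceed differently):

```latex
\begin{aligned}
&\text{Fix } a > 0 \text{ and let } f(x) = \ln(ax) \text{ for } x > 0. \\
&f'(x) = \frac{1}{ax}\cdot a = \frac{1}{x} = \frac{d}{dx}\ln x
  \;\Longrightarrow\; \ln(ax) = \ln x + C \text{ for some constant } C \text{ on } (0,\infty). \\
&\text{At } x = 1:\ \ln a = \ln 1 + C = C \quad (\text{since } \ln 1 = 0). \\
&\text{Hence } \ln(ax) = \ln a + \ln x \text{ for all } a, x > 0.
\end{aligned}
```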

What is the transpose of a matrix?

What is the transpose of a matrix? Here it is defined and some simple examples are discussed. Free ebook http://tinyurl.com/EngMathYT

From playlist Intro to Matrices
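For reference, here is the definition in symbols together with a small example of our own choosing. Row i of A becomes column i of the transpose, so an m-by-n matrix becomes an n-by-m matrix:

```latex
(A^{\mathsf T})_{ij} = A_{ji},
\qquad
A = \begin{pmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \end{pmatrix}
\;\Longrightarrow\;
A^{\mathsf T} = \begin{pmatrix} 1 & 4 \\ 2 & 5 \\ 3 & 6 \end{pmatrix}
```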

Transcendental Functions 14 Derivative of Natural Log of x Example 3

More examples to work through.

From playlist Transcendental Functions
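A worked example in the same spirit (our own choice of function, not necessarily one from the video), using d/dx ln x = 1/x for x > 0 together with the chain rule. Since x^2 + 1 > 0 for every real x, the function is defined everywhere:

```latex
\frac{d}{dx}\ln\!\left(x^{2}+1\right)
  = \frac{1}{x^{2}+1}\cdot\frac{d}{dx}\left(x^{2}+1\right)
  = \frac{2x}{x^{2}+1}
```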

Transcendental Functions 2 Properties of Logarithms

Properties of Logarithms.

From playlist Transcendental Functions
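The standard properties are usually stated as follows, for a base b > 0 with b ≠ 1, positive x and y, and any real r (the video's exact list and notation may differ):

```latex
\begin{aligned}
\log_b(xy) &= \log_b x + \log_b y \\
\log_b\!\left(\frac{x}{y}\right) &= \log_b x - \log_b y \\
\log_b\!\left(x^{r}\right) &= r\log_b x \\
\log_b x &= \frac{\ln x}{\ln b}
\end{aligned}
```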

Transcendental Functions 1 Introduction

Transcendental Functions in Calculus.

From playlist Transcendental Functions

Nikolaus Kriegeskorte - Controversial stimuli: experiments to adjudicate computational hypotheses

Recorded 13 January 2023. Nikolaus Kriegeskorte of Columbia University presents "Controversial stimuli: Optimizing experiments to adjudicate among computational hypotheses" at IPAM's Explainable AI for the Sciences: Towards Novel Insights Workshop. Learn more online at: http://www.ipam.ucl

From playlist 2023 Explainable AI for the Sciences: Towards Novel Insights

Using & Expanding the NLP Models Hub 1 | Webinar

Spark NLP and Spark OCR Free Trials are available here: https://www.johnsnowlabs.com/spark-nlp-try-free/ The NLP Models Hub which powers the Spark NLP and NLU libraries takes a different approach than the hubs of other libraries like TensorFlow, PyTorch, and Hugging Face. While it also pr

From playlist AI & NLP Webinars

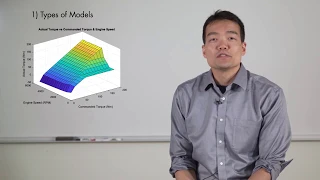

Learn about HEV modeling and simulation. In this video, you will:
- Learn about different methods for creating HEV component models.
- See how Powertrain Blockset™ and Simscape™ tools can be used for HEV modeling.
- Learn best practices for getting started and creating new plant models.

From playlist Hybrid Electric Vehicles

DDPS | Learning hierarchies of reduced-dimension and context-aware models for Monte Carlo sampling

In this DDPS Seminar Series talk from Sept. 2, 2021, University of Texas at Austin postdoctoral fellow Ionut-Gabriel Farcas discusses hierarchies of reduced-dimension and context-aware low-fidelity models for multi-fidelity Monte Carlo sampling. Description: In traditional model reduction

From playlist Data-driven Physical Simulations (DDPS) Seminar Series

This is Lecture 14 of the CSE519 (Data Science) course taught by Professor Steven Skiena [http://www.cs.stonybrook.edu/~skiena/] at Stony Brook University in 2016. The lecture slides are available at: http://www.cs.stonybrook.edu/~skiena/519 More information may be found here: http://www

From playlist CSE519 - Data Science Fall 2016

Stanford CS330: Deep Multi-task & Meta Learning I 2021 I Lecture 15

For more information about Stanford's Artificial Intelligence professional and graduate programs visit: https://stanford.io/ai To follow along with the course, visit: http://cs330.stanford.edu/fall2021/index.html To view all online courses and programs offered by Stanford, visit: http:/

From playlist Stanford CS330: Deep Multi-Task & Meta Learning I Autumn 2021 I Professor Chelsea Finn

DeepMind x UCL | Deep Learning Lectures | 11/12 | Modern Latent Variable Models

This lecture, by DeepMind Research Scientist Andriy Mnih, explores latent variable models, a powerful and flexible framework for generative modelling. After introducing this framework along with the concept of inference, which is central to it, Andriy focuses on two types of modern latent

From playlist Learning resources

Introduction to System Modeling

This video gives an overview of system modeling and how to make use of the simulation engine and built-in models that are integrated in Wolfram Language 11.3. The Wolfram SystemModeler Model Center is shown along with how to seamlessly move back and forth between it and a Wolfram Language

From playlist Introduction to Model Analytics with SystemModeler and the Wolfram Language

Stanford Seminar - Persistent and Unforgeable Watermarks for Deep Neural Networks

Huiying Li, University of Chicago; Emily Wenger, University of Chicago. October 30, 2019. As deep learning classifiers continue to mature, model providers with sufficient data and computation resources are exploring approaches to monetize the development of increasingly powerful models. Licen

From playlist Stanford EE380-Colloquium on Computer Systems - Seminar Series

This video defines the transpose of a matrix and explains how to transpose a matrix. The properties of transposed matrices are also discussed. Site: mathispower4u.com Blog: mathispower4u.wordpress.com

From playlist Introduction to Matrices and Matrix Operations

Chapter 4 live sessions with Omar

This is a recording of the Twitch session on July 7th 2021. Chapter 4 of the course: https://huggingface.co/course/chapter4 Have a question? Check out the forums: https://discuss.huggingface.co/c/course/20 Subscribe to our newsletter: https://huggingface.curated.co/

From playlist Hugging Face Course: Live Sessions Recordings