Running Time of Connected Component - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

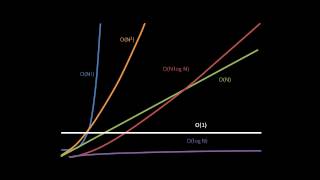

Searching and Sorting Algorithms (part 4 of 4)

Introductory coverage of basic searching and sorting algorithms, as well as a rudimentary overview of Big-O algorithm analysis. Part of a larger series teaching programming at http://codeschool.org

From playlist Searching and Sorting Algorithms

Exponential Running Time - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

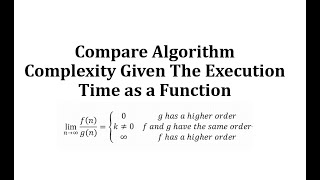

Compare Algorithm Complexity Given The Execution Time as a Function

This video explains how to use a limit at infinity to compare the complexity (growth rate) of two functions. http://mathispower4u.com

From playlist Additional Topics: Generating Functions and Intro to Number Theory (Discrete Math)

Function Comparision - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

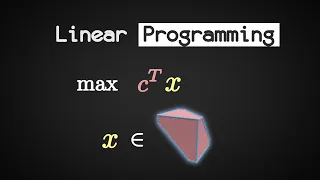

What is Linear Programming (LP)? (in 2 minutes)

Overview of Linear Programming in 2 minutes. Additional information on the distinction between "Polynomial" vs "Strongly Polynomial" algorithms: An algorithm for solving LPs of the form max c^t x s.t. Ax \le b runs in polynomial time if its running time can be boun

From playlist Under X-Minutes Visual Explanations

Divisible By Five - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

Heap Sort Performance - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

Genomic Analysis at Scale: Mapping Irregular Computations to Advanced Architectures

Abstract: Genomic data sets are growing dramatically as the cost of sequencing continues to decline and community databases are built to store and share this data with the research community. Some of the data analysis problems require large-scale parallel platforms to meet both the memory and

From playlist SIAG-ACDA Online Seminar Series

D2I - Matt Whithead discusses machine learning models in his Student Seminar

Ensemble machine learning models are often highly accurate on the supervised learning problem of classification. Combining groups of independent models allows for individual specialization and diversification with limited overfitting. The main drawback of using ensembles is the greatly in

From playlist Data to Insight Center (D2I)

From playlist Machine Learning Streams

M. Zadimoghaddam: Randomized Composable Core-sets for Submodular Maximization

Morteza Zadimoghaddam: Randomized Composable Core-sets for Distributed Submodular and Diversity Maximization. An effective technique for solving optimization problems over massive data sets is to partition the data into smaller pieces, solve the problem on each piece and compute a represen

From playlist HIM Lectures 2015

New Architectures for a New Biology

October 11, 2006 lecture by David E. Shaw for the Stanford University Computer Systems Colloquium (EE 380). This talk describes the current state of the art in biomolecular simulation and explores the potential role of high-performance computing technologies in extending current capabili

From playlist Course | Computer Systems Laboratory Colloquium (2006-2007)

Zero Knowledge Proofs - Seminar 4 - From interactive to non-interactive

This seminar series is about the mathematical foundations of cryptography. In this series Eleanor McMurtry is explaining Zero Knowledge Proofs (ZKPs). This seminar explains how to construct *non-interactive* ZKPs which are much more practical than the schemes discussed so far in the semina

From playlist Metauni

Michal Pilipczuk: Introduction to parameterized algorithms, lecture I

The mini-course will provide a gentle introduction to the area of parameterized complexity, with a particular focus on methods connected to (integer) linear programming. We will start with basic techniques for the design of parameterized algorithms, such as branching, color coding, kerneli

From playlist Summer School on modern directions in discrete optimization

Stanford Seminar - How to Compute with Schrödinger's Cat: An Introduction to Quantum Computing

"How to Compute with Schrödinger's Cat: An Introduction to Quantum Computing" - Eleanor Rieffel of NASA Ames Research & Wolfgang Polak, Independent Consultant. About the talk: The success of the abstract model of classical computation in terms of bits, logical operations, algorithms, and

From playlist Engineering

Michael Elad: "Sparse Modeling in Image Processing and Deep Learning"

New Deep Learning Techniques 2018. "Sparse Modeling in Image Processing and Deep Learning". Michael Elad, Technion - Israel Institute of Technology, Computer Science. Abstract: Sparse approximation is a well-established theory, with a profound impact on the fields of signal and image proces

From playlist New Deep Learning Techniques 2018

Chandra Chekuri: On element connectivity preserving graph simplification

Chandra Chekuri: On element-connectivity preserving graph simplification. The notion of element-connectivity has found several important applications in network design and routing problems. We focus on a reduction step that preserves the element-connectivity due to Hind and Oellerman which

From playlist HIM Lectures 2015