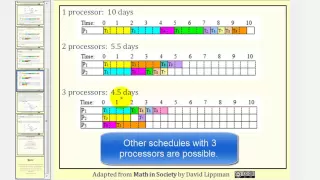

Scheduling: The List Processing Algorithm Part 1

This lesson explains and provides an example of the list processing algorithm to make a schedule given a priority list. Site: http://mathispower4u.com

From playlist Scheduling

From playlist Parallel Programming Concepts by Dr. Peter Troger

Jannik Matuschke: Generalized Malleable Scheduling via Discrete Convexity

In malleable scheduling, jobs can be executed simultaneously on multiple machines, with the processing time depending on the number of allocated machines. Each job is required to be executed non-preemptively and in unison, i.e., it has to occupy the same time interval on all its allocated

From playlist Workshop: Approximation and Relaxation

How to use Parallel Computing in MATLAB

Parallel Computing Toolbox™ lets you solve computationally and data-intensive problems using multicore processors, GPUs, clusters, and clouds. Parallel computing is ideal for problems such as parameter sweeps, optimizations, and Monte Carlo simulations. Perform parallel computing concepts u

From playlist “How To” with MATLAB and Simulink

This lesson introduces the topic of scheduling and defines basic scheduling vocabulary. Site: http://mathispower4u.com

From playlist Scheduling

François Broquedis: A gentle introduction to parallel programming using OpenMP

Recorded during the "CEMRACS Summer school 2016: Numerical challenges in parallel scientific computing" on July 20, 2016 at the Centre International de Rencontres Mathématiques (Marseille, France). Filmmaker: Guillaume Hennenfent. Find this video and other talks given by worldwide mathem

From playlist Numerical Analysis and Scientific Computing

MIT 6.172 Performance Engineering of Software Systems, Fall 2018 Instructor: Julian Shun View the complete course: https://ocw.mit.edu/6-172F18 YouTube Playlist: https://www.youtube.com/playlist?list=PLUl4u3cNGP63VIBQVWguXxZZi0566y7Wf This lecture covers modern multi-core processors, the

From playlist MIT 6.172 Performance Engineering of Software Systems, Fall 2018

Data Science with Mathematica -- Parallelism

In this video of the Data Science with Mathematica track I demonstrate several features of the parallelism framework of the Mathematica system. I start with basic theory on parallelism itself and then show it can be used very efficiently in the Mathematica system. The playlist for the Da

From playlist Data Science with Mathematica

#381 How to work with a Real Time Operating System and is it any good? (FreeRTOS, ESP32)

Using a real operating system to simplify programming with the Arduino IDE. Is this possible and how? Let’s have a closer look! Operating systems were invented to simplify our lives. But, because they need a lot of resources, they only run on reasonable computers like the Raspberry Pi or a

From playlist ESP32

Live CEOing Ep 350: RemoteSubmit in Wolfram Language

In this episode of Live CEOing, Stephen Wolfram discusses the design of RemoteSubmit in the Wolfram Language. If you'd like to contribute to the discussion in future episodes, you can participate through this YouTube channel or through the official Twitch channel of Stephen Wolfram here: h

From playlist Behind the Scenes in Real-Life Software Design

2.6.5 Time versus Processors: Video

MIT 6.042J Mathematics for Computer Science, Spring 2015 View the complete course: http://ocw.mit.edu/6-042JS15 Instructor: Albert R. Meyer License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 6.042J Mathematics for Computer Science, Spring 2015

MIT 6.172 Performance Engineering of Software Systems, Fall 2018 Instructor: Julian Shun View the complete course: https://ocw.mit.edu/6-172F18 YouTube Playlist: https://www.youtube.com/playlist?list=PLUl4u3cNGP63VIBQVWguXxZZi0566y7Wf Professor Shun discusses races and parallelism, how ci

From playlist MIT 6.172 Performance Engineering of Software Systems, Fall 2018

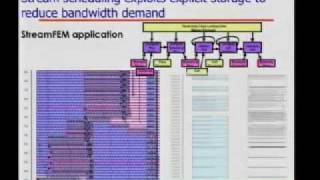

November 1, 2006 lecture by William Dally for the Stanford University Computer Systems Colloquium (EE 380). A discussion about the exploration of parallelism and locality with examples drawn from the Imagine and Merrimac projects and from three generations of stream programming systems.

From playlist Course | Computer Systems Laboratory Colloquium (2006-2007)

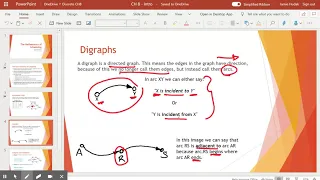

Intro to the Mathematics of Scheduling

Terminology explained includes preference schedule, digraphs, tasks, arcs, processors, and timelines.

From playlist Discrete Math