Hierarchical hidden Markov model

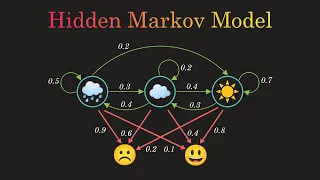

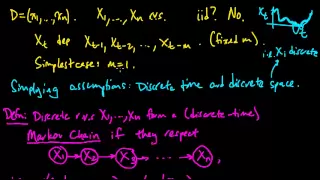

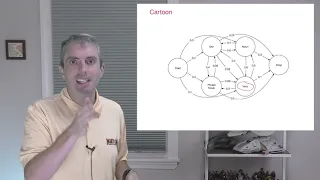

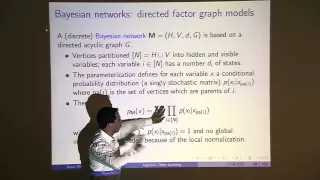

The hierarchical hidden Markov model (HHMM) is a statistical model derived from the hidden Markov model (HMM). In an HHMM, each state is a self-contained probabilistic model: more precisely, each state of the HHMM is itself an HHMM. Both HHMMs and HMMs are useful in many fields, including pattern recognition (Wikipedia).
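The recursive definition above can be illustrated with a small sketch. It is not a reference implementation: the `State` class, its field names, the `sample` function, and the toy model in `build_example` are all hypothetical and chosen only for illustration. The sketch assumes the common formulation in which leaf ("production") states emit symbols, internal states own a sub-model over their children, and each row of an internal state's transition table carries one extra entry for the probability of exiting back to the parent.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class State:
    """One HHMM state (hypothetical structure, for illustration only)."""
    name: str
    # Production (leaf) states carry an emission distribution over symbols.
    emissions: Optional[Dict[str, float]] = None
    # Internal states carry a sub-model over their child states.
    children: List["State"] = field(default_factory=list)
    initial: Optional[List[float]] = None  # start distribution over children
    # transitions[i][j]: child i -> child j; the last column is "exit to parent".
    transitions: Optional[List[List[float]]] = None

    def is_production(self) -> bool:
        return self.emissions is not None


def sample(state: State, rng: random.Random, out: List[str]) -> None:
    """Append symbols generated by `state` (and its sub-states) to `out`."""
    if state.is_production():
        symbols = list(state.emissions)
        weights = list(state.emissions.values())
        out.append(rng.choices(symbols, weights)[0])
        return
    # Internal state: enter a child, then move among children until exiting.
    i = rng.choices(range(len(state.children)), state.initial)[0]
    while True:
        sample(state.children[i], rng, out)  # the child is itself an HHMM
        row = state.transitions[i]
        j = rng.choices(range(len(row)), row)[0]
        if j == len(state.children):  # extra column: return control to parent
            return
        i = j


def build_example() -> State:
    """Build a two-level toy model (all probabilities are made up)."""
    leaf_a = State("leaf_a", emissions={"a": 0.9, "b": 0.1})
    leaf_b = State("leaf_b", emissions={"b": 0.8, "c": 0.2})
    leaf_c = State("leaf_c", emissions={"c": 1.0})
    left = State(
        "left",
        children=[leaf_a, leaf_b],
        initial=[1.0, 0.0],
        transitions=[[0.2, 0.5, 0.3], [0.3, 0.2, 0.5]],
    )
    right = State(
        "right",
        children=[leaf_c],
        initial=[1.0],
        transitions=[[0.5, 0.5]],
    )
    return State(
        "root",
        children=[left, right],
        initial=[0.6, 0.4],
        transitions=[[0.1, 0.4, 0.5], [0.4, 0.1, 0.5]],
    )


if __name__ == "__main__":
    sequence: List[str] = []
    sample(build_example(), random.Random(0), sequence)
    print("".join(sequence))
```

Because `sample` calls itself on each child, the same code handles any nesting depth: a flat HMM corresponds to a root whose children are all production states. Every exit probability in the toy model is positive, so sampling terminates with probability 1.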