Generalized Aitken-Steffensen Method

Generalized Aitken's delta-squared method and generalized Steffensen's method, applying fixed-point iteration to systems of nonlinear equations. The video derives generalized Aitken-Steffensen step by step and discusses induced and accelerated convergence behavior as well as quadratic or

From playlist Solving Systems of Nonlinear Equations
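
As a rough illustration of the idea behind this entry, the sketch below applies Aitken's delta-squared extrapolation inside a fixed-point loop (Steffensen's method) for a single scalar equation x = g(x). It is not the generalized method for systems that the video derives; the function name `steffensen_fixed_point`, the tolerance, and the test problem g(x) = cos(x) are assumptions chosen for the example.

```python
import math
from typing import Callable


def steffensen_fixed_point(g: Callable[[float], float], x0: float,
                           tol: float = 1e-12, max_iter: int = 50) -> float:
    """Scalar Steffensen iteration: Aitken's delta-squared applied to x = g(x).

    Hypothetical helper for illustration; scalar case only.
    """
    x = x0
    for _ in range(max_iter):
        x1 = g(x)                      # first fixed-point step
        x2 = g(x1)                     # second fixed-point step
        denom = x2 - 2.0 * x1 + x      # second difference of the three iterates
        if denom == 0.0:
            # The iterates have stopped changing, so x2 is already a fixed point
            # to working precision.
            return x2
        # Aitken extrapolation from the three iterates x, x1, x2.
        x_new = x - (x1 - x) ** 2 / denom
        if abs(x_new - x) < tol:
            return x_new
        x = x_new
    raise RuntimeError("no convergence within max_iter iterations")


# Illustrative problem (an assumption, not from the video): solve x = cos(x).
root = steffensen_fixed_point(math.cos, 1.0)
print(root)
```

The early return on a zero second difference avoids dividing by zero once the plain fixed-point steps no longer change the iterate.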

11_3_1 The Gradient of a Multivariable Function

Using the partial derivatives of a multivariable function to construct its gradient vector.

From playlist Advanced Calculus / Multivariable Calculus
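
A small sketch of the construction described in this entry: the gradient is the vector of partial derivatives, one per variable. Here each partial derivative is approximated with a central difference. The helper name `numerical_gradient`, the step size `h`, and the example function f(x, y) = x^2 + 3xy are assumptions made for illustration.

```python
from typing import Callable, List, Sequence


def numerical_gradient(f: Callable[[Sequence[float]], float],
                       point: Sequence[float], h: float = 1e-6) -> List[float]:
    """Approximate the gradient of f at `point` using central differences."""
    grad = []
    for i in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[i] += h                # nudge only the i-th variable
        backward[i] -= h
        # Central-difference estimate of the i-th partial derivative.
        grad.append((f(forward) - f(backward)) / (2.0 * h))
    return grad


def f(p: Sequence[float]) -> float:
    # Illustrative function: f(x, y) = x^2 + 3xy, with exact gradient (2x + 3y, 3x).
    x, y = p
    return x * x + 3.0 * x * y


grad = numerical_gradient(f, [1.0, 2.0])
print(grad)  # close to the exact gradient (8, 3) at the point (1, 2)
```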

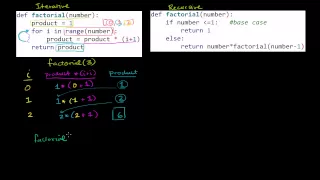

Comparing Iterative and Recursive Factorial Functions

Comparing iterative and recursive factorial functions

From playlist Computer Science
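
For reference, a minimal sketch of the two styles this entry compares. The function names are assumptions, and the video's own code may differ.

```python
def factorial_iterative(n: int) -> int:
    """Compute n! with a loop; uses constant stack depth."""
    if n < 0:
        raise ValueError("n must be non-negative")
    result = 1
    for k in range(2, n + 1):
        result *= k
    return result


def factorial_recursive(n: int) -> int:
    """Compute n! by recursion; stack depth grows linearly with n."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n <= 1:                 # base case: 0! = 1! = 1
        return 1
    return n * factorial_recursive(n - 1)


print(factorial_iterative(5), factorial_recursive(5))  # prints "120 120"
```

Both return the same values. In Python the recursive version hits the interpreter's recursion limit for large `n`, while the loop does not.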

Nikhil Bansal: On a generalization of iterated and randomized rounding

The lecture was held within the framework of the follow-up workshop to the Hausdorff Trimester Program: Combinatorial Optimization. We describe a new rounding procedure that optimally combines the benefits of both iterated rounding and randomized rounding. A nice feature of this procedure

From playlist Follow-Up-Workshop "Combinatorial Optimization"

Spectral properties of steplength selections in gradient (...) - Zanni - Workshop 1 - CEB T1 2019

Zanni (Univ. Modena) / 08.02.2019. Spectral properties of steplength selections in gradient methods: from unconstrained to constrained optimization. The steplength selection strategies have a remarkable effect on the efficiency of gradient-based methods for both unconstrained and constrai

From playlist 2019 - T1 - The Mathematics of Imaging

Physical insights from a numerical simulation of the dissipative Euler flow by Takeshi Matsumoto

Program: Turbulence: Problems at the Interface of Mathematics and Physics. Organizers: Uriel Frisch (Observatoire de la Côte d'Azur and CNRS, France), Konstantin Khanin (University of Toronto, Canada) and Rahul Pandit (IISc, India). Date & time: 16 January 2023 to 27 January 2023. Venue: Ramanuj

From playlist Turbulence: Problems at the Interface of Mathematics and Physics 2023

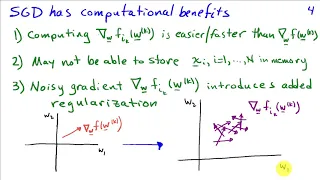

Jorge Nocedal: "Tutorial on Optimization Methods for Machine Learning, Pt. 1"

Graduate Summer School 2012: Deep Learning, Feature Learning. "Tutorial on Optimization Methods for Machine Learning, Pt. 1", Jorge Nocedal, Northwestern University. Institute for Pure and Applied Mathematics, UCLA, July 19, 2012. For more information: https://www.ipam.ucla.edu/programs/summ

From playlist GSS2012: Deep Learning, Feature Learning

Generalized Bisection Method for Systems of Nonlinear Equations

Generalization of the bisection method for solving systems of equations. This lesson explains the algorithm on a two-dimensional example, based on Harvey-Stenger's approach using bisecting triangles. It includes a visualization of the method in action on an example nonlinear system. Other meth

From playlist Solving Systems of Nonlinear Equations

Kavita Ramanan : Asymptotics of r-to-p norms for random matrices

Recorded during the meeting "Spectra, Algorithms and Random Walks on Random Networks" on January 16, 2019 at the Centre International de Rencontres Mathématiques (Marseille, France). Filmmaker: Guillaume Hennenfent. Find this video and other talks given by worldwide mathematicians on CIRM

From playlist Probability and Statistics

Peter Benner: Matrix Equations and Model Reduction, Lecture 5

Peter Benner from the Max Planck Institute presents: Matrix Equations and Model Reduction; Lecture 5

From playlist Gene Golub SIAM Summer School Videos

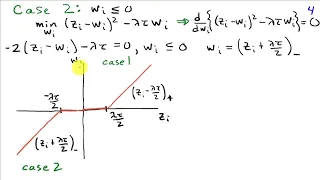

Lieven Vandenberghe: "Bregman proximal methods for semidefinite optimization."

Intersections between Control, Learning and Optimization 2020. "Bregman proximal methods for semidefinite optimization." Lieven Vandenberghe, University of California, Los Angeles (UCLA). Abstract: We discuss first-order methods for semidefinite optimization, based on non-Euclidean projec

From playlist Intersections between Control, Learning and Optimization 2020

Mitsuhiro Shishikura: Renormalization in complex dynamics

HYBRID EVENT. Recorded during the meeting "Advancing Bridges in Complex Dynamics" on September 21, 2021 by the Centre International de Rencontres Mathématiques (Marseille, France). Filmmaker: Guillaume Hennenfent. Find this video and other talks given by worldwide mathematicians on CIRM

From playlist Virtual Conference

Lecture 02-01 Linear Regression with multiple variables

Machine Learning by Andrew Ng [Coursera]. 0201 Multiple features; 0202 Gradient descent for multiple variables; 0203 Gradient descent in practice I: Feature Scaling; 0204 Gradient descent in practice II: Learning rate; 0205 Features and polynomial regression; 0206 Normal equation; 0207 Normal eq

From playlist Machine Learning by Professor Andrew Ng

OpenAI tackles Math - Formal Mathematics Statement Curriculum Learning (Paper Explained)

#openai #math #imo Formal mathematics is a challenging area for both humans and machines. For humans, formal proofs require tedious and meticulous specification of every last detail and result in very long, cumbersome, and verbose outputs. For machines, the discreteness and s

From playlist Papers Explained