C34 Expanding this method to higher order linear differential equations

In this video I expand the method of variation of parameters to higher-order (higher than two) linear ODEs.

From playlist Differential Equations

(8.1) A General Approach to Nonlinear Differential Questions

This video briefly describes the approach to gaining information about the solution to nonlinear differential equations. https://mathispower4u.com

From playlist Differential Equations: Complete Set of Course Videos

C07 Homogeneous linear differential equations with constant coefficients

An explanation of the method used to solve higher-order, linear, homogeneous ODEs with constant coefficients, using the auxiliary equation and its roots.

From playlist Differential Equations
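As a minimal sketch of the auxiliary-equation idea mentioned in the entry above, restricted to the second-order case a*y'' + b*y' + c*y = 0 for brevity: substituting y = exp(m*x) gives the auxiliary equation a*m**2 + b*m + c = 0, and the type of its roots determines the form of the general solution. The function names `auxiliary_roots` and `general_solution` are illustrative assumptions, not taken from the video.

```python
import cmath


def auxiliary_roots(a, b, c):
    """Roots of a*m**2 + b*m + c = 0, the auxiliary equation of a*y'' + b*y' + c*y = 0."""
    if a == 0:
        raise ValueError("a must be nonzero for a second-order equation")
    disc = cmath.sqrt(b * b - 4 * a * c)  # complex sqrt handles a negative discriminant
    return (-b + disc) / (2 * a), (-b - disc) / (2 * a)


def general_solution(a, b, c):
    """Return the general solution as a string, assuming real coefficients."""
    m1, m2 = auxiliary_roots(a, b, c)
    if abs(m1.imag) > 1e-12:
        # Complex conjugate roots alpha +/- i*beta
        alpha, beta = m1.real, abs(m1.imag)
        return f"y = exp({alpha:g}*x)*(C1*cos({beta:g}*x) + C2*sin({beta:g}*x))"
    r1, r2 = m1.real, m2.real
    if abs(r1 - r2) < 1e-12:
        # Repeated real root: the second solution picks up a factor of x
        return f"y = (C1 + C2*x)*exp({r1:g}*x)"
    # Two distinct real roots
    return f"y = C1*exp({r1:g}*x) + C2*exp({r2:g}*x)"


print(general_solution(1, -3, 2))  # distinct real roots
print(general_solution(1, 2, 1))   # repeated real root
print(general_solution(1, 0, 4))   # complex conjugate roots
```

For equations of order higher than two the same three cases apply root by root, with a repeated root of multiplicity k contributing the factors 1, x, ..., x**(k-1).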

Business Math - Linear Programming - General Solution : Optimization (2 of 6) Basic Ex. 2

Visit http://ilectureonline.com for more math and science lectures! In this video I will graph and demonstrate the general approach to minimize cost. Next video in this series can be seen at: http://youtu.be/oN9uLhuQq6o

From playlist BUSINESS MATH 3 - LINEAR PROGRAMMING GENERAL SOLUTION

Linear regression is used to compare sets or pairs of numerical data points. We use it to find a correlation between variables.

From playlist Learning medical statistics with python and Jupyter notebooks

Least squares method for simple linear regression

In this video I show you how to derive the equations for the coefficients of the simple linear regression line. The least squares method for the simple linear regression line requires the calculation of the intercept and the slope, commonly written as beta-sub-zero and beta-sub-one. Deriv

From playlist Machine learning
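The intercept and slope named in the least squares entry above can be sketched with the standard closed-form result: the slope is the sum of cross-deviations divided by the sum of squared x-deviations, and the intercept follows from the means. This is a minimal illustration under that assumption, not the derivation shown in the video; the function name `least_squares_line` and the sample data are hypothetical.

```python
def least_squares_line(xs, ys):
    """Return (beta0, beta1) for the line y = beta0 + beta1*x that minimizes squared error."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least two points")
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Sum of cross-deviations and sum of squared deviations in x
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    if s_xx == 0:
        raise ValueError("all x values are identical, so the slope is undefined")
    beta1 = s_xy / s_xx            # slope
    beta0 = y_bar - beta1 * x_bar  # intercept
    return beta0, beta1


# Hypothetical data lying exactly on y = 1 + 2x
beta0, beta1 = least_squares_line([1, 2, 3, 4], [3, 5, 7, 9])
print(beta0, beta1)
```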

Intro to Linear Systems: 2 Equations, 2 Unknowns - Dr Chris Tisdell Live Stream

Free ebook http://tinyurl.com/EngMathYT Basic introduction to linear systems. We discuss the case with 2 equations and 2 unknowns. A linear system is a mathematical model of a system based on the use of a linear operator. Linear systems typically exhibit features and properties that ar

From playlist Intro to Linear Systems

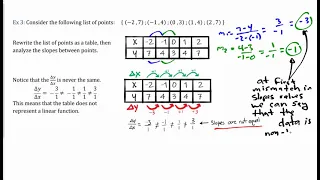

Define linear functions. Use function notation to evaluate linear functions. Learn to identify linear function from data, graphs, and equations.

From playlist Algebra 1

Aaron Sidford: Introduction to interior point methods for discrete optimization, lecture I

Over the past decade interior point methods (IPMs) have played a pivotal role in multiple algorithmic advances. IPMs have been leveraged to obtain improved running times for solving a growing list of both continuous and combinatorial optimization problems including maximum flow, bipartit

From playlist Summer School on modern directions in discrete optimization

Mod-01 Lec-01 Introduction and Overview

Advanced Numerical Analysis by Prof. Sachin C. Patwardhan, Department of Chemical Engineering, IIT Bombay. For more details on NPTEL visit http://nptel.ac.in

From playlist IIT Bombay: Advanced Numerical Analysis | CosmoLearning.org

Lieven Vandenberghe: "Bregman proximal methods for semidefinite optimization."

Intersections between Control, Learning and Optimization 2020 "Bregman proximal methods for semidefinite optimization." Lieven Vandenberghe - University of California, Los Angeles (UCLA) Abstract: We discuss first-order methods for semidefinite optimization, based on non-Euclidean projec

From playlist Intersections between Control, Learning and Optimization 2020

Optimisation - an introduction: Professor Coralia Cartis, University of Oxford

Coralia Cartis (BSc Mathematics, Babesh-Bolyai University, Romania; PhD Mathematics, University of Cambridge (2005)) joined the Mathematical Institute at Oxford and Balliol College in 2013 as Associate Professor in Numerical Optimization. Previously, she worked as a research scientist

From playlist Data science classes

Chi-Wang Shu: Stability of time discretizations for semi-discrete high order schemes for...

Recorded for the virtual meeting "Numerical Methods for Kinetic Equations" on May 14, 2021 by the Centre International de Rencontres Mathématiques (Marseille, France). Filmmaker: Guillaume Hennenfent. When designing high order schemes for solving time-dependent kinetic and related

From playlist Numerical Analysis and Scientific Computing

Valeria Simoncini: Computational methods for large-scale matrix equations and application to PDEs

Linear matrix equations such as the Lyapunov and Sylvester equations and their generalizations have classically played an important role in the analysis of dynamical systems, in control theory and in eigenvalue computation. More recently, matrix equations have emerged as a natural linear a

From playlist Numerical Analysis and Scientific Computing

Frank Noé: "Fundamentals of Artificial Intelligence and Machine Learning" (Part 1/2)

Watch part 2/2 here: https://youtu.be/gSLQ_2uFSiA Mathematical Challenges and Opportunities for Autonomous Vehicles Tutorials 2020 "Fundamentals of Artificial Intelligence and Machine Learning" (Part 1/2) Frank Noé - Freie Universität Berlin Institute for Pure and Applied Mathematics, U

From playlist Mathematical Challenges and Opportunities for Autonomous Vehicles 2020

Twenty third SIAM Activity Group on FME Virtual Talk Series

Date: Thursday, December 2, 2021, 1PM-2PM ET. Speaker 1: Renyuan Xu, University of Southern California. Speaker 2: Philippe Casgrain, ETH Zurich and Princeton University. Moderator: Ronnie Sircar, Princeton University. Join us for a series of online talks on topics related to mathematical fina

From playlist SIAM Activity Group on FME Virtual Talk Series

M. Grazia Speranza: "Fundamentals of optimization" (Part 1/2)

Watch part 2/2 here: https://youtu.be/ZJA4B2IePis Mathematical Challenges and Opportunities for Autonomous Vehicles Tutorials 2020 "Fundamentals of optimization" (Part 1/2) M. Grazia Speranza - University of Brescia Institute for Pure and Applied Mathematics, UCLA September 22, 2020 Fo

From playlist Mathematical Challenges and Opportunities for Autonomous Vehicles 2020

3 Ways to Build a Model for Control System Design | Understanding PID Control, Part 5

Tuning a PID controller requires that you have a representation of the system you’re trying to control. This could be the physical hardware or a mathematical representation of that hardware. If you have physical hardware, you could guess at some PID gains, run a test to see how it perfor

From playlist Understanding PID Control