(ML 19.2) Existence of Gaussian processes

Statement of the theorem on existence of Gaussian processes, and an explanation of what it is saying.

From playlist Machine Learning

(ML 19.1) Gaussian processes - definition and first examples

Definition of a Gaussian process. Elementary examples of Gaussian processes.

From playlist Machine Learning

Approximating Functions in a Metric Space

Approximations are common in many areas of mathematics from Taylor series to machine learning. In this video, we will define what is meant by a best approximation and prove that a best approximation exists in a metric space. Chapters 0:00 - Examples of Approximation 0:46 - Best Approximati

From playlist Approximation Theory

Gaussian Integral 7 Wallis Way

Welcome to the awesome 12-part series on the Gaussian integral. In this series of videos, I calculate the Gaussian integral in 12 different ways. Which method is the best? Watch and find out! In this video, I calculate the Gaussian integral by using a technique that is very similar to the

From playlist Gaussian Integral

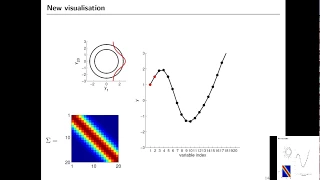

(ML 19.3) Examples of Gaussian processes (part 1)

Illustrative examples of several Gaussian processes, and visualization of samples drawn from these Gaussian processes. (Random planes, Brownian motion, squared exponential GP, Ornstein-Uhlenbeck, a periodic GP, and a symmetric GP).

From playlist Machine Learning

(ML 19.4) Examples of Gaussian processes (part 2)

Illustrative examples of several Gaussian processes, and visualization of samples drawn from these Gaussian processes. (Random planes, Brownian motion, squared exponential GP, Ornstein-Uhlenbeck, a periodic GP, and a symmetric GP).

From playlist Machine Learning

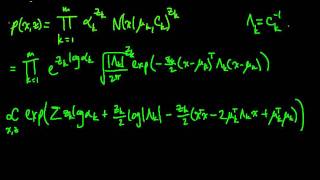

(ML 16.7) EM for the Gaussian mixture model (part 1)

Applying EM (Expectation-Maximization) to estimate the parameters of a Gaussian mixture model. Here we use the alternate formulation presented for (unconstrained) exponential families.

From playlist Machine Learning

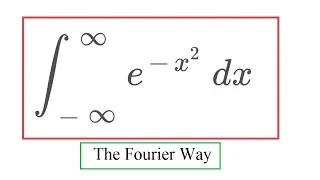

Gaussian Integral 10 Fourier Way

Welcome to the awesome 12-part series on the Gaussian integral. In this series of videos, I calculate the Gaussian integral in 12 different ways. Which method is the best? Watch and find out! In this video, I show how the Gaussian integral appears in the Fourier transform: Namely if you t

From playlist Gaussian Integral

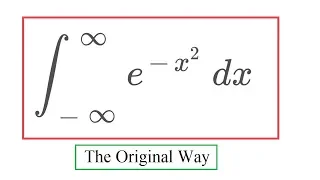

Gaussian Integral 8 Original Way

Welcome to the awesome 12-part series on the Gaussian integral. In this series of videos, I calculate the Gaussian integral in 12 different ways. Which method is the best? Watch and find out! In this video, I present the classical way using polar coordinates, the one that Laplace original

From playlist Gaussian Integral

From playlist COMP0168 (2020/21)

Slides and more information: https://mml-book.github.io/slopes-expectations.html

From playlist There and Back Again: A Tale of Slopes and Expectations (NeurIPS-2020 Tutorial)

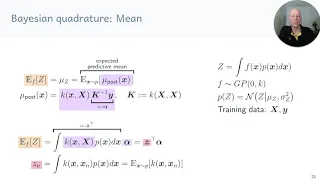

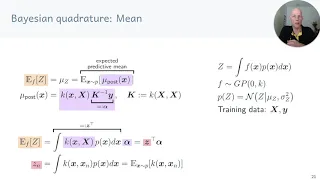

ML Tutorial: Gaussian Processes (Richard Turner)

Machine Learning Tutorial at Imperial College London: Gaussian Processes. Richard Turner (University of Cambridge), November 23, 2016.

From playlist Machine Learning Tutorials

ML Tutorial: Probabilistic Numerical Methods (Jon Cockayne)

Machine Learning Tutorial at Imperial College London: Probabilistic Numerical Methods. Jon Cockayne (University of Warwick), February 22, 2017.

From playlist Machine Learning Tutorials

Maxim Raginsky: "A mean-field theory of lazy training in two-layer neural nets"

High Dimensional Hamilton-Jacobi PDEs 2020 Workshop II: PDE and Inverse Problem Methods in Machine Learning "A mean-field theory of lazy training in two-layer neural nets: entropic regularization and controlled McKean-Vlasov dynamics" Maxim Raginsky - University of Illinois at Urbana-Cham

From playlist High Dimensional Hamilton-Jacobi PDEs 2020

Pathwise Conditioning and Non-Euclidean Gaussian Processes

A Google TechTalk, presented by Alexander Terenin, 2022-11-28 BayesOpt Speaker Series. ABSTRACT: In Gaussian processes, conditioning and computation of posterior distributions is usually done in a distributional fashion by working with finite-dimensional marginals. However, there is anoth

From playlist Google BayesOpt Speaker Series 2021-2022

Bayesian optimisation for likelihood-free cosmological (...) - Leclercq - Workshop 2 - CEB T3 2018

Leclercq (Imperial College) / 22.10.2018 Bayesian optimisation for likelihood-free cosmological inference

From playlist 2018 - T3 - Analytics, Inference, and Computation in Cosmology

PUSHING A GAUSSIAN TO THE LIMIT

Integrating a Gaussian is everyone's favorite party trick. But it can be used to describe something else. Link to Gaussian integral: https://www.youtube.com/watch?v=mcar5MDMd_A Link to my Skype Tutoring site: dotsontutoring.simplybook.me or email dotsontutoring@gmail.com if you have ques

From playlist Math/Derivation Videos