From playlist k-Nearest Neighbor Algorithm

k nearest neighbor (kNN): how it works

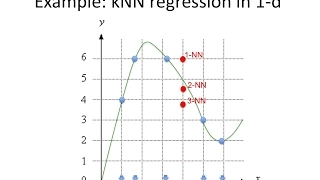

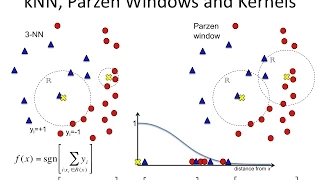

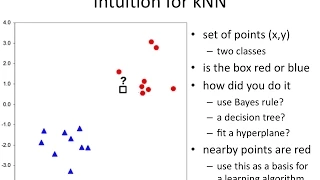

[http://bit.ly/k-NN] The k-nearest neighbor (k-NN) algorithm is based on the intuition that similar instances should have similar class labels (in classification) or similar target values (regression). The algorithm is very simple, but is capable of learning highly-complex non-linear decis

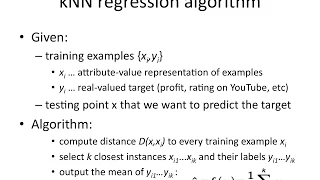

From playlist Nearest Neighbour Methods
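
The intuition in the k-NN description above (similar instances should get similar labels or target values) can be sketched in a few lines. This is a minimal illustration, not the video's own code: the function names `euclidean` and `knn_predict`, the default `k=3`, and the use of Euclidean distance are all assumptions made for the example.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Euclidean (L2) distance between two equal-length numeric sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_predict(train_X, train_y, query, k=3, regression=False):
    """Predict a label (or a target value) for `query` from its k nearest neighbours.

    Hypothetical helper for illustration: brute-force search over all training points.
    """
    # Rank every training instance by its distance to the query and keep the k closest.
    nearest = sorted(range(len(train_X)),
                     key=lambda i: euclidean(train_X[i], query))[:k]
    neighbour_targets = [train_y[i] for i in nearest]
    if regression:
        # Regression: average the neighbours' target values.
        return sum(neighbour_targets) / len(neighbour_targets)
    # Classification: majority vote among the neighbours' labels.
    return Counter(neighbour_targets).most_common(1)[0][0]
```

With two well-separated clusters labelled `"a"` and `"b"`, a query close to the first cluster is assigned `"a"`. Passing `regression=True` returns the mean target of the neighbours instead of a vote.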

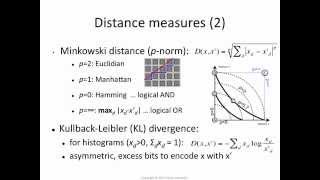

k-NN 4: which distance function?

[http://bit.ly/k-NN] The nearest-neighbour algorithm is sensitive to the choice of distance function. Euclidean distance (L2) is a common choice, but it may lead to sub-optimal performance. We discuss Minkowski (p-norm) distance functions, which generalise the Euclidean distance, and can a

From playlist Nearest Neighbour Methods
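
The Minkowski (p-norm) family mentioned in the description above can be written as one function in which `p=2` gives the Euclidean distance and `p=1` the Manhattan distance. A minimal sketch, assuming plain numeric tuples as input; the name `minkowski` and the handling of `p = inf` as the Chebyshev (maximum) distance are choices made for this example.

```python
import math


def minkowski(a, b, p=2.0):
    """Minkowski distance of order p between two equal-length numeric sequences.

    p=1 is Manhattan distance, p=2 is Euclidean, p=inf is Chebyshev.
    """
    if p < 1:
        # For p < 1 the triangle inequality fails, so it is not a proper metric.
        raise ValueError("p must be >= 1")
    diffs = [abs(x - y) for x, y in zip(a, b)]
    if math.isinf(p):
        # Limit as p grows: the largest coordinate difference dominates.
        return max(diffs)
    return sum(d ** p for d in diffs) ** (1.0 / p)
```

For the points `(0, 0)` and `(3, 4)`, the distance is 7 with `p=1`, 5 with `p=2`, and 4 with `p=math.inf`, which shows how the choice of `p` changes which neighbours count as nearest.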


Nexus Trimester - Alex Andoni (Columbia) 2/2

Sketching and Embeddings 2/2 Alex Andoni (Columbia) March 11, 2016 Abstract: Sketching for distance estimation is the problem where we need to design a possibly randomized function f from a metric space to short strings, such that from f(x) and f(y) we can estimate the distance between x

From playlist 2016-T1 - Nexus of Information and Computation Theory - CEB Trimester

Machine Learning Interview Questions And Answers | Data Science Interview Questions | Simplilearn

🔥 Enroll for FREE Machine Learning Course & Get your Completion Certificate: https://www.simplilearn.com/learn-machine-learning-basics-skillup?utm_campaign=MachineLearning&utm_medium=Description&utm_source=youtube This Machine Learning Interview Questions And Answers video will help you

From playlist Machine Learning with Python | Complete Machine Learning Tutorial | Simplilearn [2022 Updated]

Classification In Machine Learning | Machine Learning Tutorial | Python Training | Simplilearn

🔥Artificial Intelligence Engineer Program (Discount Coupon: YTBE15): https://www.simplilearn.com/masters-in-artificial-intelligence?utm_campaign=ClassificationInMachineLearning-xG-E--Ak5jg&utm_medium=Descriptionff&utm_source=youtube 🔥Professional Certificate Program In AI And Machine Learn

Machine Learning Fundamentals: The Confusion Matrix

One of the fundamental concepts in machine learning is the Confusion Matrix. Combined with Cross Validation, it's how we decide which machine learning method would be best for our dataset. Check out the video to find out how! NOTE: This video illustrates the confusion matrix concept as de

From playlist StatQuest
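
As a rough illustration of the confusion matrix mentioned above, the sketch below counts how often each actual class is predicted as each class. The function name `confusion_matrix`, the row/column convention (rows are actual classes, columns are predicted classes), and the toy labels are assumptions for this example; other sources transpose the layout.

```python
from collections import Counter


def confusion_matrix(actual, predicted, labels):
    """Return a nested list m where m[i][j] counts items whose actual class is
    labels[i] and whose predicted class is labels[j]."""
    counts = Counter(zip(actual, predicted))
    return [[counts[(a, p)] for p in labels] for a in labels]


def accuracy(matrix):
    """Fraction of items on the diagonal (correct predictions)."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return correct / total
```

Computing one such matrix per candidate method on held-out data gives a like-for-like basis for comparing them.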

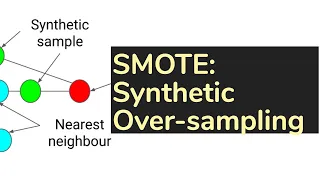

TDLS - Classics: SMOTE, Synthetic Minority Over-sampling Technique (algorithm)

Toronto Deep Learning Series, 26 November 2018 Paper: https://arxiv.org/pdf/1106.1813.pdf Speaker: Jason Grunhut (Telus Digital) Host: Telus Digital Date: Nov 26th, 2018 SMOTE: Synthetic Minority Over-sampling Technique An approach to the construction of classifiers from imbalanced da

From playlist Math and Foundations

KNN Algorithm In Machine Learning | KNN Algorithm Using Python | K Nearest Neighbor | Simplilearn

🔥Artificial Intelligence Engineer Program (Discount Coupon: YTBE15): https://www.simplilearn.com/masters-in-artificial-intelligence?utm_campaign=KNNInMLMachineLearning&utm_medium=Descriptionff&utm_source=youtube 🔥Professional Certificate Program In AI And Machine Learning: https://www.simp

From playlist Machine Learning with Python | Complete Machine Learning Tutorial | Simplilearn [2022 Updated]

MIT 6.0002 Introduction to Computational Thinking and Data Science, Fall 2016 View the complete course: http://ocw.mit.edu/6-0002F16 Instructor: John Guttag Prof. Guttag introduces supervised learning with nearest neighbor classification using feature scaling and decision trees. License:

From playlist MIT 6.0002 Introduction to Computational Thinking and Data Science, Fall 2016

TensorFuzz: Debugging Neural Networks with Coverage-Guided Fuzzing | AISC

For slides and more information on the paper, visit https://aisc.ai.science/events/2019-08-26 Discussion lead: Tahseen Shabab Motivation: Machine learning models are notoriously difficult to interpret and debug. This is particularly true of neural networks. In this work, we introduce a

From playlist Architecture Tuning

Class - 7 Data Science Training | K-Nearest Neighbors (KNN) Algorithm Tutorial | Edureka

(Edureka Meetup Community: http://bit.ly/2KMqgvf) Join our Meetup community and get access to 100+ tech webinars/ month for FREE: http://bit.ly/2KMqgvf Topics to be covered in this session: 1. Introduction To Classification Algorithms 2. What Is Random Forest? 3. Understanding Random For

From playlist Data Science Training Videos | Edureka Live Classes

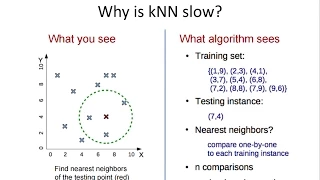

[http://bit.ly/k-NN] The k-NN algorithm is computationally expensive because we need to compute the distance from each test instance to every training instance. There is no exact algorithm for doing this quickly, but we do have approximate methods: k-d trees for low-dimensional data, invert

From playlist Nearest Neighbour Methods
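
To make the k-d tree idea mentioned above concrete, here is a small sketch that builds a tree by splitting on one coordinate per level and then searches it for the nearest point. It is an illustration under stated assumptions, not the video's implementation: the names `build_kdtree`, `sq_dist`, and `nearest` are hypothetical, nodes are plain dictionaries, and this variant backtracks into the far branch whenever it could still hold a closer point, so it returns the true nearest neighbour. Approximate variants typically cut that backtracking short to save time.

```python
def build_kdtree(points, depth=0):
    """Recursively build a k-d tree from a list of equal-length tuples."""
    if not points:
        return None
    axis = depth % len(points[0])           # cycle through the coordinates
    points = sorted(points, key=lambda p: p[axis])
    mid = len(points) // 2                  # median point becomes this node
    return {
        "point": points[mid],
        "axis": axis,
        "left": build_kdtree(points[:mid], depth + 1),
        "right": build_kdtree(points[mid + 1:], depth + 1),
    }


def sq_dist(a, b):
    """Squared Euclidean distance (enough for comparing distances)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(node, query, best=None):
    """Return the stored point closest to `query`."""
    if node is None:
        return best
    if best is None or sq_dist(node["point"], query) < sq_dist(best, query):
        best = node["point"]
    diff = query[node["axis"]] - node["point"][node["axis"]]
    near, far = ((node["left"], node["right"]) if diff < 0
                 else (node["right"], node["left"]))
    best = nearest(near, query, best)
    # Visit the far side only if the splitting plane is closer than the best match.
    if diff ** 2 < sq_dist(best, query):
        best = nearest(far, query, best)
    return best
```

The pruning test in the last step is what saves work in low dimensions. In high dimensions the splitting plane is rarely farther away than the best match, so most branches end up being visited and the advantage over brute force shrinks.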