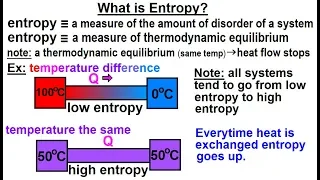

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (10 of 25) What is Entropy?

Visit http://ilectureonline.com for more math and science lectures! In this video I explain and give examples of what entropy is. 1) Entropy is a measure of the amount of disorder (randomness) of a system. 2) Entropy is a measure of thermodynamic equilibrium. Low entropy implies heat flow t

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

A better description of entropy

I use this Stirling engine to explain entropy. Entropy is normally described as a measure of disorder, but I don't think that's helpful. Here's a better description. Visit my blog here: http://stevemould.com Follow me on Twitter here: http://twitter.com/moulds Buy nerdy maths things here:

From playlist Best of

Entropy is often taught as a measure of how disordered or how mixed up a system is, but this definition never really sat right with me. How is "disorder" defined and why is one way of arranging things any more disordered than another? It wasn't until much later in my physics career that I

From playlist Thermal Physics/Statistical Physics

The lecture was held within the framework of the Hausdorff Trimester Program "Dynamics: Topology and Numbers": Conference on “Transfer operators in number theory and quantum chaos” Abstract: In many classical compact settings, entropy is upper semicontinuous, i.e., given a con

From playlist Conference: Transfer operators in number theory and quantum chaos

Entropy production during free expansion of an ideal gas by Subhadip Chakraborti

Abstract: According to the second law, the entropy of an isolated system increases during its evolution from one equilibrium state to another. The free expansion of a gas, on removal of a partition in a box, is an example where we expect to see such an increase of entropy. The constructi

From playlist Seminar Series
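
As a rough worked example of the entropy increase mentioned in the abstract above, consider the standard textbook result for an ideal gas. The symbols here are assumptions for illustration and do not come from the talk: $n$ is the amount of gas in moles, $R$ the gas constant, and $V_i$, $V_f$ the volumes before and after the partition is removed. Because the temperature of an ideal gas is unchanged in a free expansion, the entropy change between the two equilibrium states is

$$
\Delta S = nR \ln\frac{V_f}{V_i} > 0 \quad \text{for } V_f > V_i .
$$

For instance, doubling the volume gives $\Delta S = nR \ln 2$.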

Maxwell-Boltzmann distribution

Entropy and the Maxwell-Boltzmann velocity distribution. Also discusses why this is different than the Bose-Einstein and Fermi-Dirac energy distributions for quantum particles. My Patreon page is at https://www.patreon.com/EugeneK 00:00 Maxwell-Boltzmann distribution 02:45 Higher Temper

From playlist Physics

(IC 3.1) Entropy as a lower bound on expected length (part 1)

The expected codeword length of a symbol code is bounded below by the entropy of the source. A playlist of these videos is available at: http://www.youtube.com/playlist?list=PLE125425EC837021F

From playlist Information theory and Coding
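
The bound stated above can be checked numerically. The following is a minimal sketch, not taken from the video: it builds a Huffman code (one particular symbol code, chosen here as an assumption) for a hypothetical source distribution and compares its expected codeword length with the source entropy in bits. The function names `entropy` and `huffman_lengths` are illustrative.

```python
import heapq
from math import log2


def entropy(probs):
    """Shannon entropy in bits of a finite probability distribution."""
    return -sum(p * log2(p) for p in probs if p > 0)


def huffman_lengths(probs):
    """Return the codeword length of each symbol in a binary Huffman code."""
    # Heap entries: (probability, tie_breaker, symbol indices in this subtree).
    heap = [(p, i, [i]) for i, p in enumerate(probs)]
    heapq.heapify(heap)
    lengths = [0] * len(probs)
    counter = len(probs)  # unique tie-breaker for merged nodes
    while len(heap) > 1:
        p1, _, s1 = heapq.heappop(heap)
        p2, _, s2 = heapq.heappop(heap)
        # Merging two subtrees adds one bit to every symbol inside them.
        for s in s1 + s2:
            lengths[s] += 1
        heapq.heappush(heap, (p1 + p2, counter, s1 + s2))
        counter += 1
    return lengths


def expected_length(probs, lengths):
    """Expected codeword length under the source distribution."""
    return sum(p * l for p, l in zip(probs, lengths))


if __name__ == "__main__":
    # Hypothetical source distribution, chosen only for illustration.
    probs = [0.4, 0.3, 0.2, 0.1]
    lengths = huffman_lengths(probs)
    print("codeword lengths:", lengths)
    print("expected length :", expected_length(probs, lengths))
    print("entropy (bits)  :", entropy(probs))
```

For a dyadic distribution such as `[0.5, 0.25, 0.125, 0.125]` the expected length should coincide with the entropy; for other distributions it should be strictly larger.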

Topics in Combinatorics lecture 10.0 --- The formula for entropy

In this video I present the formula for the entropy of a random variable that takes values in a finite set, prove that it satisfies the entropy axioms, and prove that it is the only formula that satisfies the entropy axioms. 0:00 The formula for entropy and proof that it satisfies the ax

From playlist Topics in Combinatorics (Cambridge Part III course)
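
For reference, the formula in question is presumably the standard Shannon entropy. The notation below is an assumption, since the lecture's own symbols and choice of logarithm base are not given here: for a random variable $X$ taking values in a finite set $A$ with $p(x) = \mathbb{P}[X = x]$,

$$
H(X) = -\sum_{x \in A} p(x) \log p(x),
$$

with the convention $0 \log 0 = 0$. As a quick check, if $X$ is uniform on a set of size $n$, then $H(X) = \log n$.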

Breaking of ensemble equivalence in complex networks by Andrea Roccaverde

Large deviation theory in statistical physics: Recent advances and future challenges DATE: 14 August 2017 to 13 October 2017 VENUE: Madhava Lecture Hall, ICTS, Bengaluru Large deviation theory made its way into statistical physics as a mathematical framework for studying equilibrium syst

From playlist Large deviation theory in statistical physics: Recent advances and future challenges

Breaking of Ensemble Equivalence in dense random graphs by Nicos Starreveld

Large deviation theory in statistical physics: Recent advances and future challenges DATE: 14 August 2017 to 13 October 2017 VENUE: Madhava Lecture Hall, ICTS, Bengaluru Large deviation theory made its way into statistical physics as a mathematical framework for studying equilibrium syst

From playlist Large deviation theory in statistical physics: Recent advances and future challenges

Stanford CS330: Multi-Task and Meta-Learning, 2019 | Lecture 5 - Bayesian Meta-Learning

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai Assistant Professor Chelsea Finn, Stanford University http://cs330.stanford.edu/

From playlist Stanford CS330: Deep Multi-Task and Meta Learning

Statistical Mechanics of Athermal Systems: Edwards Ensemble, ... (Lecture 2) by Bulbul Chakraborty

PROGRAM ENTROPY, INFORMATION AND ORDER IN SOFT MATTER ORGANIZERS: Bulbul Chakraborty, Pinaki Chaudhuri, Chandan Dasgupta, Marjolein Dijkstra, Smarajit Karmakar, Vijaykumar Krishnamurthy, Jorge Kurchan, Madan Rao, Srikanth Sastry and Francesco Sciortino DATE: 27 August 2018 to 02 Novemb

From playlist Entropy, Information and Order in Soft Matter

Stanford CS330: Deep Multi-task & Meta Learning I 2021 I Lecture 7

For more information about Stanford's Artificial Intelligence professional and graduate programs visit: https://stanford.io/ai To follow along with the course, visit: http://cs330.stanford.edu/fall2021/index.html To view all online courses and programs offered by Stanford, visit: http:/

From playlist Stanford CS330: Deep Multi-Task & Meta Learning I Autumn 2021I Professor Chelsea Finn

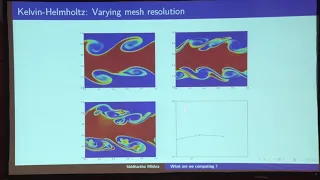

On convergence of numerical schemes for hyperbolic systems of conservation – S. Mishra – ICM2018

Numerical Analysis and Scientific Computing Invited Lecture 15.9 On the convergence of numerical schemes for hyperbolic systems of conservation laws Siddhartha Mishra Abstract: A large variety of efficient numerical methods, of the finite volume, finite difference and DG type, have been

From playlist Numerical Analysis and Scientific Computing

Statistical Mechanics of Athermal Systems: Edwards Ensemble, Entropy... (Lecture 3)

PROGRAM ENTROPY, INFORMATION AND ORDER IN SOFT MATTER ORGANIZERS: Bulbul Chakraborty, Pinaki Chaudhuri, Chandan Dasgupta, Marjolein Dijkstra, Smarajit Karmakar, Vijaykumar Krishnamurthy, Jorge Kurchan, Madan Rao, Srikanth Sastry and Francesco Sciortino DATE: 27 August 2018 to 02 Novemb

From playlist Entropy, Information and Order in Soft Matter

Stanford CS330: Deep Multi-task and Meta Learning | 2020 | Lecture 8 - Bayesian Meta-Learning

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai To follow along with the course, visit: https://cs330.stanford.edu/ To view all online courses and programs offered by Stanford, visit: http://online.stanford.

From playlist Stanford CS330: Deep Multi-task and Meta Learning | Autumn 2020

Andrew White: "Maximum Entropy Methods for Combining Physics-Based Simulation with Empirical Data"

Machine Learning for Physics and the Physics of Learning 2019 Workshop II: Interpretable Learning in Physical Sciences "Maximum Entropy Methods for Combining Physics-Based Simulation with Empirical Data" Andrew White, University of Rochester Abstract: Physics-based simulation models like

From playlist Machine Learning for Physics and the Physics of Learning 2019

The ENTROPY EQUATION and its Applications | Thermodynamics and Microstates EXPLAINED

Entropy is a hotly discussed topic... but how can we actually CALCULATE the entropy of a system? (Note: The written document discussed here can be found in the pinned comment below!) Hey everyone, I'm back with a new video, and this time it's a bit different to my usual ones! In this vid

From playlist Thermodynamics by Parth G

[Live Machine Learning Research] Plain Self-Ensembles (I actually DISCOVER SOMETHING) - Part 1

I share my progress of implementing a research idea from scratch. I attempt to build an ensemble model out of students of label-free self-distillation without any additional data or augmentation. Turns out, it actually works, and interestingly, the more students I employ, the better the ac

From playlist General Machine Learning

Teach Astronomy - Entropy of the Universe

http://www.teachastronomy.com/ The entropy of the universe is a measure of its disorder or chaos. If the laws of thermodynamics apply to the universe as a whole as they do to individual objects or systems within the universe, then the fate of the universe must be to increase in entropy.

From playlist 23. The Big Bang, Inflation, and General Cosmology 2