A better description of entropy

I use this Stirling engine to explain entropy. Entropy is normally described as a measure of disorder but I don't think that's helpful. Here's a better description. Visit my blog here: http://stevemould.com Follow me on twitter here: http://twitter.com/moulds Buy nerdy maths things here:

From playlist Best of

An intro to the core protocols of the Internet, including IPv4, TCP, UDP, and HTTP. Part of a larger series teaching programming. See codeschool.org

From playlist The Internet

Entropy is often taught as a measure of how disordered or how mixed up a system is, but this definition never really sat right with me. How is "disorder" defined and why is one way of arranging things any more disordered than another? It wasn't until much later in my physics career that I

From playlist Thermal Physics/Statistical Physics

Star Network - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

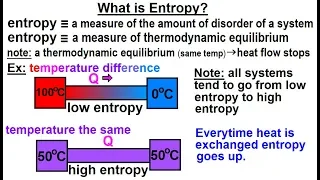

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (10 of 25) What is Entropy?

Visit http://ilectureonline.com for more math and science lectures! In this video I explain and give examples of what entropy is. 1) Entropy is a measure of the amount of disorder (randomness) of a system. 2) Entropy is a measure of thermodynamic equilibrium. Low entropy implies heat flow t

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

From playlist Week 9: Social Networks

The ENTROPY EQUATION and its Applications | Thermodynamics and Microstates EXPLAINED

Entropy is a hotly discussed topic... but how can we actually CALCULATE the entropy of a system? (Note: The written document discussed here can be found in the pinned comment below!) Hey everyone, I'm back with a new video, and this time it's a bit different to my usual ones! In this vid

From playlist Thermodynamics by Parth G

Erik Bollt - Identify Interactions in Complex Networked Dynamical Systems through Causation Entropy

Recorded 30 August 2022. Erik Bollt of Clarkson University Math/ECE presents "Identifying Interactions in Complex Networked Dynamical Systems through Causation Entropy" at IPAM's Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond. Abstract: Inferring the cou

From playlist 2022 Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond

Neural Networks Part 7: Cross Entropy Derivatives and Backpropagation

Here is a step-by-step guide that shows you how to take the derivative of the Cross Entropy function for Neural Networks and then shows you how to use that derivative for Backpropagation. NOTE: This StatQuest assumes that you are already familiar with... The main ideas behind neural netwo

From playlist StatQuest

Neural Networks Part 6: Cross Entropy

When a Neural Network is used for classification, we usually evaluate how well it fits the data with Cross Entropy. This StatQuest gives you an overview of how to calculate Cross Entropy and Total Cross Entropy. NOTE: This StatQuest assumes that you are already familiar with... The main

From playlist StatQuest
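
As a rough illustration of the calculation described above, here is a minimal sketch. It assumes the network's outputs are already probabilities (for example, after a softmax) and that each sample has a single correct class. The function names and the sample values are hypothetical and not taken from the video, and "total" cross entropy is assumed here to mean the sum over samples.

```python
import math

def cross_entropy(predicted_probs, true_index):
    """Cross entropy for one sample: -log of the probability given to the true class."""
    return -math.log(predicted_probs[true_index])

def total_cross_entropy(samples):
    """Sum of per-sample cross entropies over (predicted_probs, true_index) pairs."""
    return sum(cross_entropy(probs, idx) for probs, idx in samples)

# Hypothetical predictions for two samples with three classes each.
samples = [
    ([0.7, 0.2, 0.1], 0),  # true class is index 0
    ([0.1, 0.8, 0.1], 1),  # true class is index 1
]
print(total_cross_entropy(samples))
```

The closer the predicted probability for the true class is to 1, the closer that sample's contribution is to 0, so a lower total indicates a better fit.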

SupSup: Supermasks in Superposition (Paper Explained)

Supermasks are binary masks of a randomly initialized neural network that result in the masked network performing well on a particular task. This paper considers the problem of (sequential) Lifelong Learning and trains one Supermask per Task, while keeping the randomly initialized base net

From playlist Papers Explained

Cory Hauck: Approximate entropy-based moment closures

Recorded during the meeting "Numerical Methods for Kinetic Equations 'NumKin21'" on June 17, 2021 by the Centre International de Rencontres Mathématiques (Marseille, France) Filmmaker: Jean Petit Find this video and other talks given by worldwide mathematicians on CIRM's Audiovisual M

From playlist Virtual Conference

Supervised Contrastive Learning

The cross-entropy loss has been the default in deep learning for the last few years for supervised learning. This paper proposes a new loss, the supervised contrastive loss, and uses it to pre-train the network in a supervised fashion. The resulting model, when fine-tuned to ImageNet, achi

From playlist General Machine Learning

Unsupervised domain adaptation with application to urban (...) - Pérez - Workshop 3 - CEB T1 2019

Patrick Pérez (Valeo) / 04.04.2019 Unsupervised domain adaptation with application to urban scene analysis. In numerous real world applications, no matter how much energy is devoted to build real and/or synthetic training datasets, there remains a large distribution gap between these dat

From playlist 2019 - T1 - The Mathematics of Imaging

Riccardo Zecchina: "Evidence for local entropy optimization in machine learning, physics and neu..."

Machine Learning for Physics and the Physics of Learning 2019 Workshop IV: Using Physical Insights for Machine Learning "Evidence for local entropy optimization in machine learning, physics and neuroscience" Riccardo Zecchina - Bocconi University Institute for Pure and Applied Mathemat

From playlist Machine Learning for Physics and the Physics of Learning 2019

Entropy is a very weird and misunderstood quantity. Hopefully, this video can shed some light on the "disorder" we find ourselves in... ________________________________ More videos at: http://www.youtube.com/TheScienceAsylum T-Shirts: http://scienceasylum.spreadshirt.com/ Facebook: http:/

From playlist Thermodynamics

Danielle Bassett - Probing the costly dynamics of cognitive effort - IPAM at UCLA

Recorded 01 September 2022. Danielle Bassett of the University of Pennsylvania presents "Probing the costly dynamics of cognitive effort" at IPAM's Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond. Abstract: Cognitive effort has long been an important expl

From playlist 2022 Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond