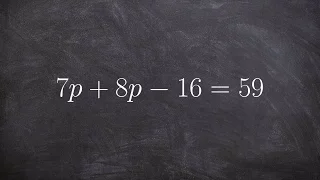

Solving an equation with variable on the same side

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations with Two Variables

How to solve a linear equation when there are no solutions

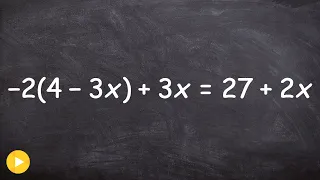

👉 Learn how to solve multi-step equations with parenthesis and variable on both sides of the equation. An equation is a statement stating that two values are equal. A multi-step equation is an equation which can be solved by applying multiple steps of operations to get to the solution. To

From playlist How to Solve Multi Step Equations with Parenthesis on Both Sides

How to solve a multi step equation with a variable on both sides

👉 Learn how to solve multi-step equations with parenthesis and variable on both sides of the equation. An equation is a statement stating that two values are equal. A multi-step equation is an equation which can be solved by applying multiple steps of operations to get to the solution. To

From playlist How to Solve Multi Step Equations with Parenthesis on Both Sides

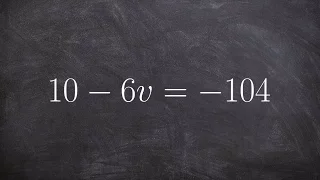

How to solve simple two step equations

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations with a Fraction

Workshop on Theory of Deep Learning: Where next? Topic: Spotlight Talks. Speakers: Vaishnavh Nagarajan, Preetum Nakkiran, Xiaowu Dai, Weijie Su. Date: October 16, 2019. For more videos, please visit http://video.ias.edu

From playlist Mathematics

Statistical Learning: 10.7 Interpolation and Double Descent

Statistical Learning, featuring Deep Learning, Survival Analysis and Multiple Testing. You can take Statistical Learning as an online course on edX, and you can choose a verified path and get a certificate for its completion: https://www.edx.org/course/statistical-learning

From playlist Statistical Learning

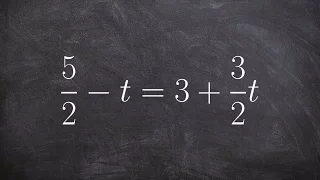

Solving a multi-step equation by multiplying by the denominator

👉 Learn how to solve multi-step equations with variable on both sides of the equation. An equation is a statement stating that two values are equal. A multi-step equation is an equation which can be solved by applying multiple steps of operations to get to the solution. To solve a multi-s

From playlist How to Solve Multi Step Equations with Variables on Both Sides

[LIVE] Rasa Reading Group: Grokking: Generalisation beyond overfitting on small algorithmic datasets

The Reading Group is back for a special edition! Join us as we read an ML paper together live. This week we'll be reading the paper "Grokking: Generalisation beyond overfitting on small algorithmic datasets" by Alethea Power, Yuri Burda, Harri Edwards, Igor Babuschkin & Vedant Misra from Ope

From playlist Rasa Reading Group

Solving for X in an equation using subtraction and division

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations

Solving a linear equation with a variable divided by a number

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations with a Fraction

DDPS | A mathematical understanding of modern Machine Learning: theory, algorithms and applications

In this talk from July 15, 2021, Brown University assistant professor Yeonjong Shin discusses the development of robust and reliable machine learning algorithms based on insights gained from mathematical analysis. Description: Modern machine learning (ML) has achieved unprecedented em

From playlist Data-driven Physical Simulations (DDPS) Seminar Series

Solving an equation with the variable on the same side ex 3, 17=p–3–3p

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations with Two Variables
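
As an illustration of the approach described for this entry, the example equation $17 = p - 3 - 3p$ can be worked through by combining like terms and then applying inverse operations. This is a sketch of one possible sequence of steps, not a transcript of the video:

$$
\begin{aligned}
17 &= p - 3 - 3p \\
17 &= -2p - 3 && \text{combine like terms: } p - 3p = -2p \\
20 &= -2p && \text{add 3 to both sides} \\
p &= -10 && \text{divide both sides by } -2
\end{aligned}
$$

Substituting back gives $-10 - 3 - 3(-10) = -10 - 3 + 30 = 17$, which matches the left-hand side.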

Solving a linear equation with a variable on both sides

👉 Learn how to solve multi-step equations with parenthesis and variable on both sides of the equation. An equation is a statement stating that two values are equal. A multi-step equation is an equation which can be solved by applying multiple steps of operations to get to the solution. To

From playlist How to Solve Multi Step Equations with Parenthesis on Both Sides

Gradient Descent, Step-by-Step

Gradient Descent is the workhorse behind most of Machine Learning. When you fit a machine learning method to a training dataset, you're probably using Gradient Descent. It can optimize parameters in a wide variety of settings. Since it's so fundamental to Machine Learning, I decided to mak

From playlist Optimizers in Machine Learning

Machine Learning Lecture 36 "Neural Networks / Deep Learning Continued" -Cornell CS4780 SP17

Lecture Notes: http://www.cs.cornell.edu/courses/cs4780/2018fa/lectures/lecturenote20.pdf

From playlist CORNELL CS4780 "Machine Learning for Intelligent Systems"

Brauer groups, Severi-Brauer schemes, Azumaya algebras, twisted sheaves

From playlist Étale cohomology and the Weil conjectures

Wee Teck Gan - 2/2 Explicit Constructions of Automorphic Forms

I will discuss the theory of theta correspondence, highlighting basic principles and recent results, before explaining how theta correspondence can now be viewed as part of the relative Langlands program. I will then discuss other methods of construction of automorphic forms, such as autom

From playlist 2022 Summer School on the Langlands program

Étale cohomology lecture IV - 9/1/2020

Morphisms of sites, fppf descent part 1

From playlist Étale cohomology and the Weil conjectures

The Seemingly IMPOSSIBLE Cyclist And Motorcycles Riddle

Thanks to Alessio from Italy for creating this problem and sending it to me! A cyclist and a series of motorcycles are racing to the top of a long climb, and the cyclist doubles back. Given how many motorcycles passed the cyclist, can you figure out the motorcycles' speed? (precise wording

From playlist Math Puzzles, Riddles And Brain Teasers

Solving an equation by combining like terms

👉 Learn how to solve two step linear equations. A linear equation is an equation whose highest exponent on its variable(s) is 1. To solve for a variable in a two step linear equation, we first isolate the variable by using inverse operations (addition or subtraction) to move like terms to

From playlist Solve Two Step Equations with Two Variables