Lecture: Unconstrained Optimization (Derivative Methods)

Derivative-based methods, including gradient descent, are some of the workhorse algorithms of modern optimization.

From playlist Beginning Scientific Computing
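
As a rough illustration of the gradient descent idea named in the description above, here is a minimal sketch. The quadratic test function, the fixed step size, and the names `gradient_descent` and `grad_f` are assumptions chosen for the example and are not taken from the lecture.

```python
def gradient_descent(grad, x0, step=0.1, tol=1e-8, max_iter=10_000):
    """Fixed-step gradient descent; stops when the gradient norm drops below tol."""
    x = list(x0)
    for _ in range(max_iter):
        g = grad(x)
        # Euclidean norm of the gradient as the stopping criterion
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break
        # Step against the gradient direction
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x


# Hypothetical test function: f(x, y) = (x - 1)^2 + 2 (y + 3)^2, minimized at (1, -3)
def grad_f(p):
    x, y = p
    return [2 * (x - 1), 4 * (y + 3)]


minimum = gradient_descent(grad_f, [0.0, 0.0])
```

A fixed step only works here because the test function is a well-conditioned quadratic; practical implementations usually choose the step with a line search.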

Katya Scheinberg: "Recent advances in Derivative-Free Optimization and its connection to reinfor..."

Machine Learning for Physics and the Physics of Learning 2019 Workshop I: From Passive to Active: Generative and Reinforcement Learning with Physics "Recent advances in Derivative-Free Optimization and its connection to reinforcement learning" Katya Scheinberg, Cornell University Abstrac

From playlist Machine Learning for Physics and the Physics of Learning 2019

Evaluating Limits Definition of Derivative

I work through 2 examples of evaluating the limit definition of a derivative. Check out http://www.ProfRobBob.com, where you will find my lessons organized by chapters within each subject. If you'd like to make a donation to support my efforts look for the "Tip the Teacher" button on my chann

From playlist Calculus (New)

Calculus: We present a procedure for solving word problems on optimization using derivatives. Examples include the fence problem and the minimum distance from a point to a line problem.

From playlist Calculus Pt 1: Limits and Derivatives

Definition of Derivative Example: f(x) = x + 1/(x+1)

We compute one more example of taking a derivative not by applying derivative rules, but by applying the definition. That is, the limit computation where the slopes of secant lines approach the slope of the tangent line in the limit as h tends to zero.

From playlist Calculus I (Limits, Derivative, Integrals) **Full Course**
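
For the function in the title above, f(x) = x + 1/(x+1), the limit computation can be sketched as follows. This is a reconstruction from the definition for x ≠ -1, not a transcript of the video.

```latex
\begin{aligned}
f'(x) &= \lim_{h \to 0} \frac{f(x+h) - f(x)}{h}
       = \lim_{h \to 0} \frac{1}{h}\left[ h + \frac{1}{x+h+1} - \frac{1}{x+1} \right] \\
      &= \lim_{h \to 0} \frac{1}{h}\left[ h - \frac{h}{(x+h+1)(x+1)} \right]
       = \lim_{h \to 0} \left[ 1 - \frac{1}{(x+h+1)(x+1)} \right] \\
      &= 1 - \frac{1}{(x+1)^2}.
\end{aligned}
```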

Partial Derivatives and the Gradient of a Function

We've introduced the differential operator before, during a few of our calculus lessons. But now we will be using this operator more and more over the prime symbol we are used to when describing differentiation, as from now on we will frequently be differentiating with respect to a specifi

From playlist Mathematics (All Of It)

Where Does the Definition of the Derivative Come From?

A student asked about the definition of the derivative in class so I derived the definition really quickly. We had just finished covering infinite limits so we were not talking about derivatives yet, but it was such a good question that I thought I should share this here. I hope this hel

From playlist Calculus 1 Exam 1 Playlist

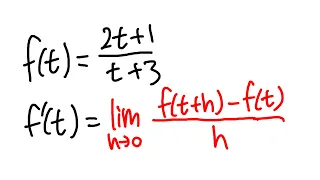

definition of derivative for a rational function

Here we will use the definition of derivative to find the derivative of a rational function. This is a slightly harder calculus 1 problem so be sure you practice it a few times and make sure you can do it on your own. Get a derivative t-shirt: 👉 https://bit.ly/derivativetshirt Use "WELCO

From playlist Sect 2.7, Definition of Derivative

Xavier-Ros Oton: Regularity of free boundaries in obstacle problems Lecture I

Free boundary problems are those described by PDEs that exhibit a priori unknown (free) interfaces or boundaries. Such problems appear in Physics, Geometry, Probability, Biology, or Finance, and the study of solutions and free boundaries uses methods from PDE, Calculus of Variations

From playlist Hausdorff School: Trending Tools

A geometric integration approach to non-smooth (...) - Schoenlieb/Riis - Workshop 1 - CEB T1 2019

Schoenlieb/Riis (University of Cambridge) / 04.02.2019 A geometric integration approach to non-smooth and non-convex optimisation The optimisation of nonsmooth, nonconvex functions without access to gradients is a particularly challenging problem that is frequently encountered, for exam

From playlist 2019 - T1 - The Mathematics of Imaging

How to compute partial derivatives

Free ebook http://tinyurl.com/EngMathYT Basic examples showing how to compute partial derivatives. Such ideas are seen in multivariable calculus.

From playlist A second course in university calculus.

Can't understand Lagrange Multipliers??

We discuss the idea behind Lagrange Multipliers, why they work, as well as why and when they are useful. External Images Used: 1. https://www.greenbelly.co/pages/contour-lines 2. https://mathoverflow.net/questions/1977/why-is-the-gradient-normal Further Reading: 1. The Variational Pri

From playlist Summer of Math Exposition Youtube Videos

Lecture: Unconstrained Optimization (Derivative-Free Methods)

We introduce some of the basic techniques of optimization that do not require derivative information from the function being optimized, including golden section search and successive parabolic interpolation.

From playlist Beginning Scientific Computing
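
Golden section search, one of the derivative-free techniques named in the description above, can be sketched as follows. The test function, the bracket [0, 5], and the name `golden_section_search` are assumptions for illustration; the sketch also assumes the function has a single minimum inside the bracket.

```python
import math


def golden_section_search(f, a, b, tol=1e-6):
    """Shrink the bracket [a, b] around a minimum of a unimodal f, using only function values."""
    inv_phi = (math.sqrt(5) - 1) / 2  # 1 / golden ratio, about 0.618
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc < fd:
            # Minimum lies in [a, d]; the old c becomes the new d
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = f(c)
        else:
            # Minimum lies in [c, b]; the old d becomes the new c
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = f(d)
    return (a + b) / 2


# Hypothetical test function with its minimum at x = 2
x_min = golden_section_search(lambda x: (x - 2) ** 2 + 1, 0.0, 5.0)
```

Each iteration reuses one of the two interior evaluations, so only one new function evaluation is needed per step.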

4 Nandakumaran - An Introduction to deterministic optimal control and controllability

PROGRAM NAME: WINTER SCHOOL ON STOCHASTIC ANALYSIS AND CONTROL OF FLUID FLOW. DATES: Monday 03 Dec, 2012 - Thursday 20 Dec, 2012. VENUE: School of Mathematics, Indian Institute of Science Education and Research, Thiruvananthapuram. Stochastic analysis and control of fluid flow problems have

From playlist Winter School on Stochastic Analysis and Control of Fluid Flow

Nando de Freitas: "An Informal Mathematical Tour of Feature Learning, Pt. 3"

Graduate Summer School 2012: Deep Learning, Feature Learning "An Informal Mathematical Tour of Feature Learning, Pt. 3" Nando de Freitas, University of British Columbia Institute for Pure and Applied Mathematics, UCLA July 27, 2012 For more information: https://www.ipam.ucla.edu/program

From playlist GSS2012: Deep Learning, Feature Learning

Levon Nurbekyan: "Computational methods for nonlocal mean field games with applications"

High Dimensional Hamilton-Jacobi PDEs 2020 Workshop III: Mean Field Games and Applications "Computational methods for nonlocal mean field games with applications" Levon Nurbekyan - University of California, Los Angeles (UCLA) Abstract: We introduce a novel framework to model and solve me

From playlist High Dimensional Hamilton-Jacobi PDEs 2020

Definition of the Derivative | Part I

Description: One goal for the derivative is to define the notion of a tangent line to a curve. By approximating the slope of the tangent line by a limit of slopes of secant lines, we arrive at the definition of the derivative. Learning Objectives: 1) Graphically sketch the intuition behi

From playlist Calculus I (Limits, Derivative, Integrals) **Full Course**

Optimisation - an introduction: Professor Coralia Cartis, University of Oxford

Coralia Cartis (BSc Mathematics, Babesh-Bolyai University, Romania; PhD Mathematics, University of Cambridge (2005)) joined the Mathematical Institute at Oxford and Balliol College in 2013 as Associate Professor in Numerical Optimization. Previously, she worked as a research scientist

From playlist Data science classes