Introduction to the Gradient Theory and Formulas

From playlist Calculus 3

Find the Gradient Vector Field of f(x,y)=x^3y^5

This video explains how to find the gradient of a function. It also explains what the gradient tells us about the function. The gradient is also shown graphically. http://mathispower4u.com

From playlist The Chain Rule and Directional Derivatives, and the Gradient of Functions of Two Variables

Find the Gradient Vector Field of f(x,y)=ln(2x+5y)

This video explains how to find the gradient of a function. It also explains what the gradient tells us about the function. The gradient is also shown graphically. http://mathispower4u.com

From playlist The Chain Rule and Directional Derivatives, and the Gradient of Functions of Two Variables

Ex: Find the Gradient of the Function f(x,y)=xy

This video explains how to find the gradient of a function of two variables. The meaning of the gradient is explained and shown graphically. Site: http://mathispower4u.com

From playlist The Chain Rule and Directional Derivatives, and the Gradient of Functions of Two Variables

Ex: Find the Gradient of the Function f(x,y)=5xsin(xy)

This video explains how to find the gradient of a function of two variables. The meaning of the gradient is explained and shown graphically. Site: http://mathispower4u.com

From playlist The Chain Rule and Directional Derivatives, and the Gradient of Functions of Two Variables

Deriving Gradient in Spherical Coordinates (For Physics Majors)

*Disclaimer* I skipped over some of the more tedious algebra. I'm assuming that, since you're watching a multivariable calculus video, the algebra isn't the thing you need help with.

From playlist Math/Derivation Videos

Lec 19 | MIT 18.086 Mathematical Methods for Engineers II

Conjugate Gradient Method. View the complete course at: http://ocw.mit.edu/18-086S06 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 18.086 Mathematical Methods for Engineers II, Spring '06

From playlist CS294-112 Deep Reinforcement Learning Sp17

Data assimilation and machine learning by Serge Gratton

DISCUSSION MEETING: STATISTICAL PHYSICS OF MACHINE LEARNING ORGANIZERS: Chandan Dasgupta, Abhishek Dhar and Satya Majumdar DATE: 06 January 2020 to 10 January 2020 VENUE: Madhava Lecture Hall, ICTS Bangalore Machine learning techniques, especially “deep learning” using multilayer n

From playlist Statistical Physics of Machine Learning 2020

Lec 17 | MIT 18.085 Computational Science and Engineering I

Finite difference methods: equilibrium problems. A more recent version of this course is available at: http://ocw.mit.edu/18-085f08 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 18.085 Computational Science & Engineering I, Fall 2007

Find a Derivative Using The Limit Definition(Quadratic)

This video explains how to find the derivative of a quadratic function using the limit definition. Then the slope and equation of a tangent line are found.

From playlist Introduction and Formal Definition of the Derivative

Jorge Nocedal: "Tutorial on Optimization Methods for Machine Learning, Pt. 2"

Graduate Summer School 2012: Deep Learning, Feature Learning "Tutorial on Optimization Methods for Machine Learning, Pt. 2" Jorge Nocedal, Northwestern University Institute for Pure and Applied Mathematics, UCLA July 19, 2012 For more information: https://www.ipam.ucla.edu/programs/summ

From playlist GSS2012: Deep Learning, Feature Learning

Towards a theory of non-commutative optimization... - Rafael Oliveira

Computer Science/Discrete Mathematics Seminar I Topic: Towards a theory of non-commutative optimization: geodesic 1st and 2nd order methods for moment maps and polytopes Speaker: Rafael Oliveira Affiliation: University of Toronto Date: October 22, 2019 For more videos please visit http://v

From playlist Mathematics

Jorge Nocedal: "Tutorial on Optimization Methods for Machine Learning, Pt. 3"

Graduate Summer School 2012: Deep Learning, Feature Learning "Tutorial on Optimization Methods for Machine Learning, Pt. 3" Jorge Nocedal, Northwestern University Institute for Pure and Applied Mathematics, UCLA July 18, 2012 For more information: https://www.ipam.ucla.edu/programs/summ

From playlist GSS2012: Deep Learning, Feature Learning

iMAML: Meta-Learning with Implicit Gradients (Paper Explained)

Gradient-based Meta-Learning requires full backpropagation through the inner optimization procedure, which is a computational nightmare. This paper is able to circumvent this and implicitly compute meta-gradients by the clever introduction of a quadratic regularizer. OUTLINE: 0:00 - Intro

From playlist Papers Explained

Victorita Dolean: An introduction to domain decomposition methods - lecture 2

HYBRID EVENT Recorded during the meeting "Domain Decomposition for Optimal Control Problems" on September 06, 2022 by the Centre International de Rencontres Mathématiques (Marseille, France) Filmmaker: Guillaume Hennenfent Find this video and other talks given by worldwide mathematici

From playlist Jean-Morlet Chair - Gander/Hubert

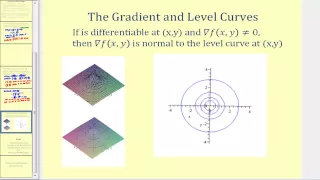

Download the free PDF http://tinyurl.com/EngMathYT A basic tutorial on the gradient field of a function. We show how to compute the gradient; its geometric significance; and how it is used when computing the directional derivative. The gradient is a basic property of vector calculus. NOT

From playlist Engineering Mathematics

This video explains what information the gradient provides about a given function. http://mathispower4u.wordpress.com/

From playlist Functions of Several Variables - Calculus

Yousef Saad: Subspace iteration and variants, revisited

HYBRID EVENT Recorded during the meeting "Numerical Methods and Scientific Computing" on November 9, 2021 by the Centre International de Rencontres Mathématiques (Marseille, France) Filmmaker: Guillaume Hennenfent Find this video and other talks given by worldwide mathematicians on

From playlist Numerical Analysis and Scientific Computing