This video explains how to determine the probability of an outcome given the odds of that outcome. http://mathispower4u.com

From playlist Probability

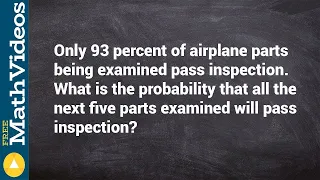

How to find the probability of consecutive events

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of favorable outcomes divided by the total number of possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

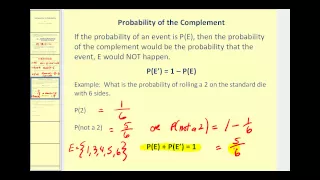

This video introduces probability and determines the probability of basic events. http://mathispower4u.yolasite.com/

From playlist Counting and Probability

Learn to find the "or" probability from a tree diagram

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of favorable outcomes divided by the total number of possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

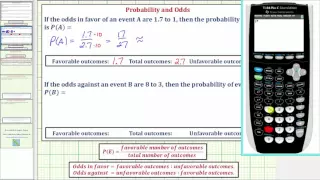

Ex: Determine Probability Given Odds

This video explains how to determine probability given odds.

From playlist Probability
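As a rough illustration of the conversion this video covers: odds of a : b in favor of an outcome correspond to a probability of a / (a + b). The sketch below assumes the odds are stated in favor; the function name `probability_from_odds` and the 3 : 5 example are hypothetical and not taken from the video.

```python
from fractions import Fraction


def probability_from_odds(in_favor: int, against: int) -> Fraction:
    """Convert odds in favor (in_favor : against) to a probability.

    Assumes the odds are stated in favor of the outcome; for odds
    against, swap the two arguments.
    """
    if in_favor < 0 or against < 0 or in_favor + against == 0:
        raise ValueError("odds must be non-negative and not both zero")
    return Fraction(in_favor, in_favor + against)


# Hypothetical example: odds of 3 : 5 in favor of an outcome.
p = probability_from_odds(3, 5)
print(p)         # 3/8
print(float(p))  # 0.375
```

Using `Fraction` keeps the result exact, which makes it easy to compare against a hand calculation.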

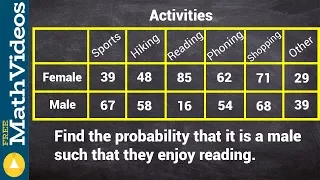

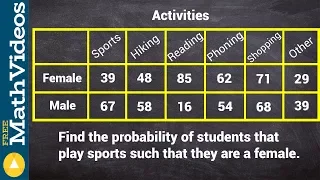

Finding the conditional probability from a two way frequency table

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of favorable outcomes divided by the total number of possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability
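A minimal sketch of reading a conditional probability off a two-way frequency table: P(column | row) is the count in the cell divided by the row total. The table contents, the labels, and the helper `conditional_probability` are all made up for illustration and do not come from the video.

```python
from fractions import Fraction

# Hypothetical two-way frequency table: rows are groups, columns are responses.
table = {
    "student": {"yes": 30, "no": 20},
    "teacher": {"yes": 10, "no": 40},
}


def conditional_probability(table: dict, row: str, column: str) -> Fraction:
    """Return P(column | row): the cell count divided by the row total."""
    row_total = sum(table[row].values())
    if row_total == 0:
        raise ValueError("cannot condition on a row with no observations")
    return Fraction(table[row][column], row_total)


# P(yes | student) = 30 / (30 + 20)
print(conditional_probability(table, "student", "yes"))  # 3/5
```

Conditioning on a row restricts the denominator to that row's total rather than the grand total of the table.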

Probability - Quantum and Classical

The Law of Large Numbers and the Central Limit Theorem. Probability explained with easy to understand 3D animations. Correction: Statement at 13:00 should say "very close" to 50%.

From playlist Physics

Using a contingency table to find the conditional probability

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of favorable outcomes divided by the total number of possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

Garden City Ruby 2014 - Pharmacist or a Doctor - What does your code base need?

By Pavan Sudarshan and Anandha Krishnan. You might know of every single code quality & metrics tool in the Ruby ecosystem and what they give you, but do you know: Which metrics do you currently need? Do you really need them? How do you make your team members own them? Wait, there was a me

From playlist Garden City Ruby 2014

Assumption-free prediction intervals for black-box regression algorithms - Aaditya Ramdas

Seminar on Theoretical Machine Learning. Topic: Assumption-free prediction intervals for black-box regression algorithms. Speaker: Aaditya Ramdas. Affiliation: Carnegie Mellon University. Date: April 21, 2020. For more videos, please visit http://video.ias.edu

From playlist Mathematics

From playlist Plenary talks One World Symposium 2020

GTAC 2010: Lightning Talks - Day 1

Google Test Automation Conference 2010, October 28-29, 2010. Lightning Talks by various GTAC 2010 attendees. Slides for this talk are available at https://docs.google.com/leaf?id=0AYfT-BFGDnQkZDVzcmhiNF8yMzZmanpqbTJmYg

From playlist GTAC 2010

GTAC 2015: Coverage is Not Strongly Correlated with Test Suite Effectiveness

http://g.co/gtac Slides: https://docs.google.com/presentation/d/1ttVaFhAfnbO0027Hd_Tz47P5xPHtLf4mLr12xFRMKig/pub Laura Inozemtseva (University of Waterloo). The coverage of a test suite is often used as a proxy for its ability to detect faults. However, previous studies that investigated

From playlist GTAC 2015

05b Machine Learning: Curse of Dimensionality

A lecture on the curse of dimensionality. Motivation for feature selection and dimensionality reduction. This is an undergraduate / graduate course that I teach once a year at The University of Texas at Austin. We build from fundamental spatial / subsurface, geoscience / engineering model

From playlist Machine Learning

What probability is. More free lessons at: http://www.khanacademy.org/video?v=3ER8OkqBdpE

From playlist Old Algebra

MountainWest RubyConf 2014 - Re-thinking Regression Testing by Mario Gonzalez

Regression testing is invaluable to knowing if changes to code have broken the software. However, it always seems to be the case that no matter how many tests you have in your regression buckets, bugs continue to happily creep in undetected. As a result, you are not sure if you can trust y

From playlist MWRC 2014

RubyConf2019 - Digging Up Code Graves in Ruby by Noah Matisoff

As codebases grow, having dead code is a common issue that teams need to tackle. Especially for consumer-facing products that frequently run A/B tests using feature flags, dead code paths can be a significant source of technic

From playlist RubyConf 2019

Determining the conditional probability from a contingency table

👉 Learn how to find the conditional probability of an event. Probability is the chance of an event occurring or not occurring. The probability of an event is given by the number of favorable outcomes divided by the total number of possible outcomes. Conditional probability is the chance of an event occurring

From playlist Probability

TensorFuzz: Debugging Neural Networks with Coverage-Guided Fuzzing | AISC

For slides and more information on the paper, visit https://aisc.ai.science/events/2019-08-26 Discussion lead: Tahseen Shabab Motivation: Machine learning models are notoriously difficult to interpret and debug. This is particularly true of neural networks. In this work, we introduce a

From playlist Architecture Tuning