Bayesian inference in motor learning

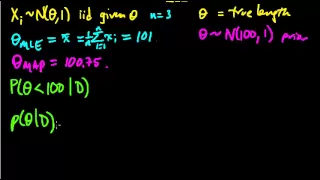

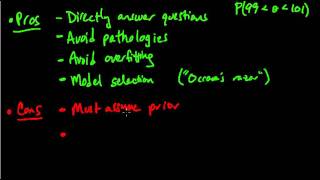

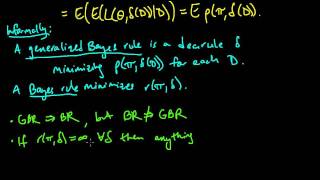

Bayesian inference is a statistical framework that can be applied to motor learning, in particular to adaptation. Adaptation is a short-term learning process in which performance improves gradually in response to a change in sensory information. Bayesian inference describes how the nervous system combines this sensory information with prior knowledge to estimate the position or other properties of an object in the environment. It can also describe how information from multiple senses (e.g. vision and proprioception) is combined for the same purpose. In either case, the resulting estimate is pulled most strongly toward whichever source of information is most certain (Wikipedia).
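The weighting rule in the paragraph above can be made concrete with a common textbook simplification. Assume every source (the prior and each sense) provides an independent Gaussian estimate. Under that assumption, the Bayesian estimate is a precision-weighted average, where precision is 1/variance, so the most certain source gets the largest weight. The sketch below is illustrative only. The function name `combine_cues` and the example numbers are hypothetical, and the Gaussian, independent-noise assumption is not stated in the original text.

```python
# Illustrative sketch: Bayesian combination of independent Gaussian estimates.
# ASSUMPTION: each source (prior or sensory cue) is Gaussian with independent noise.
# `combine_cues` is a hypothetical helper name, not an established API.

def combine_cues(means, variances):
    """Return (mean, variance) of the combined Gaussian estimate.

    Each source is weighted by its precision (1 / variance), so the
    least variable, most certain source dominates the result.
    """
    if len(means) != len(variances) or not means:
        raise ValueError("need one variance per mean, and at least one source")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    precisions = [1.0 / v for v in variances]
    total_precision = sum(precisions)
    mean = sum(p * m for p, m in zip(precisions, means)) / total_precision
    # The combined estimate is more certain than any single source.
    variance = 1.0 / total_precision
    return mean, variance


if __name__ == "__main__":
    # Hypothetical numbers: a prior belief about target position (0 cm, variance 4)
    # combined with a more reliable visual measurement (10 cm, variance 1).
    mean, var = combine_cues([0.0, 10.0], [4.0, 1.0])
    print(f"{mean:.2f}, {var:.2f}")  # prints "8.00, 0.80"
```

With these assumed values the combined estimate (8 cm) lies much closer to the visual measurement than to the prior, because the visual cue has four times the precision. The same function covers multisensory combination. For example, a visual and a proprioceptive estimate of hand position can be passed in as two cues with no prior.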