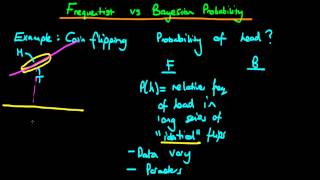

Bayesian vs frequentist statistics probability - part 1

This video provides an intuitive explanation of the difference between Bayesian and classical frequentist statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm …

From playlist Bayesian statistics: a comprehensive course

16 Sequential Bayes: Data order invariance

A proof of the fact that, for independent sequences of data, the order in which they are received does not affect the posterior distribution, and hence does not affect inference. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.

From playlist Bayesian statistics: a comprehensive course
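The order-invariance result can be checked numerically in the simplest conjugate case. The sketch below assumes a Beta prior and independent Bernoulli observations (an illustrative choice, not necessarily the model used in the video). Each posterior becomes the prior for the next observation, and every ordering of the same data ends at the same posterior. The function names and data are hypothetical.

```python
from itertools import permutations


def beta_bernoulli_update(alpha, beta, x):
    """Conjugate update of a Beta(alpha, beta) prior with one Bernoulli observation x in {0, 1}."""
    return alpha + x, beta + (1 - x)


def sequential_posterior(alpha, beta, data):
    """Feed observations one at a time; each posterior becomes the next prior."""
    for x in data:
        alpha, beta = beta_bernoulli_update(alpha, beta, x)
    return alpha, beta


# Hypothetical data: 1 = success, 0 = failure; uniform Beta(1, 1) prior.
data = [1, 0, 1, 1, 0]
forward = sequential_posterior(1, 1, data)
backward = sequential_posterior(1, 1, list(reversed(data)))

# Collect the final posterior for every possible ordering of the same observations.
all_orderings = {sequential_posterior(1, 1, order) for order in permutations(data)}
print(forward, backward, all_orderings)  # (4, 3) (4, 3) {(4, 3)}
```

The conjugate update only adds counts, and addition is commutative, so the invariance is visible directly. The general proof relies on the same idea: with independent data the likelihood factorises into a product, and the factors can be multiplied in any order.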

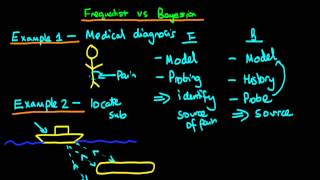

Bayesian vs frequentist statistics

This video provides an intuitive explanation of the difference between Bayesian and classical frequentist statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm …

From playlist Bayesian statistics: a comprehensive course

An introduction to the use of Bayes' rule in statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Unfortunately, Ox Educ is no more. Don't fret, however, as a whole …

From playlist Bayesian statistics: a comprehensive course

Bayesian vs frequentist statistics probability - part 2

This video provides a short introduction to the similarities and differences between Bayesian and Frequentist views on probability. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdi

From playlist Bayesian statistics: a comprehensive course

Tim Sullivan: Brittleness and robustness of Bayesian inference for complex systems

Find this video and other talks given by mathematicians from around the world on CIRM's Audiovisual Mathematics Library: http://library.cirm-math.fr. Discover all its functionalities: chapter markers and keywords to watch the parts of your choice in the video; videos enriched with abstracts, …

From playlist Numerical Analysis and Scientific Computing

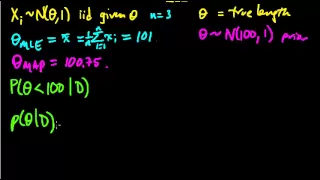

(ML 7.1) Bayesian inference - A simple example

Illustration of the main idea of Bayesian inference, in the simple case of a univariate Gaussian with a Gaussian prior on the mean (and known variances).

From playlist Machine Learning
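For the case described above (a univariate Gaussian likelihood with known variance $\sigma^2$ and a Gaussian prior $\mu \sim \mathcal{N}(\mu_0, \sigma_0^2)$ on the mean), the posterior is again Gaussian. Its precision is $1/\sigma_n^2 = 1/\sigma_0^2 + n/\sigma^2$ and its mean is $\mu_n = \sigma_n^2\left(\mu_0/\sigma_0^2 + \sum_i x_i/\sigma^2\right)$. The sketch below applies these standard conjugate formulas; the function name and numbers are hypothetical and are not taken from the video.

```python
def gaussian_mean_posterior(mu0, var0, var, xs):
    """Posterior N(mu_n, var_n) over the mean of N(mu, var) data, with prior mu ~ N(mu0, var0).

    Both var0 (prior variance) and var (noise variance) are treated as known.
    """
    n = len(xs)
    # Precisions (inverse variances) add: prior precision plus n times the data precision.
    post_precision = 1.0 / var0 + n / var
    post_var = 1.0 / post_precision
    # The posterior mean is a precision-weighted combination of the prior mean and the data.
    post_mean = post_var * (mu0 / var0 + sum(xs) / var)
    return post_mean, post_var


# Hypothetical numbers: prior N(0, 1), unit noise variance, two observations at 2.0.
mean, variance = gaussian_mean_posterior(mu0=0.0, var0=1.0, var=1.0, xs=[2.0, 2.0])
print(mean, variance)  # posterior mean 4/3, variance 1/3 (up to float rounding)
```

The posterior mean (4/3) lies between the prior mean (0) and the sample mean (2). The posterior variance (1/3) is smaller than either the prior variance or the noise variance.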

Conditional Probability: Bayes’ Theorem – Disease Testing (Table and Formula)

This video shows how to determine conditional probability using a table and using Bayes' theorem. @mathipower4u

From playlist Probability
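The table method and Bayes' theorem should give the same answer. The sketch below computes P(disease | positive test) both ways. It assumes hypothetical numbers (1% prevalence, 99% sensitivity, 5% false-positive rate, and a notional population of 10,000) that are not taken from the video.

```python
def posterior_positive(prevalence, sensitivity, false_positive_rate):
    """Bayes' theorem: P(D | +) = P(+ | D) P(D) / P(+)."""
    true_pos = sensitivity * prevalence
    false_pos = false_positive_rate * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def posterior_from_table(population, prevalence, sensitivity, false_positive_rate):
    """Same quantity from expected counts in a 2x2 table of disease status vs test result."""
    sick = population * prevalence
    healthy = population - sick
    true_pos = sensitivity * sick            # sick and test positive
    false_pos = false_positive_rate * healthy  # healthy but test positive
    return true_pos / (true_pos + false_pos)


# Hypothetical test characteristics.
p_formula = posterior_positive(0.01, 0.99, 0.05)
p_table = posterior_from_table(10_000, 0.01, 0.99, 0.05)
print(p_formula, p_table)  # both equal 1/6 up to float rounding
```

In table form, 99 of the 100 sick people test positive, as do 495 of the 9,900 healthy people. So only 99 / 594 = 1/6 of positive results are true positives, even though the test looks accurate.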

Comparing Bayesian optimization with traditional sampling

Welcome to video #2 of the Adaptive Experimentation series, presented by graduate student Sterling Baird @sterling-baird at the 18th IEEE Conference on eScience in Salt Lake City, UT (Oct 10-14, 2022). In this video, Sterling introduces Bayesian Optimization as an alternative method for …

From playlist Optimization tutorial

PB2 - Population-Based Bandit Optimization

Notion Link: https://ebony-scissor-725.notion.site/Henry-AI-Labs-Weekly-Update-July-15th-2021-a68f599395e3428c878dc74c5f0e1124 Chapters 0:00 Introduction 2:41 Hyperparameter Optimization 3:44 Population-Based Training 6:12 Evolution + Bayesian Optimization 8:54 ASHA 10:48 Results Thanks

From playlist AI Weekly Update - July 15th, 2021!

6 - Bayes' rule in inference - likelihood

Provides an introduction to Bayesian statistics (in particular, the likelihood) by running through a simple example of the application of Bayes' rule to inference over a binary parameter. If you are interested in seeing more of the material, arranged into a playlist, please …

From playlist Bayesian statistics: a comprehensive course
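Inference over a binary parameter reduces to weighting each of the two values by prior times likelihood and then normalising. The sketch below assumes a hypothetical coin that is either fair (P(heads) = 0.5) or biased (P(heads) = 0.8), with equal prior probability, and observes three heads and one tail. This example is illustrative and is not the one used in the video.

```python
def likelihood(p_heads, data):
    """Probability of an ordered sequence of flips (1 = heads, 0 = tails) given P(heads)."""
    result = 1.0
    for x in data:
        result *= p_heads if x == 1 else 1.0 - p_heads
    return result


def posterior(prior, p_heads_by_label, data):
    """Bayes' rule over a discrete parameter: prior x likelihood, divided by the evidence."""
    unnormalised = {
        label: prior[label] * likelihood(p_heads_by_label[label], data) for label in prior
    }
    evidence = sum(unnormalised.values())
    return {label: w / evidence for label, w in unnormalised.items()}


# Hypothetical two-valued parameter and equal prior weights.
p_heads_by_label = {"fair": 0.5, "biased": 0.8}
prior = {"fair": 0.5, "biased": 0.5}
post = posterior(prior, p_heads_by_label, [1, 1, 0, 1])
print(post)
```

Here the likelihoods are 0.5^4 = 0.0625 and 0.8^3 × 0.2 = 0.1024. Because the prior is flat, the posterior simply renormalises the likelihoods, giving P(biased | data) = 0.1024 / 0.1649 = 1024/1649, or about 0.62.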

Lecture 10D : Making full Bayesian learning practical

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013] Lecture 10D : Making full Bayesian learning practical

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

Efficient sampling through variable splitting-inspired (...) - Chainais - Workshop 2 - CEB T1 2019

Pierre Chainais (Ecole Centrale Lille) / 12.03.2019 Efficient sampling through variable splitting-inspired Bayesian hierarchical models. Markov chain Monte Carlo (MCMC) methods are an important class of computational techniques for solving Bayesian inference problems. Much research has been …

From playlist 2019 - T1 - The Mathematics of Imaging
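The abstract refers to MCMC only in general terms. For readers unfamiliar with the basic idea, the sketch below shows plain random-walk Metropolis, the simplest MCMC method. It is not the variable-splitting approach of the talk. The target (a standard normal standing in for an unnormalised log-posterior), the step size, the chain length and the burn-in are all hypothetical choices.

```python
import math
import random


def log_target(theta):
    """Hypothetical unnormalised log-posterior: a standard normal, up to an additive constant."""
    return -0.5 * theta * theta


def random_walk_metropolis(log_p, theta0, n_samples, step=1.0, seed=0):
    """Random-walk Metropolis: Gaussian proposals, accept with prob min(1, p(new) / p(old))."""
    rng = random.Random(seed)
    theta, log_p_theta = theta0, log_p(theta0)
    samples = []
    for _ in range(n_samples):
        proposal = theta + rng.gauss(0.0, step)
        log_p_prop = log_p(proposal)
        # The proposal is symmetric, so the Hastings correction cancels.
        accept_prob = math.exp(min(0.0, log_p_prop - log_p_theta))
        if rng.random() < accept_prob:
            theta, log_p_theta = proposal, log_p_prop
        samples.append(theta)  # on rejection, the current state is recorded again
    return samples


# Discard the first 1,000 draws as burn-in, then summarise the rest.
draws = random_walk_metropolis(log_target, theta0=0.0, n_samples=20_000, step=2.0)[1_000:]
mean = sum(draws) / len(draws)
var = sum((d - mean) ** 2 for d in draws) / len(draws)
print(mean, var)
```

Only differences of log-densities appear in the acceptance step, so the normalising constant (the evidence) is never needed. This is what makes MCMC practical for Bayesian posteriors. For this target, the sample mean and variance should come out close to 0 and 1.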

Lecture 10/16 : Combining multiple neural networks to improve generalization

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013]. Sections: 10A Why it helps to combine models; 10B Mixtures of Experts; 10C The idea of full Bayesian learning; 10D Making full Bayesian learning practical; 10E Dropout: an efficient way to combine neural nets.

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

Lecture 10.4 — Making full Bayesian learning practical [Neural Networks for Machine Learning]

Lecture from the course Neural Networks for Machine Learning, as taught by Geoffrey Hinton (University of Toronto) on Coursera in 2012. Link to the course (login required): https://class.coursera.org/neuralnets-2012-001

From playlist [Coursera] Neural Networks for Machine Learning — Geoffrey Hinton

Nineteenth Imaging & Inverse Problems (IMAGINE) OneWorld SIAM-IS Virtual Seminar Series Talk

Date: Wednesday, March 24, 2021, 10:00am Eastern Time Zone (US & Canada) Speaker: Marcelo Pereyra, Heriot-Watt University Abstract: Plug & Play (PnP) methods have become ubiquitous in Bayesian imaging. These methods derive Minimum Mean Square Error (MMSE) or Maximum A Posteriori (MAP) …

From playlist Imaging & Inverse Problems (IMAGINE) OneWorld SIAM-IS Virtual Seminar Series

Andreas Krause: "Safe and Efficient Exploration in Reinforcement Learning"

Intersections between Control, Learning and Optimization 2020 "Safe and Efficient Exploration in Reinforcement Learning" Andreas Krause - ETH Zurich Abstract: At the heart of Reinforcement Learning lies the challenge of trading exploration -- collecting data for learning better models --

From playlist Intersections between Control, Learning and Optimization 2020

Automated Deep Learning: Joint Neural Architecture and Hyperparameter Search (algorithm) | AISC

Toronto Deep Learning Series, 10 December 2018 Paper: https://arxiv.org/abs/1807.06906 Discussion Lead: Mark Donaldson (Ryerson University) Discussion Facilitator: Masoud Hashemi (RBC) Host: Shopify Date: Dec 10th, 2018 Towards Automated Deep Learning: Efficient Joint Neural Architecture and Hyperparameter Search …

From playlist Architecture Tuning

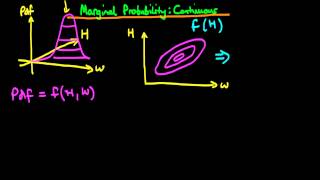

1 - Marginal probability for continuous variables

This explains what is meant by a marginal probability for continuous random variables, how to calculate marginal probabilities and the graphical intuition behind the method. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.c

From playlist Bayesian statistics: a comprehensive course
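A marginal density for a continuous variable is obtained by integrating the other variable out of the joint density: $f_X(x) = \int f(x, y)\,dy$. The sketch below does this numerically with the midpoint rule. It assumes a hypothetical joint density $f(x, y) = x + y$ on the unit square (not taken from the video), whose exact marginal is $f_X(x) = x + \tfrac{1}{2}$.

```python
def joint_pdf(x, y):
    """Hypothetical joint density f(x, y) = x + y on the unit square; it integrates to 1."""
    if 0.0 <= x <= 1.0 and 0.0 <= y <= 1.0:
        return x + y
    return 0.0


def marginal_x(x, n=1000):
    """Approximate f_X(x) = integral over y of f(x, y), using the midpoint rule on [0, 1]."""
    h = 1.0 / n
    return h * sum(joint_pdf(x, (i + 0.5) * h) for i in range(n))


def total_probability(n=200):
    """Integrate the marginal over x; a valid marginal density should integrate to 1."""
    h = 1.0 / n
    return h * sum(marginal_x((i + 0.5) * h) for i in range(n))


# Analytically, f_X(0.3) = 0.3 + 0.5 = 0.8.
print(marginal_x(0.3), total_probability())
```

Graphically, each value of `marginal_x(x)` is the area under a vertical slice of the joint density surface at that x. The midpoint rule is exact for integrands that are linear in y, so here the only error is floating-point rounding.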