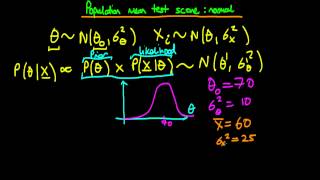

36 - Population mean test score - normal prior and likelihood

This video provides an example of Bayesian inference for the case of a normal prior and normal likelihood. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Unfortunately,

From playlist Bayesian statistics: a comprehensive course

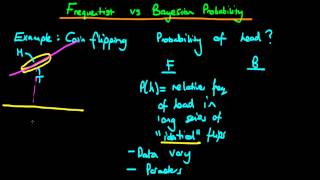

Bayesian vs frequentist statistics probability - part 1

This video provides an intuitive explanation of the difference between Bayesian and classical frequentist statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Unfo

From playlist Bayesian statistics: a comprehensive course

30 - Normal prior and likelihood - known variance

Provides an introduction to the example which will be used to describe inference for the case of a normal likelihood, with known variance, and a normal prior distribution. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com

From playlist Bayesian statistics: a comprehensive course

35 - Normal prior and likelihood - posterior predictive distribution

This video provides a derivation of the normal posterior predictive distribution for the case of a normal prior distribution and likelihood. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4o

From playlist Bayesian statistics: a comprehensive course

What is a probability distribution?

An introduction to probability distributions - both discrete and continuous - via simple examples. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm For more information

From playlist Bayesian statistics: a comprehensive course

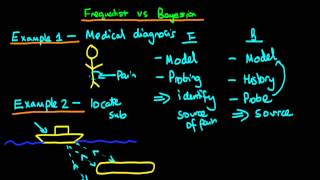

Bayesian vs frequentist statistics

This video provides an intuitive explanation of the difference between Bayesian and classical frequentist statistics. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdiokWB9gEpm Un

From playlist Bayesian statistics: a comprehensive course

What the Heck is Bayesian Stats ?? : Data Science Basics

What's all the hype about Bayesian statistics? My Patreon : https://www.patreon.com/user?u=49277905

From playlist Bayesian Statistics

Bayesian vs frequentist statistics probability - part 2

This video provides a short introduction to the similarities and differences between Bayesian and Frequentist views on probability. If you are interested in seeing more of the material, arranged into a playlist, please visit: https://www.youtube.com/playlist?list=PLFDbGp5YzjqXQ4oE4w9GVWdi

From playlist Bayesian statistics: a comprehensive course

39 - The gamma distribution - an introduction

This video provides an introduction to the gamma distribution: describing it mathematically, discussing example situations which can be modelled using a gamma in Bayesian inference, then going on to discuss how its two parameters affect the shape of the distribution intuitively, and finall

From playlist Bayesian statistics: a comprehensive course

Bayes Billiards with Tom Crawford

Bayes' Theorem allows us to assign a probability to an unknown fact. Thomas Bayes himself described an experiment with a billiard table, which is brilliantly explained by Hannah Fry and Matt Parker here https://www.youtube.com/watch?v=7GgLSnQ48os Brian Cox and David Spiegelhalter did a 1

From playlist Collaborations

Lecture 10/16 : Combining multiple neural networks to improve generalization

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013] 10A Why it helps to combine models 10B Mixtures of Experts 10C The idea of full Bayesian learning 10D Making full Bayesian learning practical 10E Dropout: an efficient way to combine neural nets

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

The Surprisingly Effective Magic of Partial Pooling

My Patreon : https://www.patreon.com/user?u=49277905 Partial Pooling Blog Post : https://conductrics.com/prediction-pooling-and-shrinkage 0:00 Intro 7:00 Intuition 9:53 Bayesian Magic Icon References : Coffee shop icons created by smalllikeart - Flaticon https://www.flaticon.com/free-i

From playlist Bayesian Statistics

Supercharging Decision Making with Bayes

Bayesian Decision Theory is a fundamental statistical approach to the problem of pattern classification. It is considered the ideal pattern classifier and is often used as the benchmark for other algorithms because its decision rule automatically minimizes its loss function. PUBLICATION P

From playlist Machine Learning

Bayesian Statistics: An Introduction

See all my videos here: http://www.zstatistics.com/videos/ 0:00 Introduction 2:25 Frequentist vs Bayesian 5:55 Bayes' Theorem 10:45 Visual Example 15:05 Bayesian Inference for a Normal Mean 24:30 Conjugate priors 32:55 Credible Intervals

From playlist Statistical Inference (7 videos)

Statistical Rethinking Fall 2017 - week04 lecture08

Week 04, lecture 08 for Statistical Rethinking: A Bayesian Course with Examples in R and Stan, taught at MPI-EVA in Fall 2017. This lecture covers Chapter 6. Slides are available here: https://speakerdeck.com/rmcelreath Additional information on textbook and R package here: http://xcel

From playlist Statistical Rethinking Fall 2017

Statistical Learning: 8.6 Bayesian Additive Regression Trees

Statistical Learning, featuring Deep Learning, Survival Analysis and Multiple Testing You are able to take Statistical Learning as an online course on EdX, and you are able to choose a verified path and get a certificate for its completion: https://www.edx.org/course/statistical-learning

From playlist Statistical Learning

MIT 18.650 Statistics for Applications, Fall 2016 View the complete course: http://ocw.mit.edu/18-650F16 Instructor: Philippe Rigollet In this lecture, Prof. Rigollet talked about the Bayesian approach, Bayes' rule, posterior distributions, and non-informative priors. License: Creative Commons

From playlist MIT 18.650 Statistics for Applications, Fall 2016

Why not to be afraid of priors (too much), Paul-Christian Bürkner - Bayes@Lund 2018

More info about Bayes@Lund, including slides: https://bayesat.github.io/lund2018/bayes_at_lund_2018.html

From playlist Bayes@Lund 2018

Statistical Rethinking - Lecture 08

Lecture 08 - Model comparison (2) - Statistical Rethinking: A Bayesian Course with R Examples

From playlist Statistical Rethinking Winter 2015

How to run A/B Tests as a Data Scientist!

Let's learn how and why you should use Bayesian testing, along with some advantages of the Bayesian approach over the frequentist approach, with REAL data/code. Note: the Bayesian approach isn't necessarily better in every way - it is another perspective for looking at data. CODE: https://github.com/a

From playlist A/B Testing