CERIAS Security: On the Evolution of Adversary Models for Security Protocols 1/6

Clip 1/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

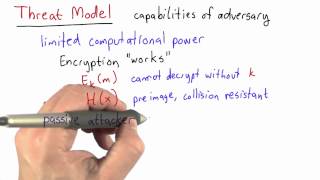

Threat Model Solution - Applied Cryptography

This video is part of an online course, Applied Cryptography. Check out the course here: https://www.udacity.com/course/cs387.

From playlist Applied Cryptography

CERIAS Security: On the Evolution of Adversary Models for Security Protocols 3/6

Clip 3/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

CERIAS Security: On the Evolution of Adversary Models for Security Protocols 6/6

Clip 6/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

CERIAS Security: On the Evolution of Adversary Models for Security Protocols 5/6

Clip 5/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

CERIAS Security: On the Evolution of Adversary Models for Security Protocols 4/6

Clip 4/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

CERIAS Security: On the Evolution of Adversary Models for Security Protocols 2/6

Clip 2/6. Speaker: Virgil D. Gligor · University of Maryland. Invariably, new technologies introduce new vulnerabilities which, in principle, enable new attacks by increasingly potent adversaries. Yet new systems are more adept at handling well-known attacks by old adversaries than anti

From playlist The CERIAS Security Seminars 2006

Threat Model - Applied Cryptography

This video is part of an online course, Applied Cryptography. Check out the course here: https://www.udacity.com/course/cs387.

From playlist Applied Cryptography

Network Security: Classical Encryption Techniques

Fundamental concepts of encryption techniques are discussed. Topics: symmetric cipher model, substitution techniques, transposition techniques, product ciphers, and steganography.

From playlist Network Security

Lecture 16 | Adversarial Examples and Adversarial Training

In Lecture 16, guest lecturer Ian Goodfellow discusses adversarial examples in deep learning. We discuss why deep networks and other machine learning models are susceptible to adversarial examples, and how adversarial examples can be used to attack machine learning systems. We discuss pote

From playlist Top 10 Tutorials and Talks: Adversarial Machine Learning

Near-optimal Evasion of Randomized Convex-inducing Classifiers in Adversarial Environments | AISC

For slides and more information on the paper, visit https://aisc.a-i.science/events/2019-05-23. Discussion lead: Pooria Madani. Motivation: Classifiers are often used to detect malicious activities in adversarial environments. Sophisticated adversaries would attempt to find information a

From playlist Generative Models

Adversarial Training (and Testing) | Stanford CS224U Natural Language Understanding | Spring 2021

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai. To learn more about this course, visit: https://online.stanford.edu/courses/cs224u-natural-language-understanding. To follow along with the course schedule and s

From playlist Stanford CS224U: Natural Language Understanding | Spring 2021

Generalizable Adversarial Robustness to Unforeseen Attacks - Soheil Feizi

Seminar on Theoretical Machine Learning. Topic: Generalizable Adversarial Robustness to Unforeseen Attacks. Speaker: Soheil Feizi. Affiliation: University of Maryland. Date: June 23, 2020. For more videos, please visit http://video.ias.edu

From playlist Mathematics

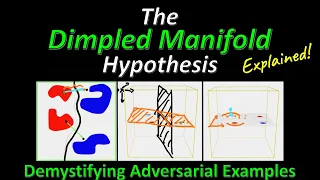

The Dimpled Manifold Model of Adversarial Examples in Machine Learning (Research Paper Explained)

#adversarialexamples #dimpledmanifold #security Adversarial Examples have long been a fascinating topic for many Machine Learning researchers. How can a tiny perturbation cause the neural network to change its output by so much? While many explanations have been proposed over the years, t

From playlist Papers Explained

Adversarial Machine Learning Ian Goodfellow

Google's Ian Goodfellow joined us to share his research. Full slides: http://www.iangoodfellow.com/slides/2018-05-24.pdf

From playlist Top 10 Tutorials and Talks: Adversarial Machine Learning

Attack of the Tails: Yes, you Really can Backdoor Federated Learning

A Google TechTalk, 2020/7/30, presented by Dimitris Papailiopoulos, University of Wisconsin-Madison ABSTRACT: Due to its decentralized nature, Federated Learning (FL) lends itself to adversarial attacks in the form of backdoors during training. A range of FL backdoor attacks have been in

From playlist 2020 Google Workshop on Federated Learning and Analytics

Adversarial Testing | Stanford CS224U Natural Language Understanding | Spring 2021

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai. To learn more about this course, visit: https://online.stanford.edu/courses/cs224u-natural-language-understanding. To follow along with the course schedule and s

From playlist Stanford CS224U: Natural Language Understanding | Spring 2021

Stanford Webinar with Dan Boneh - Hacking AI: Security & Privacy of Machine Learning Models

In this webinar, Professor Dan Boneh discusses recent work at the intersection of cybersecurity and machine learning. Specifically, he explores an area known as “adversarial machine learning” which looks at the stability of machine learning models in the presence of adversarial behavior.

From playlist Artificial Intelligence

(ML 14.4) Hidden Markov models (HMMs) (part 1)

Definition of a hidden Markov model (HMM). Description of the parameters of an HMM (transition matrix, emission probability distributions, and initial distribution). Illustration of a simple example of an HMM.

From playlist Machine Learning

DP-SGD Privacy Analysis is Tight!

A Google TechTalk, presented by Milad Nasr, 2020/08/21 ABSTRACT: Differentially private stochastic gradient descent (DP-SGD) provides a method to train a machine learning model on private data without revealing anything specific to that particular dataset. As a tool, differential privacy

From playlist Differential Privacy for ML